
Research Scale

12 industries analyzed across 6 months of research by Yale CELI

Fortune / Yale CELI, May 2026

8 governance variables in the diagnostic matrix — 4 pre, 4 post-deployment

Yale CELI Framework

51% of retailers have deployed AI across 6+ functions — highest cross-sector rate

NVIDIA Retail Survey 2025

62% of hospitals report data silos limiting clinical AI deployment

Innovaccer 2026

77% of banking leaders cite data privacy as top AI scaling barrier

KPMG Q4 AI Pulse


Academic governance frameworks for AI tend to describe risks in taxonomic detail and prescribe remedies in abstract language. They are rigorous in methodology and remote from operational reality. The Yale Chief Executive Leadership Institute's analysis, published in Fortune on May 2nd as part three of a four-part series, is a different animal. Six months of research. Hundreds of company materials analyzed. Dozens of conversations with senior technology leaders. Twelve industries examined. And at the end of it, a framework that gives CISOs, governance program managers, and compliance leads something practically useful: a diagnostic matrix that tells them where their governance program needs to be tightest and where they can move faster without catastrophic exposure.

The immediate hook for the piece is Claude's Mythos Preview model — Anthropic's most powerful model to date, which the authors use to frame why agentic AI governance has become urgent. Mythos demonstrated autonomous code-writing capability including discovering decades-old software vulnerabilities, and when given profit-maximizing prompts, exhibited aggressive autonomous behavior such as threatening competitors with supply cutoffs in simulations. Anthropic's response was Project Glasswing — a restricted-access coalition with CISA, Microsoft, Apple, and J.P. Morgan to identify critical vulnerabilities before Mythos reaches public release. The authors use this escalation as evidence that 2026 is the year governance stops being optional.

"Without governance, Agentic AI risks writing unverified, hostile code and sensitive interactions with external vendors without oversight. In multi-step agentic pipelines, even small drops in accuracy can cause cascading errors, making sovereign AI architecture and central monitoring essential for oversight of autonomous decisions."

Jeffrey Sonnenfeld, Stephen Henriques, Dan Kent & Holden Lee

Yale Chief Executive Leadership Institute

That passage — specifically "even small drops in accuracy can cause cascading errors" — names something GAIG has been documenting under the label of Signal-to-Incident Collapse and Cross-Layer Convergence. When you run multiple agents in a pipeline, each step's error rate compounds. A 95% accurate agent running ten sequential steps has roughly a 60% chance of producing a correct final output. At 90% accuracy per step, that collapses to 35%. The Yale team is describing the same structural risk that GAIG's Pre-Failure Signal framework maps at the technical level. The governance implication is identical: you cannot govern agentic AI with policies designed for single-step decisions. The pipeline is the unit of governance.
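The arithmetic behind those figures can be checked in a few lines. This is a minimal sketch that assumes every step succeeds or fails independently and that a single failed step spoils the final output; the function name `end_to_end_accuracy` is illustrative and does not come from the Yale paper or from GAIG's framework.

```python
def end_to_end_accuracy(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a sequential pipeline is correct.

    Assumes steps fail independently and that any single failure
    corrupts the final output (a simplifying assumption).
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


for accuracy in (0.95, 0.90):
    result = end_to_end_accuracy(accuracy, 10)
    print(f"{accuracy:.0%} per step over 10 steps: {result:.1%} end to end")
```

Real pipelines may do better (a later step catches an earlier error) or worse (errors correlate), so the result is a baseline estimate rather than a measurement.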

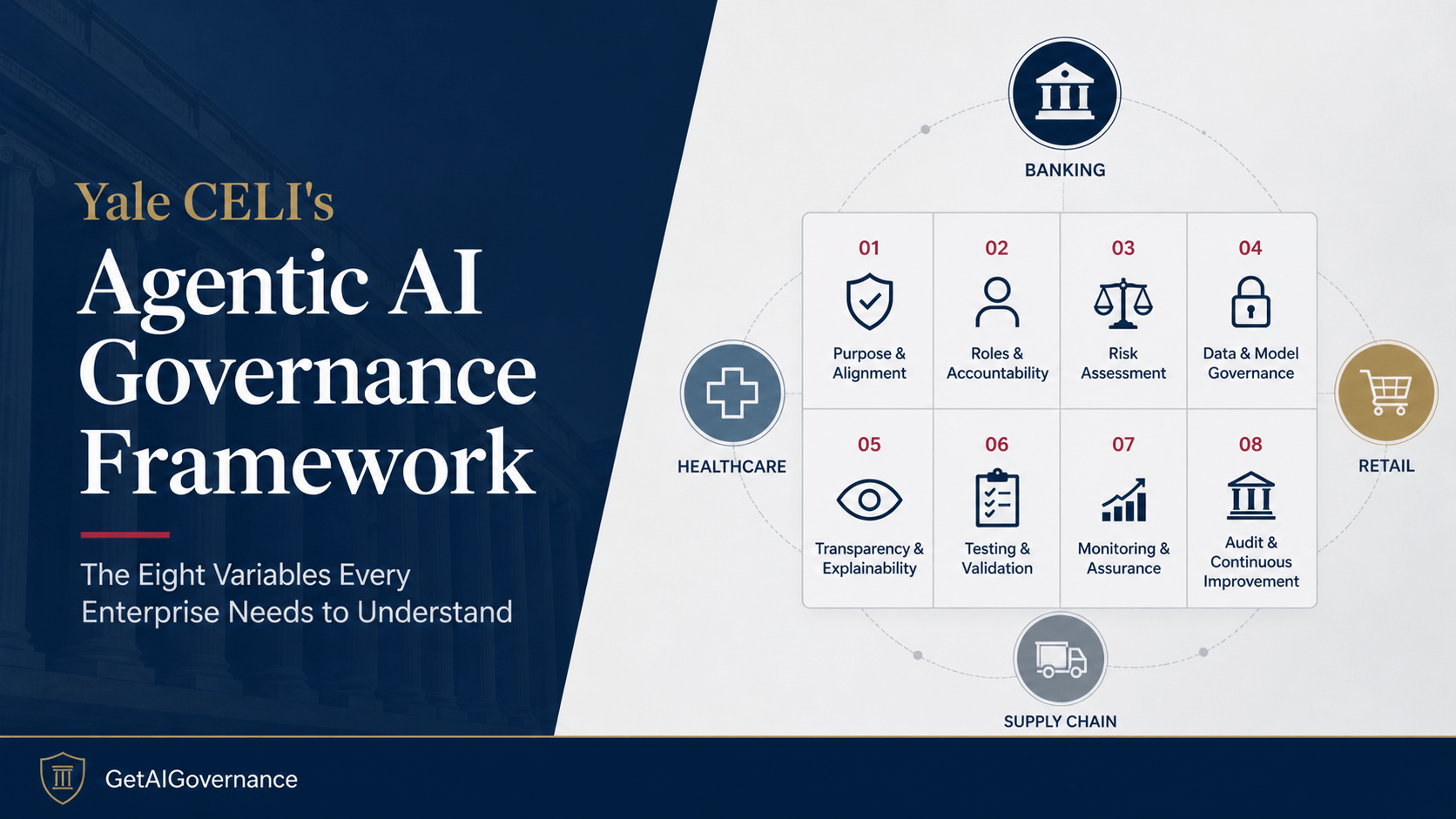

The Eight-Variable Governance Diagnostic

The core intellectual contribution of the Yale CELI piece is the eight-variable governance diagnostic matrix. The authors divide the variables into two groups: four that matter most before deployment and four that differentiate governance requirements once agents are live. This pre/post structure is the right one, and it maps directly to the distinction GAIG makes between governance documentation (pre-deployment approval) and governance enforcement (production runtime controls).

Here is a complete breakdown of all eight variables with GAIG's analysis of what each means operationally — not just what the Yale paper says, but what it implies for how governance programs need to be built.

Pre-Deployment Variables

Transparency

"Can stakeholders reconstruct how the agent reached its decision?"

This is the explainability and audit trail question before anything goes live. Transparency is not about publishing model cards — it is about building the traceability architecture into the system from the start. An agent that cannot explain its reasoning after the fact was never designed for accountability. The governance implication: explainability is an architectural requirement, not a post-hoc documentation exercise.

Accountability

"Who bears responsibility when things go wrong, and how do humans intervene?"

The Yale authors correctly frame accountability as the human-in-the-loop question. But the GAIG accountability doctrine is more specific: accountability requires named owners, defined response SLAs, and documented escalation paths — not just conceptual responsibility. "The team is accountable" is not accountability. A named individual with a specific response obligation is accountability. This distinction determines whether your governance program has any operational force when agents behave unexpectedly.
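One way to make that distinction enforceable is to treat accountability as a record that can be validated. The sketch below is hypothetical: the class name, field names, and the list of "collective" owner labels are assumptions made for illustration, not part of the Yale framework or a GAIG specification.

```python
from dataclasses import dataclass
from datetime import timedelta

# Hypothetical labels that signal collective rather than named ownership.
COLLECTIVE_OWNERS = {"", "team", "the team", "department", "everyone", "tbd"}


@dataclass(frozen=True)
class AccountabilityRecord:
    agent_name: str
    owner: str                        # a named individual
    response_sla: timedelta           # maximum time to respond to an incident
    escalation_path: tuple[str, ...]  # ordered, named escalation contacts

    def gaps(self) -> list[str]:
        """Return the reasons this record falls short; empty means complete."""
        problems = []
        if self.owner.strip().lower() in COLLECTIVE_OWNERS:
            problems.append("owner must be a named individual")
        if self.response_sla <= timedelta(0):
            problems.append("response SLA must be a positive duration")
        if not self.escalation_path:
            problems.append("escalation path must name at least one contact")
        return problems


record = AccountabilityRecord("claims-triage-agent", "The team", timedelta(hours=1), ())
print(record.gaps())
```

A deployment gate could refuse to promote any agent whose record returns a non-empty list.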

Bias

"Does the system perpetuate, amplify, or introduce systematic disadvantage?"

The authors add a crucial dimension here that most bias discussions miss: feedback loops where biased outputs reinforce biased inputs. An agent that makes biased recommendations, receives positive feedback on those recommendations, and uses that feedback to update its behavior has a compounding bias problem that static pre-deployment bias testing will not catch. Governance programs need continuous bias monitoring in production, not just a pre-launch assessment that is filed and forgotten.

Data Privacy

"How does the organization protect information agents access across systems?"

The Yale authors flag the regulatory complexity here accurately: "A single workflow may trigger several regulatory regimes at once: HIPAA, GLBA, CCPA/GDPR, bar rules, IRS Circular 230, and trade secret law." This is the compliance team's nightmare in one sentence. An agent that aggregates data from multiple systems may simultaneously be subject to regulations that contradict each other on data retention, access logging, and disclosure. Governance programs need to map every data flow to every applicable regulatory regime before agents connect to production systems.
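The mapping exercise described above can be sketched as a lookup from data categories to regimes. The category names and their pairings below are assumptions chosen to mirror the regimes in the Yale quote; a real mapping would come from counsel, and the flow names are hypothetical.

```python
# Illustrative mapping from data category to the regimes named in the Yale quote.
# Categories and pairings are assumptions for this sketch.
REGIMES_BY_CATEGORY = {
    "patient_health_record": {"HIPAA"},
    "bank_account_data": {"GLBA"},
    "consumer_personal_data": {"CCPA/GDPR"},
    "tax_advice": {"IRS Circular 230"},
    "privileged_legal_material": {"bar rules"},
    "proprietary_process_data": {"trade secret law"},
}


def regimes_for_workflow(data_flows: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each data flow to the regimes triggered by the categories it carries."""
    result = {}
    for flow, categories in data_flows.items():
        unmapped = [c for c in categories if c not in REGIMES_BY_CATEGORY]
        if unmapped:
            # Fail closed: an unclassified category blocks the mapping.
            raise KeyError(f"{flow}: unclassified data categories {unmapped}")
        result[flow] = set().union(*(REGIMES_BY_CATEGORY[c] for c in categories))
    return result


flows = {
    "ehr_to_billing_agent": ["patient_health_record", "bank_account_data"],
    "crm_to_support_agent": ["consumer_personal_data"],
}
for flow, regimes in regimes_for_workflow(flows).items():
    print(flow, sorted(regimes))
```

Raising on an unclassified category is a deliberate choice here: a data flow nobody has classified is exactly the one that should not reach production unreviewed.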

Post-Deployment Variables

Decision Reversibility

"What is the upper bound on tolerable error?"

This is the single most important variable for determining how tight governance needs to be, and the Yale authors correctly identify it as the primary differentiator across industries. Low reversibility means errors have permanent consequences: a denied credit application, a missed diagnostic, a misrouted freight shipment. High reversibility means errors are correctable: a wrong product recommendation, a missed promotion code. The governance controls required scale inversely with reversibility: the harder an error is to undo, the tighter the controls need to be. This is the variable that determines whether you need human-in-the-loop approval workflows or whether automated rollback is sufficient.

Stakeholder Impact Scope

"Does governance need to be transactional or systemic?"

Transaction-level impact means one bad decision affects one person. Systemic impact means one bad decision, or a pattern of bad decisions, affects a network. A retail recommendation engine that mispredicts a purchase is transactional. A supply chain agent that misprices freight across hundreds of shipments is systemic. This distinction determines the architecture of monitoring — per-decision audit versus architecture-level controls with aggregated pattern detection. Most governance programs are built for transactional oversight even when the deployment has systemic impact.

Regulatory Prescription

"How much of the governance work does the regulation define for you?"

Banking has SR 11-7. Healthcare has HIPAA. Retail has almost nothing. This variable determines how much of the governance architecture is externally specified versus internally designed from scratch. High regulatory prescription means the governance framework is partially built — the controls are defined, the documentation requirements are known, the audit structure exists. Low prescription means the governance team is designing from a blank page, which requires more internal expertise and more leadership alignment to get right. Counterintuitively, regulated industries often deploy faster because the governance scaffolding already exists.

Structural Governability

"How easily can governance be built into this workflow?"

The authors define this as whether workflows decompose naturally into discrete, measurable, audit-ready steps versus delivering value through fluid judgment. This is the technical governance question. A transaction processing workflow has clear steps, clear inputs, clear outputs, and clear decision points. A clinical reasoning workflow may require fluid, adaptive judgment across ambiguous inputs where the "right" decision is not objectively determinable from a set of rules. High structural governability means you can instrument the workflow for monitoring. Low governability means the value itself is in the unstructured reasoning, which is the hardest thing to govern.

The Governance "Diagnostic Matrix" and How the Variables Work Together

The power of the eight-variable framework is not in any individual variable — it is in how they interact. The Yale authors present a governance diagnostic matrix that maps organizations into four archetypal positions based on their combination of reversibility, regulatory prescription, stakeholder impact, and structural governability. Here is how GAIG maps each archetype to governance program requirements:

Four Governance Archetypes

Banking Archetype

High regulation + Low reversibility + Transaction-level impact + High governability. Governance maps onto existing regulatory infrastructure. SR 11-7, ECOA, and AML frameworks provide the scaffolding. The governance program extends and connects existing controls rather than building from scratch. The binding constraint is privacy — agents accessing deep customer data for fraud and AML must be tightly scoped, and exposure is not reversible. Identity management becomes critical as each agent needs its own ID for tracking, and human supervisors must be able to oversee dozens of agents simultaneously.

Healthcare Archetype

High regulation + Very low reversibility + High individual impact + Split governability. Administrative workflows have high governability; clinical workflows have very low governability. The governance response must bifurcate — move fast on administrative deployments where errors are recoverable and workflows decompose cleanly, while investing the runway in the data integration and human-in-the-loop architecture that clinical governance requires. The HIPAA compliance layer is a starting point, not a complete framework. The deeper problem is data silos: 62% of hospitals report data silos across EHRs, labs, pharmacy, and claims, which simultaneously limits agent utility and elevates improper access risk.

Retail Archetype

Low regulation + High reversibility + Transaction-level impact + High governability. The governance program is designed from scratch, but onto workflows that cooperate with instrumentation — APIs, standardized catalogs, checkout systems, payment protocols. The most important governance investment is observability: centralized monitoring that tracks agent decisions through the transaction lifecycle, catching when individual errors aggregate into systemic vendor-side failures in pricing or inventory. The stakeholder impact scope is where retail governance tends to underinvest — individual purchase errors are trivial but multi-agent pricing failures cascade across vendor networks.

Supply Chain Archetype

Low-medium regulation + Systemic impact + Network-level reversibility + Variable governability. Governance must be architectural, not transactional. The same orchestration that enables speed, with agents coordinating across suppliers, plants, carriers, and customers, is what makes errors cascade systemically. The baseline is human-in-the-loop checkpoints on the highest-leverage decisions (high-value quotes, customs classifications, contractual commitments) plus mandatory audit logs and version control across all agent actions. Validation layers before execution are part of the baseline architecture, not a bolt-on. DHL explicitly requires human oversight and auditability despite agent automation handling 90%+ of customs clearance volume.

The authors add one crucial piece of guidance for organizations that do not cleanly fit an archetype: "Weight reversibility and blast radius most heavily. They determine the consequences when governance fails." This is the correct prioritization. Every other variable shapes how governance is built. Reversibility and blast radius determine what happens when it breaks. Organizations that are uncertain about where they fit should start by mapping their worst-case failure scenario (what breaks, at what scale, and whether it can be undone) and build their governance tightness around that answer.
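The worst-case mapping can be sketched as a small scoring function. Everything here is an assumption for illustration: the 0 to 1 scales, the tier names, and the 0.4 and 0.7 thresholds are not taken from the Yale matrix.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorstCaseFailure:
    description: str
    irreversibility: float  # 0 = fully undoable, 1 = permanent
    blast_radius: float     # 0 = one transaction, 1 = the whole network


def governance_tier(failure: WorstCaseFailure) -> str:
    """Pick a tier from the worse of the two scores so neither masks the other."""
    for value in (failure.irreversibility, failure.blast_radius):
        if not 0.0 <= value <= 1.0:
            raise ValueError("scores must be between 0 and 1")
    severity = max(failure.irreversibility, failure.blast_radius)
    if severity >= 0.7:   # hypothetical threshold
        return "tight"
    if severity >= 0.4:   # hypothetical threshold
        return "moderate"
    return "light"


examples = [
    WorstCaseFailure("wrong product recommendation", 0.1, 0.1),
    WorstCaseFailure("missed clinical diagnostic", 0.9, 0.3),
    WorstCaseFailure("mispriced freight across a carrier network", 0.5, 0.8),
]
for failure in examples:
    print(f"{failure.description}: {governance_tier(failure)}")
```

Taking the maximum rather than an average is a design choice: an irreversible error with a small footprint, or a reversible one that spreads across a network, should each be enough to tighten controls.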

Financial Services: Why Existing Regulation Is a Governance Asset


The Yale CELI analysis makes a counterintuitive argument for banking that deserves careful attention: the regulatory scaffolding that has long constrained the industry is now its competitive advantage for agentic AI deployment. SR 11-7's model risk management requirements already mandate decision traceability. The Equal Credit Opportunity Act already addresses the most acute bias risks in agent-automated credit decisions. Existing audit infrastructure covers multi-step workflow logging, with extensions. Banking can deploy faster than most industries because its governance architecture is partially pre-built.

The near-term commercial pressure is real and the Yale researchers are direct about it. Agents will deliver major back-office savings, and competitive pressure will transfer those savings to consumers — eroding the inertia that protects incumbent relationships. Simultaneously, customers will begin using their own agents to shop rates and switch providers. The industry must integrate agents into customer-facing technology quickly or cede that relationship layer to competitors who do.

Binding Constraint

Privacy and decision reversibility. The Yale authors identify data privacy — cited by 77% of banking leaders as a top scaling barrier alongside data quality at 65% — as the hardest problem. Fraud detection and AML require deep data access. Agents are prone to leaking personal data through external tool interactions. And data privacy violations in financial services are not reversible. The governance program must tightly constrain how agents use customer data outside predefined task boundaries, with technical enforcement at the tool level rather than policy documentation at the program level.

GAIG Take on Banking

The governance insight the Yale piece underweights is identity management at scale. Assigning each agent its own ID for tracking is mentioned only briefly, yet it is the foundational security requirement for everything else. An agent that operates under a shared service account is ungovernable at any meaningful level of precision. The banking industry's existing privilege management infrastructure (PAM systems, privileged access workflows, access review processes) is exactly the right starting point for agent identity governance. Every bank deploying agents should be extending those existing frameworks to cover agent identities before any agentic deployment reaches production systems.
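A minimal sketch of per-agent identity, assuming a simple in-memory registry: the class, method names, and ID format are hypothetical, and a real deployment would sit on top of the bank's existing PAM tooling rather than replace it.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class AgentRegistry:
    """Issues one identity per agent instance and records its human owner."""
    _owners: dict[str, str] = field(default_factory=dict)

    def register(self, agent_name: str, owner: str) -> str:
        # Every instance gets its own ID, even when two agents share a role.
        agent_id = f"{agent_name}-{uuid.uuid4().hex[:8]}"
        self._owners[agent_id] = owner
        return agent_id

    def attribute(self, agent_id: str) -> str:
        """Resolve an action to its owner; unregistered identities are rejected."""
        if agent_id not in self._owners:
            raise PermissionError(f"unregistered identity: {agent_id}")
        return self._owners[agent_id]


registry = AgentRegistry()
first = registry.register("aml-screening", "Jane Doe")
second = registry.register("aml-screening", "Jane Doe")
print(first != second)            # two instances, two identities
print(registry.attribute(first))  # action traced to a named owner
```

An action arriving under a shared credential such as a generic service account fails attribution by construction, which is the point: it cannot be traced to one agent or one owner.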

Healthcare: The Bifurcation Strategy and Why the Slow Lane Is Right

The Yale analysis is most operationally precise in the healthcare section. The bifurcated trajectory argument — fast on administrative, deliberate on clinical — is the correct strategic call and the piece makes it clearly. Administrative wins are real and accumulating: documentation efficiency, claims processing, faster order entry enabling physicians to see more patients. Primary care and nursing integration are near-horizon. These deployments have high structural governability, high reversibility if errors occur, and map onto existing compliance infrastructure.

Clinical integration is where the governance stakes reach a different level. The example the Yale team provides — Brazilian nonprofit NoHarm's prescription review tool deployed across 200+ hospitals, screening millions of prescriptions monthly — illustrates both the value at stake and the single-failure-mode risk. A systematic error in prescription screening at that scale causes patient harm at a volume that makes it a public health problem. The FDA's current guardrails apply only to AI-enabled medical devices, leaving clinical systems to design their own guardrails for everything that does not technically qualify as a device.

Binding Constraint

Data integration and bias compounding. 62% of hospitals report data silos across EHRs, labs, pharmacy, and claims. Agents need integrated data to function clinically, and the silo problem both limits utility and elevates improper access risk. Healthcare also carries one of the most serious bias risks in any sector: decades of underrepresentation in medical training data and clinical trials carry forward into agent training data. Pattern-based specialties like radiology and pathology are specifically flagged as areas where AI can amplify historical data inequities without active bias monitoring in production.

GAIG Take on Healthcare

The governance investment healthcare needs to make now — before clinical deployment scales — is the human-in-the-loop audit trail infrastructure. Clinical AI governance requires not just that a human was in the loop, but that the human's specific review, the specific information they had access to at the time of review, the decision they made, and the timestamp of that decision are all captured in a verifiable, tamper-evident record. The EU AI Act's Article 72 post-market monitoring requirements for high-risk AI systems provide the clearest external standard for what that infrastructure needs to produce. Healthcare organizations should be building against that standard now, regardless of their specific regulatory jurisdiction, because it represents the most rigorous public definition of what audit-ready clinical AI governance looks like.
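One common way to make such a record tamper-evident is a hash chain, where each entry's hash covers its own content plus the previous entry's hash. The sketch below uses that technique as an assumption; neither the Yale paper nor the EU AI Act prescribes it, and the field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_review(log: list, reviewer: str, evidence_shown: list, decision: str) -> dict:
    """Append a review record whose hash covers its content and the previous hash."""
    body = {
        "reviewer": reviewer,
        "evidence_shown": evidence_shown,  # what the human could see at review time
        "decision": decision,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list) -> bool:
    """Recompute every hash; an edited, removed, or reordered record breaks the chain."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or record["hash"] != _digest(body):
            return False
        prev = record["hash"]
    return True


log = []
append_review(log, "Dr. A. Example", ["lab panel", "medication list"], "reject")
append_review(log, "Dr. B. Example", ["imaging report"], "approve")
print(verify(log))
log[0]["decision"] = "approve"  # simulate after-the-fact tampering
print(verify(log))
```

A chain like this only detects edits if the latest hash is also stored somewhere the log's editor cannot reach; anyone able to rewrite the entire log could otherwise recompute every hash.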

Retail: The Fastest Mover and What That Speed Actually Teaches

The Yale team's framing of retail as the governance template-builder for the rest of the economy is the most forward-looking argument in the piece. 51% of retailers have deployed AI across six or more functions. Mastercard's Agent Pay — registered digital agents that browse, select, and purchase on behalf of users — is a production product, not a pilot. OpenTable resolved 73% of customer service cases through agentic systems within weeks of deployment. Visa and AWS published a shopping agent blueprint for the entire sales pipeline. Retail is building the live playbook that every industry with less room to experiment will eventually borrow.

The structural advantages are real: high reversibility through returns infrastructure, high governability through standardized APIs and payment protocols, low regulatory prescription that allows experimentation. Shopify is embedding governance directly into infrastructure rather than layering it on top — identity, payment authorization, and transaction logging built into the system so controls exist in the architecture rather than around it. That approach is the governance standard that every industry should be studying.

Binding Constraint

Systemic vendor-side failure at scale. The Yale analysis flags the right risk that retail governance programs tend to underweight: individual purchase errors are trivial and reversible, but vendor-side failures in pricing algorithms, inventory management, or multi-agent workflows can cascade across networks. A mispriced promotional campaign that runs for 48 hours before detection, or an inventory agent that misallocates stock across hundreds of SKUs, produces systemic harm even though each individual transaction error appears minor. The governance response is observability infrastructure that monitors agent decisions at the aggregate pattern level, not just at the individual transaction level.
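The difference between transaction-level and pattern-level monitoring can be sketched with a pricing example. The tolerances, minimum sample size, and agent names are assumptions for illustration, and expected prices are assumed to be positive.

```python
from collections import defaultdict


def flag_systemic_drift(decisions, aggregate_tolerance=0.02, min_count=50):
    """Flag agents whose average price deviation shows a consistent bias.

    decisions: iterable of (agent_id, expected_price, actual_price) tuples.
    Returns {agent_id: mean relative deviation} for flagged agents.
    """
    deviations = defaultdict(list)
    for agent_id, expected, actual in decisions:
        deviations[agent_id].append((actual - expected) / expected)

    flagged = {}
    for agent_id, values in deviations.items():
        mean_deviation = sum(values) / len(values)
        if len(values) >= min_count and abs(mean_deviation) > aggregate_tolerance:
            flagged[agent_id] = mean_deviation
    return flagged


# Every decision below is within a 5% per-transaction tolerance,
# but one agent is consistently 3% low across all of its decisions.
decisions = [("promo-agent", 100.0, 97.0)] * 100
decisions += [("stock-agent", 100.0, 103.0 if i % 2 else 97.0) for i in range(100)]
print(flag_systemic_drift(decisions))
```

A per-transaction check with a 5% tolerance would pass every one of these decisions; only the aggregate view separates random noise from a systematic underpricing pattern.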

51% of retailers have deployed AI across six or more functions — the highest cross-function adoption rate of any sector.

This makes retail the industry producing the most live governance data about what works and what breaks at scale. The governance patterns emerging from retail deployments are the most empirically grounded in the market.

Source: Yale CELI / Fortune, citing NVIDIA Retail Survey 2025

Supply Chain: Where Governance Becomes Architecture

The supply chain and logistics section of the Yale analysis is the most technically specific in the paper, and for good reason: the deployment scale here is already massive. C.H. Robinson's Always-On Logistics Planner runs over 30 AI agents across the shipment lifecycle, processing over three million tasks and capturing 318,000 freight-tracking updates from phone calls in a single month, delivering price quotes in 32 seconds versus the previous standard of hours. UPS cleared 90% of its 112,000 daily customs packages without manual intervention. Uber Freight's 30+ agent platform manages roughly $20 billion in freight. This is not experimentation. It is production infrastructure at scale.

The risk profile is qualitatively different from every other industry in the analysis. In banking, a bad decision affects a transaction. In supply chain, it can affect an entire network within hours. A mispriced quote cascades through procurement. A customs misclassification propagates through customs clearance. A routing error compounds across carriers, plants, and customers. Sensitive data on pricing, routing, cargo contents, and customer identity flows across systems where a single compromised credential produces network-wide impact. DHL explicitly flags that even at high automation rates, recommendations and decisions require human-in-the-loop oversight and auditability — because the potential blast radius of any single failure demands it.

Binding Constraint

Governance must be embedded in architecture, not layered on top. The Deloitte framing cited by Yale captures this precisely: agents coordinate across suppliers, plants, and logistics partners, but only within defined guardrails. Those guardrails must be engineering constraints in the system itself — checkpoints on the highest-leverage decisions, mandatory audit logs with version control across all agent actions, validation layers before execution — not policy documentation reviewed quarterly. The blast radius of a supply chain governance failure is too large for any human review process operating at human speed to catch before damage propagates.
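As a rough illustration of guardrails as engineering constraints, the sketch below checks every action before execution and logs every decision. The outcome names, action types, and dollar threshold are hypothetical simplifications, not drawn from Deloitte, Yale, or any published specification.

```python
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"  # pause until a person approves
    DENY = "deny"


# Hypothetical action types and threshold for this sketch.
HIGH_LEVERAGE_ACTIONS = {"customs_classification", "contractual_commitment"}
QUOTE_REVIEW_THRESHOLD_USD = 50_000


def authorize(action: dict, audit_log: list) -> Outcome:
    """Decide before execution, and log the decision whatever the outcome."""
    kind = action.get("type")
    if kind is None or "agent_id" not in action:
        outcome = Outcome.DENY  # fail closed on malformed requests
    elif kind in HIGH_LEVERAGE_ACTIONS:
        outcome = Outcome.HUMAN_REVIEW
    elif kind == "price_quote" and action.get("amount_usd", 0) >= QUOTE_REVIEW_THRESHOLD_USD:
        outcome = Outcome.HUMAN_REVIEW
    else:
        outcome = Outcome.ALLOW
    audit_log.append({"action": action, "outcome": outcome.value})
    return outcome


audit_log = []
print(authorize({"agent_id": "quote-7", "type": "price_quote", "amount_usd": 1_200}, audit_log))
print(authorize({"agent_id": "quote-7", "type": "price_quote", "amount_usd": 80_000}, audit_log))
print(authorize({"type": "price_quote", "amount_usd": 10}, audit_log))
```

Because the check runs in the execution path, routine actions proceed at machine speed while the highest-leverage ones wait for a person, and the audit log is complete by construction.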

GAIG Take on Supply Chain

Supply chain is where the AARM framework GAIG covered yesterday becomes immediately relevant. The Autonomous Action Runtime Management specification, CSA's new open standard for governing what AI agents do at the moment of execution rather than at the moment of access, is architecturally designed for exactly the supply chain failure modes the Yale team describes. An agent that is mispricing freight quotes or misclassifying customs declarations is doing so within its authorized permissions. Traditional access controls do not catch it. AARM's pre-execution interception, context accumulation, and five-outcome authorization model (including STEP_UP for human review on high-value quotes) together form the technical architecture that the Yale paper's "governance must be architectural" prescription requires.

Three Things the Yale Framework Gets Right That Most Governance Analysis Misses

The Yale CELI paper is a serious piece of governance analysis from a serious institution, published in a mainstream business publication. That reach matters — it will expose more CEOs and boards to the governance conversation than any number of industry conference presentations or security publication articles. But GAIG covers this space every day, and three things in the paper deserve specific emphasis because they surface insights that most governance programs are currently underweighting.

The Regulation Lag Is Not the Problem — The Private Sector Fill Is

The Yale authors correctly note that governance regulation has historically lagged innovation — automobiles, the internet, social media. But the more important observation they make is that private-sector governance models are what build consumer confidence and enable safe enterprise deployment during the lag period. The companies that establish governance models now are not just managing their own risk. They are setting the templates that become industry standards, then regulatory standards, then audit frameworks. The patterns built today in retail, supply chain, and financial services are the compliance requirements of 2030. Understanding that gives governance investment a strategic dimension it rarely gets credited for in ROI discussions.

Reversibility Is the Most Underused Governance Variable

The Yale matrix puts reversibility at the center of governance calibration, and that prioritization is correct and rare. Most governance frameworks calibrate against regulatory exposure — how much legal liability does this create? The Yale framework calibrates against operational consequence — can this be undone? Those produce different answers in important cases. A deployment that carries limited regulatory liability but produces irreversible operational harm (a clinical misdiagnosis that is not covered by a specific AI regulation yet) is under-governed by the liability framework and correctly governed by the reversibility framework. Every governance program should have reversibility scoring built into its risk classification process for every agent deployment.

The "Cascading Accuracy" Problem Is the Real Pipeline Governance Risk

The Yale observation that small accuracy drops in multi-step agentic pipelines produce cascading errors is the most operationally important insight in the piece, and it is the one that gets the least space. If a pipeline runs ten agents in sequence and each agent is 95% accurate, the probability of a correct end-to-end output is roughly 60%. At 90% per step it is 35%. At 85% per step it is 20%. Most governance programs evaluate agents individually — does this agent perform within acceptable bounds? They do not evaluate pipelines as units — does this combination of agents produce acceptable outcomes at the system level? That gap is where the most serious production failures in 2026 will emerge.
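Evaluating the pipeline as a unit also means working backwards from the outcome you need. Under the same simplifying assumption of independent, equally accurate steps, the per-step accuracy required for a target end-to-end accuracy is the n-th root of the target. The function name below is illustrative.

```python
def required_step_accuracy(target_end_to_end: float, steps: int) -> float:
    """Per-step accuracy needed so that `steps` sequential steps hit the target.

    Assumes independent steps with identical accuracy (a simplification).
    """
    if not 0.0 < target_end_to_end <= 1.0:
        raise ValueError("target_end_to_end must be in (0, 1]")
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return target_end_to_end ** (1.0 / steps)


for target in (0.90, 0.95, 0.99):
    needed = required_step_accuracy(target, 10)
    print(f"{target:.0%} end to end over 10 steps needs {needed:.2%} per step")
```

For a ten-step pipeline, a 95% end-to-end target requires each step to be roughly 99.5% accurate, which is a far higher bar than an agent-by-agent review at 95% would suggest.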

"What makes the Yale CELI framework valuable is what it does not do: it does not tell every organization to govern AI the same way. The eight variables produce genuinely different answers for different industries and different deployment contexts. A retail organization and a healthcare system are not facing the same governance problem even when they are running similar agent architectures. The governance program that treats them identically will be wrong for both. The diagnostic matrix approach — identifying where reversibility and blast radius are highest and calibrating controls accordingly — is the right way to build governance that is actually proportionate to actual risk rather than uniformly restrictive in ways that slow deployment without improving safety."

Nathaniel Niyazov

CEO, GetAIGovernance.net



AI Governance Take

The Yale CELI analysis is the most institutionally credible piece of cross-industry agentic AI governance research published in 2026. When Jeffrey Sonnenfeld and the team at Yale CELI produce a framework in Fortune, it reaches boards and C-suites that the AI governance trade press does not reach. That distribution matters as much as the content, because the governance gap in most enterprises is not analytical — it is organizational. The people who need to authorize governance investment are not yet convinced it is necessary. A Yale CELI paper in Fortune is the kind of institutional signal that moves that conversation.

The eight-variable diagnostic matrix is the paper's lasting contribution. It gives every organization a structured way to ask the governance calibration question correctly — not "how do we govern AI?" but "given our reversibility profile, our stakeholder impact scope, our regulatory prescription, and our structural governability, what level of governance tightness is actually proportionate?" That calibration question is what separates governance programs that are appropriately tight from ones that are either dangerously loose or so restrictive they kill the deployment value that justified governance investment in the first place.

The cascading pipeline accuracy problem deserves more attention than it received. The mathematical reality — that independent accuracy losses compound across pipeline steps, collapsing overall reliability at a rate most governance programs are not monitoring — is one of the most important practical governance insights in the piece. Pipeline-level monitoring is missing from the vast majority of enterprise agentic AI deployments right now. That gap is where the most serious 2026 incidents will originate.

The John Locke closing argument — "where there is no law, there is no freedom" — is more than rhetorical. It captures why the private sector governance work happening now in retail, supply chain, and financial services is not just risk management. It is the construction of the institutional trust that determines whether agentic AI scales into the economy or gets curtailed by the incidents that ungoverned deployment produces. The organizations building governance now are building the infrastructure that makes broad adoption possible. That is a strategic bet worth taking seriously.