Key Statistics

28% of organizations have formally defined AI governance oversight roles

— IAPP 2024

73% experienced outages linked to ignored or suppressed alerts

—Splunk 2025, n=1,855

78% of executives can't pass an AI governance audit within 90 days

—Grant Thornton 2026

24 distinct Pre-Failure Signals mapped across 4 Control Layers

—GAIG PSI Index

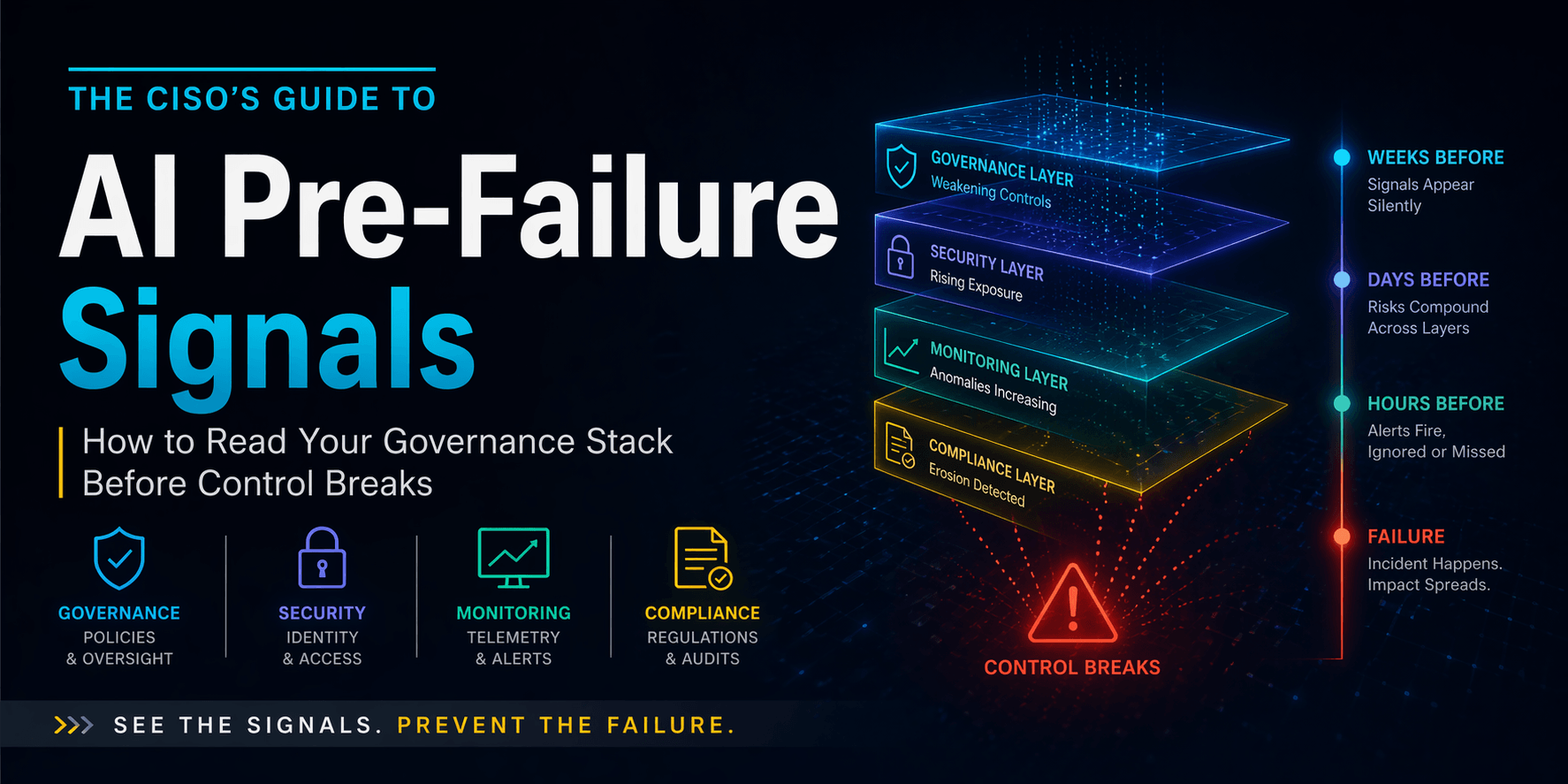

A model update gets pushed on a Wednesday. The monitoring team doesn't recalibrate thresholds because nobody told them the update happened. Three weeks later a hallucination rate signal fires. Nobody investigates — that alert has fired forty times before without producing anything actionable. Four weeks after the update a customer-facing workflow starts producing systematically incorrect outputs. The incident gets discovered by a user complaint. The CISO gets called into a meeting where someone uses the word "sudden."

That sequence had six Pre-Failure Signals. Nobody read any of them. The governance layer had stopped matching operational reality weeks earlier. The security team had no behavioral baseline for the updated model. The monitoring layer had been suppressing alerts through accumulated fatigue. The compliance team had no audit trail of the update or any human response to the signals that preceded it. Four Control Layers were each showing distinct degradation — and nobody had visibility across all four simultaneously.

This is not unusual. It is the modal enterprise AI incident pattern. And it is almost entirely preventable — not through better tools, but through reading the environment that already exists around you. The Pre-Failure Signal Index names those signals, locates them in the timeline of failure, and gives practitioners the pattern recognition to catch them before control breaks.

"By the time the alarms finally scream, the model has already been leaking for a month. A breach is just the final receipt for a thousand signals you chose to miss."

—Nathaniel CEO GetAIGovernance

Lock These Before Reading Further

These terms are used consistently throughout. Each is defined once here and never again.

Pre-Failure Signal (PSF)

A measurable indicator that a Control Layer is degrading before an incident occurs.

Control Layer

One of four operational domains — Governance, Security, Monitoring, Compliance — each responsible for a distinct failure mode.

Signal Cluster

A group of related Pre-Failure Signals within a single Control Layer.

Cross-Layer Convergence

The alignment of Pre-Failure Signals across two or more Control Layers simultaneously.

Red Code Signal

A Cross-Layer Convergence pattern indicating imminent failure — the window for governance intervention has closed.

Execution Chain

The full decision and action path of an AI system from input to output to consequence.

⊞Pre-Failure Signal Severity Matrix

Every Pre-Failure Signal mapped across layer, detection difficulty, time window, impact severity, and ownership. This is the reference document. Screenshot it. Share it. Run it against your current posture.

Signal Name | Layer | Detection Difficulty | Time Before Incident | Impact Severity | Who Owns Detection |

|---|---|---|---|---|---|

GOVERNANCE LAYER | |||||

Model Registry Drift | Governance | High | Weeks–Months | High | AI Governance Lead |

Classification Staleness | Governance | High | Weeks–Months | Critical | Risk & Compliance Lead |

Shadow Procurement Entry | Governance | Very High | Weeks–Months | High | Procurement + Security |

Policy Enforcement Gap | Governance | High | Weeks | Critical | CISO + Legal |

Ownership Ambiguity | Governance | Medium | Weeks–Months | High | CISO |

SECURITY LAYER | |||||

Permission Creep Drift | Security | Medium | Days–Weeks | Critical | Identity & Access Team |

Orphaned Access Tokens | Security | Medium | Days–Weeks | High | Security Ops |

OAuth Anomaly Patterns | Security | High | Days–Weeks | Critical | Security Ops |

Tool Invocation Drift | Security | Very High | Days | High | AI Security Lead |

Prompt Injection Precursors | Security | High | Days | High | Threat Detection |

MONITORING LAYER | |||||

Alert Fatigue Suppression | Monitoring | Low | Weeks | Critical | Monitoring Lead |

Dashboard Abandonment | Monitoring | Low | Weeks | High | Monitoring Lead |

Signal-to-Incident Collapse | Monitoring | Medium | Days–Weeks | Critical | AI Governance Lead |

Baseline Drift Neglect | Monitoring | High | Days–Weeks | High | ML Ops |

False Positive Tolerance | Monitoring | Low | Days–Weeks | High | Monitoring Lead |

COMPLIANCE LAYER | |||||

Evidence Reconstruction | Compliance | Medium | Weeks | Critical | Compliance Lead |

Control Mapping Drift | Compliance | High | Weeks–Months | High | Compliance Lead |

Audit Trail Gaps | Compliance | Medium | Weeks | Critical | Compliance + Legal |

Vendor Review Lapses | Compliance | Medium | Weeks–Months | High | Third-Party Risk |

Documentation Decay | Compliance | Low | Weeks–Months | Medium | Governance Lead |

RED CODE — CROSS-LAYER CONVERGENCE | |||||

Unreviewed Model Deployment | Red Code | Low | Hours–Days | Critical | CISO |

Untracked Data Integration | Red Code | High | Hours–Days | Critical | Data Governance Lead |

Autonomous Access Expansion | Red Code | High | Hours–Days | Critical | Identity & Access + CISO |

Multi-Layer Convergence | Red Code | Very High | Hours | Critical | CISO |

1. Human and Governance Pre-Failure Signals

Stage: Early · Weeks to Months Before Incident

These signals don't appear in system logs. They appear in org charts, meeting notes, and procurement records. By the time a system alert fires, the governance breakdown that enabled it started here. These are the Pre-Failure Signals that have the longest lead time and the lowest detection rate — because they require organizational awareness, not technical monitoring.

Model Registry DriftGovernance Layer

The registry stops reflecting production reality. AI systems get deployed without registration. Registered systems expand their operational scope without a corresponding update. The registry transitions from a live inventory into a historical artifact — accurate at the time of entry and increasingly fictional afterward. Platforms like Credo AI create governance infrastructure around registry management, but the Pre-Failure Signal appears when their outputs stop matching operational reality. The tool isn't the signal. The drift between the tool's record and what's actually running in production is.

Classification StalenessGovernance Layer

A system classified as low-risk six months ago is now making consequential decisions nobody anticipated at classification time. No reclassification review was triggered because nobody owns that process on a rolling basis. Under the EU AI Act, classification staleness is a legal exposure: obligations attach to what the system does in practice, not what it was approved to do at deployment. The gap between approved scope and operational scope is a Pre-Failure Signal that exists entirely in governance records — visible only to someone actively looking.

Shadow Procurement EntryGovernance Layer

A business unit acquires an AI-powered SaaS tool through standard procurement without triggering the AI governance review process. The tool connects to internal data sources within weeks of deployment. By the time governance learns about it, the tool has been processing sensitive data for two months and the vendor assessment has never been conducted. Shadow procurement entry is the governance equivalent of shadow AI — the same failure mode at the organizational rather than the individual level.

Policy Enforcement GapGovernance Layer

Policies exist in documentation. Technical controls that enforce them don't. The AI usage policy says agents cannot access customer PII without authorization. No technical control verifies or enforces that statement at runtime. The gap between what the policy says and what the system does is invisible during normal operations and immediately visible under examination. OneTrust and similar governance platforms close this gap when connected to live system behavior — but a Policy Enforcement Gap Pre-Failure Signal means that connection has broken or was never made.

Ownership AmbiguityGovernance Layer

The person who built the monitoring configuration left the team. The person who owns the model registry is currently on three other projects. The vendor assessment process is owned by whoever has time. AI governance programs with single points of human ownership are one resignation away from quiet collapse. The Pre-Failure Signal is detectable through a simple question: if your most governance-knowledgeable team member left tomorrow, which parts of the program would stop functioning within 90 days?

28% of organizations have formally defined oversight roles for AI governance.

The other 72% are distributing AI governance accountability across teams without defined owners. Ownership Ambiguity is not an edge case. It is the majority state.

Source: IAPP 2024 Governance Survey

2. Security and Behavior Pre-Failure Signals

Stage: Mid · Days to Weeks Before Incident

Security systems detect violations. Pre-Failure Signals appear when behavior is still considered valid — access that's authorized but anomalous, tokens that are legitimate but drifting, agents operating within permissions but outside historical patterns. By the time a security system flags something, the pre-failure window has already compressed significantly.

"Security systems detect violations. Pre-Failure Signals appear when behavior is still considered valid."

— GAIG Observation

Permission Creep DriftSecurity Layer

An AI agent's access scope expands incrementally across several deployment cycles. Each individual expansion passed review because it looked reasonable in isolation. The cumulative access profile was never assessed as a whole. The agent now has effective access to systems it was never designed to touch — and every individual access grant was legitimate. Permission creep drift is invisible to point-in-time access reviews. It requires continuous cumulative tracking of what an agent can reach, not just what it was most recently authorized for.

Orphaned Access TokensSecurity Layer

OAuth tokens issued to AI integrations that are no longer actively monitored. The integration still functions. The token still grants access. Nobody is watching what the integration does with that access — because the integration passed its initial security review and was never flagged for re-evaluation. Orphaned tokens are the AI equivalent of dormant privileged accounts. They represent valid access with no accountability layer watching it. LayerX Security addresses this at the endpoint and browser level for AI tool access specifically.

OAuth Anomaly PatternsSecurity Layer

Established AI integrations changing their token refresh cadence outside historical patterns. Access patterns shifting in terms of timing, volume, or data targets. Geographic or ASN changes in token usage that don't correspond to known infrastructure changes. These appear in logs that most organizations aren't actively reading for AI-specific behavioral anomalies. The logs exist. The pattern recognition to interpret them as AI Pre-Failure Signals rather than background noise typically doesn't.

Tool Invocation DriftSecurity Layer

An AI agent begins calling tools or APIs outside its established historical pattern. The calls are authorized. The frequency, target, or sequence has changed. Without a behavioral baseline for the agent's normal tool invocation pattern, the drift is undetectable. This is the behavioral precursor to either active compromise or silent misconfiguration — and it's indistinguishable from normal operation without continuous baseline monitoring. Check Point Software detects network-layer AI traffic anomalies at this level for organizations with the architecture to surface it.

Prompt Injection Precursor PatternsSecurity Layer

Structurally anomalous inputs appearing in prompt logs at increasing frequency. The security layer hasn't flagged successful injection — the attempts are being blocked or failing. But frequency escalation precedes successful exploitation in documented incident patterns. Rising attempt volume is a Pre-Failure Signal for the injection surface even when the security controls are currently holding. The question isn't whether the controls are working. It's whether the attack surface is increasing and why.

3. Monitoring Degradation Pre-Failure Signals

Stage: Mid to Late · Days to Weeks Before Visibility Collapse

When monitoring fails, it doesn't announce itself. It goes quiet. Alert volumes drop not because the environment got safer but because teams stopped responding and thresholds got adjusted upward to reduce noise. By the time the real signal fires, the observability layer has been compromised for weeks. For the full breakdown of why this happens structurally, the GAIG AI Monitoring Dashboard analysis covers the accountability gap in detail.

The moment alerts stop triggering action, your monitoring layer has already failed.

— GAIG Observation

Alert Fatigue SuppressionMonitoring Layer

Teams adjusting thresholds upward not because baselines genuinely changed but because the investigation overhead became unsustainable. The platform is now calibrated to miss what it previously caught. This is monitoring decay masquerading as operational optimization. Arize AI and Fiddler AI provide strong signal capture — but their value is entirely contingent on the accountability layer that decides what to do when signals fire. Alert Fatigue Suppression means that accountability layer has eroded.

Dashboard AbandonmentMonitoring Layer

Check frequency declining without formal acknowledgment. The daily monitoring review is now weekly. The weekly review is now "when something looks wrong." The person responsible for monitoring oversight has been pulled onto higher-priority work. Dashboard Abandonment is not a technology failure — the platform is running and capturing data. It's an organizational failure that turns a monitoring program into a data collection exercise with no human layer reading it.

Signal-to-Incident CollapseMonitoring Layer

Alerts firing at historical rates but investigation-to-action conversion approaching zero. The monitoring program is producing data. Zero governance actions are being triggered by it. This is the configuration described in the monitoring dashboard analysis — and it is itself a Pre-Failure Signal for the entire observability layer. When signals stop creating action, the monitoring program has functionally stopped existing regardless of what the dashboard shows.

Baseline Drift NeglectMonitoring Layer

A model update was deployed. Monitoring thresholds weren't recalibrated to reflect the updated model's behavior profile. The monitoring system is now measuring current production behavior against a baseline that no longer corresponds to the deployed system. Every signal it produces is potentially miscalibrated — too sensitive in some dimensions, blind in others. Baseline Drift Neglect makes the monitoring layer unreliable without making it obviously broken.

False Positive ToleranceMonitoring Layer

The team has collectively decided that a specific signal category is probably noise. That decision was never formally documented, never reviewed, and never tied to a threshold adjustment with a rationale record. It exists as institutional knowledge — which means it's invisible to anyone who joined the team in the last six months and unknown to the compliance team that would need to explain it to an auditor.

73% of organizations experienced outages directly linked to ignored or suppressed alerts.

The ignored alert became the outage. Monitoring Pre-Failure Signals announce that the system watching for failure has already stopped working instead of the failure coming.

Source: Splunk State of Observability 2025, n=1,855

4. Compliance Erosion Pre-Failure Signals

Stage: Slow Burn · Weeks to Months · Surface Under Scrutiny

Compliance failures are rarely discovered during operations. They surface under scrutiny — in audits, regulatory examinations, and litigation discovery. The erosion has been building for months by the time anyone external sees it, and the documentation trail that was supposed to demonstrate compliance instead demonstrates how long the gaps existed.

Evidence ReconstructionCompliance Layer

When audit evidence is requested, the team generates it from memory and scattered records rather than pulling it from live automated systems. The evidence may not be wrong, but it wasn't captured in real time — and a skilled auditor will know. Vanta and Delve automate evidence generation from live system connections, closing this gap when properly configured. Evidence Reconstruction Pre-Failure Signals appear when that automation has broken down or when the compliance program never connected to live systems in the first place.

Control Mapping DriftCompliance Layer

The regulatory framework your compliance program maps to got updated. Your internal control set didn't. The mapping document still exists and still looks complete — but it no longer accurately reflects what the regulation requires. Control Mapping Drift is invisible internally and immediately visible to an external examiner comparing your controls against current framework requirements. The gap between the framework version you mapped to and the version currently in force is the Pre-Failure Signal.

Audit Trail GapsCompliance Layer

AI system actions are logged. Human responses to those actions aren't. An auditor reviewing the trail can see what the system did. They cannot see what the team did in response, when they did it, or who made the decision. Under EU AI Act Article 72, post-market monitoring systems must not just collect data — they must demonstrate analysis and response. An audit trail that logs system behavior without logging human accountability is a compliance gap regardless of how comprehensive the system-side logging is.

Vendor Review LapsesCompliance Layer

Third-party AI vendor assessments have expiration dates that pass without triggering renewal. The vendor has updated their model, their data handling practices, or their subprocessors since your last assessment. You have agreed to terms that may no longer reflect what the vendor is actually doing with your data. Vendor Review Lapses are common because nobody set a calendar trigger when the assessment was completed, and nobody owns the renewal cycle.

Documentation DecayCompliance Layer

Incident response playbooks referencing team members who left the organization. Risk assessments citing AI systems that have been significantly updated since the assessment. Training completion records that don't correspond to the AI tools employees are currently operating. Documentation Decay is not a filing problem. It's an organizational signal that the governance program stopped updating itself when the environment changed — which is itself a Pre-Failure Signal for the entire compliance layer.

Cross-Layer Red Code Signals

Stage: Imminent · Hours to Days · Governance Intervention Window Closed

No system fails in isolation. Red Code Signals are not single-layer events. They are Cross-Layer Convergence — the alignment of Pre-Failure Signals across multiple Control Layers simultaneously. This is where individual drifts compound into inevitable failure. The CISO reading a Red Code Signal is no longer in prevention mode. They're in containment mode.

Incidents occur when signals align across layers without detection.

— GAIG Observation

Unreviewed Model DeploymentRed Code · Cross-Layer

A model update deployed without a governance review, with no monitoring threshold recalibration, and with no compliance record of either event. Three Control Layers failed simultaneously. The model is running in production with no calibrated oversight. Relyance AI surfaces data flow changes triggered by model updates — the Pre-Failure Signal appears when those changes go untracked across the Execution Chain.

Untracked Data IntegrationRed Code · Cross-Layer

A new data source connects to an AI system. Governance didn't assess it. Security didn't scope the access. Compliance has no record of the data flow. The Execution Chain now includes data the system was never designed to handle, with no visibility into how the model is using it or what it's producing with it. This is the configuration that precedes data exposure incidents in regulated industries — and it's entirely invisible without cross-layer data governance tracking.

Autonomous Access ExpansionRed Code · Cross-Layer

An AI agent's permission scope expanded through a series of individually approved changes across several months. Each change was reviewed in isolation. The cumulative access profile was never assessed as a whole. Security has no behavioral baseline for the expanded scope. Governance has no record of the aggregate change. The agent is now operating with effective privileges that no single approval decision ever explicitly authorized. ModelOp tracks agent registries and per-use-case approval workflows — the Pre-Failure Signal is the gap between the registry record and production reality.

Multi-Layer Signal ConvergenceRed Code · Cross-Layer

Governance signals are active. Security behavioral anomalies are present. Monitoring thresholds are miscalibrated. Compliance audit trail is incomplete. Each individual signal was manageable in isolation. Their simultaneous alignment is not. This is the exact configuration that produces the "sudden" incidents that weren't sudden — they were four layers of Pre-Failure Signals that nobody had the cross-layer visibility to read as a system rather than as isolated noise.

The Time Compression Model

The earlier you read Pre-Failure Signals, the larger your response window. Organizations with cross-layer visibility catch failures at the weeks stage. Organizations watching one layer catch them at hours — if at all. This model maps signal timing to response options.

Pre-Failure Signal Timeline

Early Stage - Weeks Before

Governance & Human Signals — Largest Response WindowModel Registry Drift actively occurring. Classification reviews overdue. Shadow procurement entry has happened. Policy enforcement gaps widening. Ownership ambiguity setting in. These signals exist entirely in organizational behavior — no system alert will surface them. The window to act is longest here. The probability of detection without purposeful scanning is lowest. Organizations that catch failures at this stage do so through active governance audits, not passive monitoring.

Model Registry DriftClassification StalenessShadow ProcurementPolicy Enforcement GapOwnership Ambiguity

Mid Stage - Days Before

Security & Behavior Signals — Narrowing Window Permission creep reaching critical cumulative threshold. Orphaned tokens active and unmonitored. OAuth anomaly patterns appearing in logs. Tool invocation drift accelerating outside historical baseline. Prompt injection precursor frequency increasing. Security tools may be generating data. The behavioral baseline is breaking down. The window for governance-level intervention is narrowing — response at this stage requires security team action, not policy adjustment.

Permission Creep Drift Orphaned Access Tokens OAuth Anomaly PatternsTool Invocation DriftInjection PrecursorsAlert Fatigue SuppressionSignal-to-Incident Collapse

Red Code - Hours Before

Cross-Layer Convergence — Containment Mode Only Red Code Signal configuration active. Multiple Control Layers showing simultaneous degradation. Monitoring thresholds miscalibrated against current model behavior. Audit trail incomplete for the current incident sequence. The governance intervention window has closed. The incident is no longer preventable — it is detectable and containable. The CISO who reaches this stage without having read the earlier signals is operating blind in the most critical window of the Execution Chain.

Unreviewed Model DeploymentUntracked Data IntegrationAutonomous Access ExpansionMulti-Layer Convergence

7What a Real Early Warning System Looks Like

A real AI early warning system is not a tool. It is a behavioral posture — a set of organizational practices that ensure Pre-Failure Signals get read, owned, and acted on across all four Control Layers simultaneously. Before the next board meeting, before the next vendor conversation, before the next audit, your team needs to answer these five questions without looking anything up.

Can you name the individual — not the team, the person — responsible for acting on each active monitoring signal category? If the answer is a team name, Ownership Ambiguity is active.

When was the last time every AI system in your registry was validated against its current operational scope — not when it was registered, but when its classification was last confirmed against what it's actually doing?

Do you have a behavioral baseline for every AI agent operating with access to production systems — and does your security team receive automated alerts when behavior deviates from it?

Can you produce an audit trail that shows not just what your AI systems did but what your team did in response — with timestamps, named individuals, and documented outcomes?

If your most governance-knowledgeable team member resigned tomorrow, which parts of your AI governance program would stop functioning within 90 days — and have you documented those single points of failure?

"If any of those answers are uncertain, the uncertainty itself is a Pre-Failure Signal. Not a risk to be noted and monitored. A signal to be acted on now, before the layers converge."

Nathaniel Niyazov

CEO, GetAIGovernance.net

Looking for platforms that provide cross-layer visibility across AI Risk & Controls, Model Observability, AI Threat Detection, and AI Audit & Documentation? Submit an inquiry and we'll match you with vendors that address your specific Pre-Failure Signal exposure — not just the layer you're already watching.

Submit an Inquiry

Sources & References

Our Take

AI Governance Take

The gap this index describes is not a technology gap. Every Pre-Failure Signal listed here is detectable with existing infrastructure — if the accountability layer exists to read it and the cross-layer visibility exists to interpret signals as a system rather than as isolated noise. Organizations that catch failures at the weeks stage aren't running better tools. They're running better organizational practices around the tools they have.

The organizations that discover failures from a regulator, a user complaint, or a billing anomaly have one thing in common: they were watching one Control Layer and calling it governance. They had a monitoring dashboard and called it observability. They had a policy document and called it compliance. They had a security tool and called it protection. None of those things are wrong. All of them are insufficient without the cross-layer reading that turns individual signals into an early warning system.

The CISO who runs this index against their current environment will find Pre-Failure Signals. Not because their organization is particularly exposed — but because the signals are present in almost every enterprise AI deployment that has been running for more than six months without active cross-layer governance. Finding them early is not a failure. It's the point.

The organizations pulling ahead are the ones that treat Pre-Failure Signal detection as an ongoing operational practice — not a quarterly audit exercise, not a pre-incident review, not a board presentation framework. They scan for signals continuously. They name owners. They build the cross-layer visibility that turns four separate dashboards into one coherent picture of organizational AI risk.