Most organizations still treat AI governance like any other enterprise software category. They make a vendor list, ask for demos, compare feature grids, and pick the one that looks the most complete on paper. That approach works fine when you’re buying a CRM or a project management tool. It falls apart the moment you step into AI governance.

In practice the platforms solve very different problems. Some focus on writing policy and documenting approvals. Others track what models actually do once they’re running in production. A few try to enforce controls before any action even happens. Buyers walk into evaluations assuming every platform does roughly the same thing, so they end up with tools that cover one or two layers well and leave the rest wide open.

This guide cuts through the confusion. It lays out the major capabilities that actually make up AI governance, shows exactly what problem each one solves, and explains where each one fits in the real lifecycle of AI systems. The point is simple: you can stop guessing what you need and start buying based on how your own AI actually operates instead of whatever the vendor slides say.

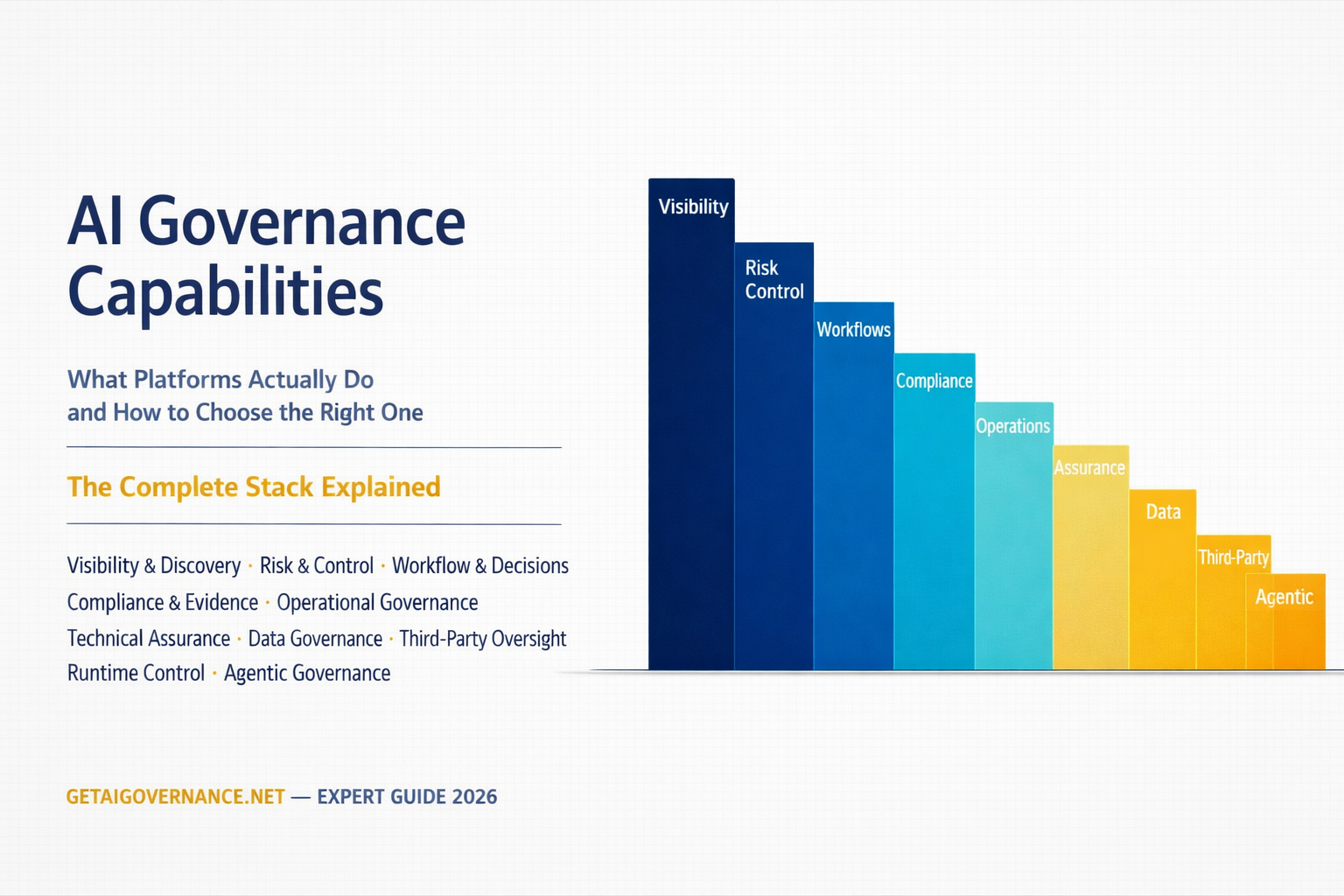

The AI Governance Stack

The clearest way to understand AI governance is as a stack of distinct layers. Each layer handles a different part of the problem.

At the bottom sits visibility: simply knowing which AI systems exist and what they are actually doing. Next comes risk and policy definition, where teams decide what acceptable behavior looks like for those systems. Above that is workflow and operations, turning policy into actual processes that people and systems can follow. Then you have monitoring and assurance, which checks whether the systems behave the way they were supposed to after they go live. Data governance cuts across all the layers, controlling how information moves in and out of the models. At the very top is runtime control, the mechanisms that decide in real time what an AI system is allowed to do, especially when it’s agentic or autonomous.

Most governance problems show up in the gaps between these layers, not inside any single layer. A company can have solid risk assessments but no real production monitoring to see if those assessments hold up. Another can track model behavior closely but have no idea what data is flowing into the system. A third can generate perfect compliance documents yet have nothing in place to actually enforce the policies when the model is running.

No single platform delivers equal strength across every layer. The useful question is not which one has the longest feature list. It is which layers matter most for your specific risk exposure and which platforms actually deliver depth where you need it.

CORE PLATFORM CAPABILITIES

1. Visibility and Discovery

Every governance program starts with the same basic question most organizations cannot answer reliably: what AI systems actually exist across the business? AI does not enter through one controlled channel. Data science teams push models straight into production. Developers embed external APIs into applications without formal review. Business units buy AI-powered SaaS tools on their own. Vendors quietly add AI features to existing platforms. The result is a scattered landscape where plenty of systems operate outside any formal record.

An AI registry creates the central catalog. It captures ownership, purpose, deployment details, and governance status for each system. More advanced platforms add automated discovery that scans codebases, integration points, and production environments to surface systems that were never formally registered. Holistic AI’s shadow AI detection is one example of this in practice, surfacing model dependencies embedded in scripts and third-party applications that fall outside any formal review process.
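The registry-plus-discovery idea can be sketched in a few lines. This is a minimal illustration, not any vendor's schema: the `AISystemRecord` fields mirror the attributes named above, while the system names and the `discovered` set (standing in for the output of an automated scan) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemRecord:
    """One registry entry. Field names are illustrative assumptions."""
    name: str
    owner: str
    purpose: str
    deployment: str          # e.g. "production" or "staging"
    governance_status: str   # e.g. "approved" or "under_review"


def find_unregistered(registry: list[AISystemRecord], discovered: set[str]) -> set[str]:
    """Return discovered system names that have no registry entry."""
    registered = {record.name for record in registry}
    return discovered - registered


registry = [
    AISystemRecord("churn-model", "data-science", "predict churn", "production", "approved"),
    AISystemRecord("support-bot", "cx-team", "answer tickets", "production", "under_review"),
]

# Hypothetical result of scanning codebases and integration points.
discovered = {"churn-model", "support-bot", "resume-screener-api"}

shadow_ai = find_unregistered(registry, discovered)
print(sorted(shadow_ai))
```

Anything left in `shadow_ai` is a system that is running but was never formally registered, which is the population the next paragraph is concerned with.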

Most governance failures do not come from systems that were reviewed and approved. They come from systems that were never in the inventory at all. Without visibility, every other layer of governance rests on an incomplete foundation. You cannot apply risk assessments, workflows, or monitoring to systems you do not know exist. That gap is where real exposure begins.

2. Risk and Control Definition

Once the systems are visible, the next step is deciding what acceptable behavior looks like for each one. This layer includes risk assessments, risk classification, and policy definition.

Risk assessment looks at what data the system processes, what decisions it influences, and what happens when it fails. Risk classification assigns a level that determines the actual governance obligations. Policy definition turns those decisions into concrete rules the system must follow. Control mapping connects those rules to frameworks like NIST AI RMF, ISO 42001, or the EU AI Act.

The stakes are real. Once a system is classified, the organization is no longer choosing preferences. It is determining what it is legally required to do. The EU AI Act made this classification more consequential than ever because specific legal obligations attach to each risk tier. A system classified as high-risk faces conformity assessment requirements, technical documentation obligations, mandatory human oversight, and continuous post-market monitoring.
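The chain from assessment to classification to obligations can be sketched as follows. The scoring rule is a toy assumption and not a legal test under the EU AI Act; only the `"high"` entry mirrors the obligations listed above, and the `"limited"` and `"minimal"` entries are hypothetical internal placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskAssessment:
    """Inputs named in the text: data processed, decisions influenced, failure impact."""
    processes_personal_data: bool
    influences_decisions_about_people: bool
    failure_impact: str  # "low", "medium", or "high"


# Only "high" reflects the obligations named in the article; the rest are placeholders.
OBLIGATIONS = {
    "high": [
        "conformity assessment",
        "technical documentation",
        "human oversight",
        "post-market monitoring",
    ],
    "limited": ["internal review"],
    "minimal": ["registry entry"],
}


def classify(assessment: RiskAssessment) -> str:
    """Toy classification rule (an assumption for illustration, not legal advice)."""
    if assessment.influences_decisions_about_people and assessment.failure_impact == "high":
        return "high"
    if assessment.processes_personal_data or assessment.failure_impact != "low":
        return "limited"
    return "minimal"


credit_scoring = RiskAssessment(True, True, "high")
tier = classify(credit_scoring)
print(tier, OBLIGATIONS[tier])
```

The point of the sketch is the dependency: once `classify` returns a tier, the obligations follow mechanically, so an error in the classification rule propagates into everything downstream.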

Getting the classification right is no longer just an internal governance question. It is a legal determination with enforcement consequences. If the boundary between acceptable and unacceptable behavior is drawn incorrectly, everything built on top of it will be misaligned from the start.

3. Workflow and Decision Layer

Policies on paper mean little without the processes that turn them into actual decisions. This layer handles approval systems, governance workflow orchestration, and escalation paths for when something needs extra review.

A governance workflow answers the practical questions: who reviews a new AI proposal, what documentation is required, who can approve deployment, and what happens when a system fails a risk check. It makes sure similar systems are handled in similar ways regardless of who is involved or how busy the team happens to be.
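Those questions map naturally onto a small routing function. The sketch below is an assumption about how such a workflow might be encoded; the tier names, document names, and approver roles are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    name: str
    risk_tier: str                      # "high", "limited", or "minimal"
    documents: set[str] = field(default_factory=set)
    risk_check_passed: bool = True


# Hypothetical policy tables: required documentation and approver per tier.
REQUIRED_DOCS = {
    "high": {"risk_assessment", "data_sheet", "model_card"},
    "limited": {"risk_assessment"},
    "minimal": set(),
}
APPROVERS = {
    "high": "ai-governance-board",
    "limited": "team-risk-lead",
    "minimal": "system-owner",
}


def route(proposal: Proposal) -> dict:
    """Apply the same steps to every proposal, regardless of who submits it."""
    missing = REQUIRED_DOCS[proposal.risk_tier] - proposal.documents
    if missing:
        return {"status": "returned", "missing": sorted(missing)}
    if not proposal.risk_check_passed:
        return {"status": "escalated", "to": "ai-governance-board"}
    return {"status": "pending_approval", "to": APPROVERS[proposal.risk_tier]}


print(route(Proposal("loan-triage", "high", {"risk_assessment"})))
```

Because the rules live in data rather than in reviewers' heads, two similar proposals get the same treatment even when different people handle them.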

Without this layer, governance stays inconsistent and hard to scale. With it, decisions become repeatable across teams instead of dependent on whoever happens to review them. Trustible built its platform around this exact operationalization challenge, which is why its customer base skews toward large enterprises and government agencies where the coordination problem is most acute.

Organizations with hundreds of AI use cases moving through review pipelines simultaneously cannot manage that volume through ad hoc processes. The workflow layer is what makes governance scale without proportionally scaling the governance team.

4. Compliance and Evidence

Governance must be shown, not just defined. This layer produces the records that prove controls exist and are being followed. It includes audit logs, control mappings, and structured documentation aligned with frameworks like the EU AI Act and ISO 42001.

The important difference is how the evidence is created. Some platforms pull records directly from live systems. Others rely on manual input from teams. During an audit, that difference is not subtle. It determines whether the organization can defend its governance or not.

Regulators and auditors evaluate behavior, not intent. If documentation does not align with observed system behavior, it weakens the organization’s position. Platforms that generate evidence automatically from operational systems produce records that reflect what actually happened. Platforms that depend on human-entered documentation produce records that reflect what humans chose to document. That gap becomes obvious under examination.
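To make the difference concrete, here is a minimal sketch of evidence captured directly from operational events. It uses a hash-chained log, which is a technique chosen for illustration and not something the platforms discussed here are confirmed to use; the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(event: dict, prev_hash: str) -> str:
    """Hash the event together with the previous record's hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list[dict], event: dict) -> dict:
    """Append an event emitted by a live system, chained to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        if record["prev"] != prev_hash or record["hash"] != _digest(record["event"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True


audit_log: list[dict] = []
append_record(audit_log, {"system": "credit-model", "action": "deploy", "approved_by": "risk-lead"})
append_record(audit_log, {"system": "credit-model", "action": "retrain", "approved_by": "risk-lead"})
print(verify(audit_log))
```

A record produced this way reflects what the system emitted at the time, and later edits are detectable, which is the property that human-entered documentation cannot offer.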

This layer turns internal governance into external accountability. Organizations that can produce clear, traceable evidence organized around recognized frameworks stand in a fundamentally stronger position during regulatory reviews or customer security assessments. Those that cannot face longer audits, higher scrutiny, and greater compliance risk. The capability is not cosmetic. It is what separates defensible governance from aspirational documentation.

5. Operational Governance

AI systems keep changing. Models get retrained, data pipelines shift, ownership moves between teams, and use cases expand. Operational governance keeps the process continuous instead of one-time.

It includes intake processes for new systems, registration workflows at deployment, lifecycle tracking, and cross-functional coordination so the right people stay involved as systems evolve. ModelOp’s approach to lifecycle automation is a clear example at enterprise scale: systems are managed from intake to retirement within a single coordinated system.

This layer makes sure governance does not drift over time. Most governance programs fail here. They are designed for initial deployment, not for the ongoing changes that happen afterward. Treating governance as an ongoing process rather than a one-time decision is what keeps it aligned with reality.

Without operational governance, even the best policies become outdated quickly. A system approved six months ago can look very different today. Operational governance closes that gap by treating AI systems like living assets that require ongoing attention instead of static approvals that are filed away and forgotten.

6. Technical Assurance and Monitoring

This layer connects governance policies to what the systems are actually doing in production. It includes pre-deployment evaluation for bias, robustness, and explainability, plus ongoing monitoring, drift detection, and red teaming.

Testing shows how a system behaves in a controlled environment. Monitoring shows how it behaves when control is gone. Both are needed because a model can pass every test during development and still behave differently once it meets real-world data.
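One common way to quantify "behaves differently once it meets real-world data" is the population stability index (PSI), which compares the distribution of a feature or score at test time with its distribution in production. The article does not name a specific drift metric, so PSI is an assumption here, as are the bin count and the 0.2 alert threshold, which is a frequently cited rule of thumb rather than a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population stability index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # scores seen during testing
live = [0.5 + i / 200 for i in range(100)]    # hypothetical shifted production scores

ALERT_THRESHOLD = 0.2  # rule-of-thumb assumption
drifted = psi(baseline, live) > ALERT_THRESHOLD
print(drifted)
```

Testing would only ever see `baseline`; a monitoring job that recomputes the index on fresh production data is what surfaces the shift.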

Monitaur focuses specifically on the production monitoring side, which is why its positioning resonates with financial services and insurance organizations where models make consequential decisions continuously in live environments. Holistic AI covers both sides, which is why it appeals to organizations that need governance supported by actual evidence of system behavior rather than documentation of what behavior was intended to be.

The distinction between testing and monitoring is operationally important. Without monitoring, deviations may go unnoticed until they create measurable impact. Technical assurance ensures that governance is grounded in real outcomes rather than initial assumptions about system performance.

7. Data Governance

AI systems create data questions that traditional enterprise data governance never had to handle. This layer tracks data lineage, monitors flows through models, enforces usage rules, and manages privacy obligations such as deletion and unlearning.

If an organization cannot trace how data moves through its AI systems, it cannot prove compliance, regardless of what its policies say. Relyance AI built its platform specifically around this problem, tracking data flows from source code through model inference in real time rather than relying on periodic audits or self-reported documentation.
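Tracing data movement is, at its core, a graph question: starting from a source, which systems does the data eventually reach? The sketch below is a minimal illustration under that assumption. The `FLOWS` map and its node names are hypothetical, and it is not a description of how Relyance AI or any other platform implements lineage.

```python
from collections import deque

# Hypothetical flow map: each key sends data to the listed destinations.
FLOWS = {
    "crm.customers": ["feature_store.profiles"],
    "feature_store.profiles": ["credit-model.inference"],
    "web.clickstream": ["recsys.inference"],
    "credit-model.inference": ["decision_log"],
}


def downstream(source: str, flows: dict[str, list[str]]) -> set[str]:
    """Breadth-first walk returning every node that data from `source` can reach."""
    seen: set[str] = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in flows.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


print(sorted(downstream("crm.customers", FLOWS)))
```

With a map like this kept current, a question such as "which models and logs would a customer deletion request touch?" becomes a lookup rather than an investigation.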

The practical value of that capability becomes clear during regulatory examinations under GDPR or the EU AI Act. Organizations are expected to demonstrate not just that their policies address data usage but that their systems actually enforce those policies as data moves through them.

Data lineage tracking, data flow monitoring, and privacy alignment capabilities are increasingly being built into AI governance platforms that started from other directions, but the depth of coverage varies significantly. Organizations with substantial privacy exposure should evaluate these capabilities independently rather than assuming that any platform with “data governance” listed in its feature set provides equivalent coverage.

8. Third-Party AI Governance

A large and growing share of AI risk comes from systems the organization did not build itself. This layer covers vendor risk assessments, oversight of third-party models, API dependency tracking, and monitoring of foundation model services used inside internal applications.

In many organizations, the largest AI risks sit entirely outside internal systems. The EU AI Act makes this especially relevant because it places obligations on deployers, not just on the providers that develop AI systems. Organizations now need visibility and controls over the AI they consume from vendors just as much as the AI they build internally.

This includes vendor AI risk management, which evaluates the governance practices of AI providers before procurement and monitors them on an ongoing basis. It includes third-party model oversight, which tracks how externally provided models behave in the organization’s specific deployment context rather than relying on vendor-provided performance claims.

The gap between what most organizations know about the AI in their own systems and what they know about the AI in their vendor systems is substantial. It is also a gap that regulators are increasingly aware of.

9. Runtime Control

Runtime control shifts governance from watching what happens to actively shaping what is allowed to happen. This includes guardrails that evaluate inputs and outputs in real time, as well as deterministic gating that requires explicit validation before an agent can access certain tools or take certain actions.

For agentic systems especially, this layer matters because the cumulative effect of many small autonomous steps can create significant risk even when no single step looks problematic. Runtime enforcement is the layer that makes governance materially meaningful for autonomous AI.
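Both ideas, explicit validation before a tool call and attention to cumulative effect, can be sketched together. This is a hypothetical gate, not a description of any product: the tool names, the per-call risk scores, and the notion of a session-level risk budget are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ToolGate:
    """Deterministic gate evaluated before every tool call an agent attempts."""
    allowed_tools: set[str]
    needs_approval: set[str]       # tools that require explicit human validation
    risk_budget: float             # cap on cumulative risk for one session
    spent: float = 0.0
    approvals: set[str] = field(default_factory=set)

    def check(self, tool: str, risk: float) -> str:
        if tool not in self.allowed_tools:
            return "deny"
        if tool in self.needs_approval and tool not in self.approvals:
            return "needs_approval"
        if self.spent + risk > self.risk_budget:
            return "deny"  # no single step is large, but the total exceeds the budget
        self.spent += risk
        return "allow"


gate = ToolGate(
    allowed_tools={"search", "send_email"},
    needs_approval={"send_email"},
    risk_budget=1.0,
)
decisions = [gate.check("search", 0.4) for _ in range(3)]
print(decisions)
```

The third `search` call is refused even though it is identical to the first two, which is the cumulative-risk behavior that output filtering on individual steps cannot express.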

Platforms like WeRAI and X-Loop3 Labs approach this from an architectural direction, arguing that governance should shape the space an AI system operates in before the model processes input at all, rather than filtering output after it has already been generated. This pre-semantic approach is early-stage but technically interesting, particularly as the limitations of output-layer guardrails become more visible in agentic deployment contexts.

Without runtime control, governance depends on identifying issues after they occur, which may be too late to prevent impact.

10. Emerging Capabilities: Agentic AI Governance

Agentic systems introduce a different class of governance challenge. These systems operate across sequences of actions, interacting with tools, data sources, and external systems over time.

Traditional governance frameworks were not designed for systems that act continuously without supervision. Agent Behavioral Contracts are one emerging approach. They define enforceable rules for what actions an agent can take, what resources it can access, and when it must escalate to a human.
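As a rough illustration of what such a contract might contain, the sketch below encodes the three elements just described: permitted actions, permitted resources, and an escalation condition. The structure, field names, and the amount-based escalation rule are assumptions made for this example; there is no settled format for these contracts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentContract:
    """Hypothetical contract: what an agent may do, on what, and when to escalate."""
    allowed_actions: frozenset[str]
    allowed_resources: frozenset[str]
    escalate_above_amount: float


def evaluate(contract: AgentContract, action: str, resource: str, amount: float = 0.0) -> str:
    """Return "block", "escalate_to_human", or "permit" for one proposed step."""
    if action not in contract.allowed_actions or resource not in contract.allowed_resources:
        return "block"
    if amount > contract.escalate_above_amount:
        return "escalate_to_human"
    return "permit"


refund_agent = AgentContract(
    allowed_actions=frozenset({"read", "issue_refund"}),
    allowed_resources=frozenset({"orders_db", "payments_api"}),
    escalate_above_amount=100.0,
)

print(evaluate(refund_agent, "issue_refund", "payments_api", amount=250.0))
```

Because the contract is data, it can be reviewed, versioned, and approved through the same workflows as any other policy artifact.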

ModelOp launched comprehensive agentic AI governance capabilities in 2025, including agent registries and per-use-case approval workflows for agentic systems. Saidot extended its platform with an Agent Catalogue that governs AI agents deployed through Azure AI Foundry. Both represent the beginning of a governance practice for autonomous systems that is still being defined as the systems themselves evolve.

The focus is no longer only on what a system produces, but on what it is allowed to do at each stage of execution. This shift marks the move from governing static models to governing systems that plan and act continuously on their own.

Governance Beyond the Platform

Platform capabilities address the operational and technical dimensions of AI governance, but governance as an organizational practice extends considerably further. Ethics and cultural governance includes the human structures that determine how AI development decisions are made: ethics review committees, AI literacy programs, values alignment processes, and the organizational culture that either reinforces or undermines whatever governance policies are formally in place. A platform cannot substitute for an organization that has genuinely committed to responsible AI development; it can only support and structure a commitment that already exists.

Legal and intellectual property governance addresses dimensions that platforms touch only partially: copyright risk in AI-generated content, IP indemnification obligations from AI vendors, licensing questions for models trained on proprietary data. Financial governance, sometimes called AI FinOps, addresses the economics of AI deployment: token usage costs, compute allocation, rate limiting, and the ROI measurement that connects governance investment to business outcomes. Environmental and sustainability governance is still nascent in most organizations but is beginning to appear in ESG reporting as the energy footprint of large model deployments becomes more visible.

These domains are important to name because they reveal the limits of what software can solve. A platform can automate evidence collection and enforce policy workflows. It cannot create organizational commitment to accountability, develop institutional understanding of AI risk, or substitute for legal expertise in navigating IP liability. Governance is an organizational capability that platforms support, not a product that platforms replace.

How to Choose the Right Coverage

The starting point for any governance evaluation should be identifying your primary risk exposure rather than your most immediate compliance deadline. Compliance deadlines create urgency, but addressing them in isolation without understanding the broader risk landscape typically produces governance programs that satisfy regulators on paper while leaving operational risk unaddressed.

Start with the Risk Type

Compliance risk — the risk of failing a regulatory examination or audit — is addressed primarily by risk assessment, policy workflows, and evidence generation capabilities. Organizations primarily facing compliance risk need platforms that generate defensible audit trails automatically, map controls to specific regulatory frameworks, and produce documentation that regulators recognize as meeting their standards. OneTrust, Credo AI, and Trustible are among the platforms built with this use case as a primary design consideration.

Production risk — the risk that deployed AI systems behave incorrectly or harmfully in live environments — requires monitoring and behavioral oversight. Organizations with AI systems making consequential decisions continuously in production environments need platforms that track model behavior over time, detect drift, and surface anomalies before they compound into significant problems. Monitaur and Holistic AI address this most directly.

Data risk — the risk that AI systems process personal data incorrectly or in violation of privacy commitments — requires data governance capabilities that track how information actually flows through AI systems rather than how governance documentation says it should flow. Relyance AI was built specifically for this problem, and OneTrust addresses it through its broader privacy and data governance ecosystem.

Control risk — the risk that autonomous AI systems take harmful actions without appropriate oversight — requires runtime enforcement, guardrails, and gating mechanisms that prevent problematic actions before they occur rather than detecting them afterward. This is the most technically demanding governance requirement and the one currently least well-served by the mainstream platform market.

Scale and coordination risk — the risk that governance processes break down as AI deployment scales across an organization — requires lifecycle orchestration and system-of-record capabilities that maintain governance consistency across hundreds of systems and multiple teams. ModelOp addresses this most directly for large enterprise environments.
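The mapping from risk type to required capability lends itself to a simple gap check. In the sketch below, the `REQUIRED` table paraphrases the five risk types above; the capability labels are shorthand chosen for this example, and `platform_a` is a hypothetical vendor profile, not a claim about any named product.

```python
# Capabilities each risk type calls for, paraphrased from the list above.
REQUIRED = {
    "compliance": {"risk_assessment", "policy_workflows", "evidence_generation"},
    "production": {"monitoring", "drift_detection"},
    "data": {"data_flow_tracking"},
    "control": {"runtime_enforcement", "guardrails", "gating"},
    "scale": {"lifecycle_orchestration", "system_of_record"},
}


def coverage_gaps(risk_types: list[str], platform_capabilities: set[str]) -> set[str]:
    """Return the capabilities your risk profile needs that the platform lacks."""
    needed = set().union(*(REQUIRED[r] for r in risk_types))
    return needed - platform_capabilities


# Hypothetical platform profile and a risk profile with two primary exposures.
platform_a = {"risk_assessment", "policy_workflows", "evidence_generation", "monitoring"}
gaps = coverage_gaps(["compliance", "production"], platform_a)
print(sorted(gaps))
```

Running the same check against each shortlisted platform shows where a second tool would be needed, before the gap is discovered in production.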

Most Organizations Require Multiple Layers

It is worth being direct about something that vendor marketing tends to obscure: most organizations with meaningful AI deployment need governance coverage across several layers simultaneously, and no single platform provides equal depth across all of them. Financial institutions typically require compliance documentation for regulatory examinations alongside production monitoring for models making credit or fraud decisions, alongside data governance for the personal financial data those models process. SaaS companies with AI features typically require data governance for user data alongside runtime control to prevent harmful model outputs alongside compliance workflows for customer security reviews.

The practical implication is that governance platform selection is often not a single-vendor decision. It is a portfolio decision about which capabilities to prioritize first, which platform provides the strongest coverage of the most critical layers, and where complementary tools might be needed over time. Organizations that approach governance as a single-vendor purchase frequently discover gaps later that are expensive to address retroactively.

Four Questions to Ask Every Vendor

Across all of the capability layers described in this guide, four questions separate platforms that provide meaningful governance from platforms that provide the appearance of governance. The first is whether the platform connects to live systems or operates as documentation only. Platforms connected to real infrastructure generate governance evidence from what systems actually do. Platforms that rely on human-entered documentation generate evidence from what humans choose to report, which is a different thing entirely during an examination.

The second is whether evidence is generated automatically or requires manual input. Manual evidence collection introduces gaps whenever governance teams are under-resourced, which is often the case. Automated evidence collection is continuous and does not depend on governance teams prioritizing documentation tasks during periods of competing demands.

The third is whether the platform enforces behavior or only tracks it. Monitoring tells you what happened. Enforcement shapes what can happen. Both have value, but they solve different problems, and organizations with runtime control requirements should not accept monitoring capabilities as a substitute.

The fourth is whether the platform can scale across teams and environments without proportionally scaling the governance team. Governance that works for ten AI systems but breaks down at one hundred is not a governance solution for a scaling organization. Platforms that embed intelligence into workflows — guiding reviewers through assessments, surfacing relevant context automatically, routing decisions to appropriate stakeholders without manual coordination — scale governance capacity without requiring equivalent growth in headcount.

Common Mistakes in Governance Platform Evaluation

The most common mistake is assuming that one platform covers all governance needs. Vendor positioning is designed to suggest comprehensive coverage, and feature lists are constructed to maximize the impression of breadth. The operational depth of any given capability is rarely visible from a feature list or a demo; it becomes visible during implementation when specific requirements encounter real limitations.

The second common mistake is confusing compliance workflows with operational control. A platform that generates documentation and manages review workflows produces compliance artifacts. It does not necessarily prevent AI systems from operating outside policy boundaries between review cycles. Organizations that treat documentation-generation platforms as equivalent to enforcement-capable platforms frequently discover the difference when something goes wrong in production: their governance records confirm that the problem occurred but do nothing to explain why controls did not prevent it.

The third mistake is over-prioritizing documentation over operational visibility. In environments where regulatory pressure is immediate, the incentive is to build governance programs that satisfy auditors quickly. That typically means documentation-first approaches that generate audit-ready artifacts without connecting deeply to live system behavior. Those programs pass audits. They are less effective at actually preventing the harms that governance is supposed to address.

Our Take

AI governance is not a single product category. It is a system of capabilities that operate across different layers of an organization's AI environment, each addressing a different type of risk and a different point in the AI lifecycle. Visibility without monitoring leaves organizations blind to production problems. Policy definition without enforcement produces documentation that satisfies auditors but does not constrain behavior. Compliance infrastructure without data governance leaves privacy exposure unaddressed. Runtime control without operational governance breaks down at scale.

The organizations that navigate this most effectively are the ones that approach governance as an architectural question rather than a procurement question. Before evaluating vendors, they map their actual risk exposure across the capability layers described in this guide. They identify which layers are most critical for their specific regulatory environment, industry, and deployment context. They evaluate platforms based on operational depth in those specific layers rather than the breadth of a feature list. And they plan for the reality that no single platform will provide equal depth across everything they need.

GetAIGovernance.net organizes platforms based on what they actually do across these capability layers, allowing organizations to evaluate solutions based on operational reality rather than marketing positioning. The marketplace at GetAIGovernance.net maps governance platforms to the specific capabilities where they provide the strongest coverage, so organizations can make procurement decisions anchored in their actual risk exposure rather than vendor-defined categories.