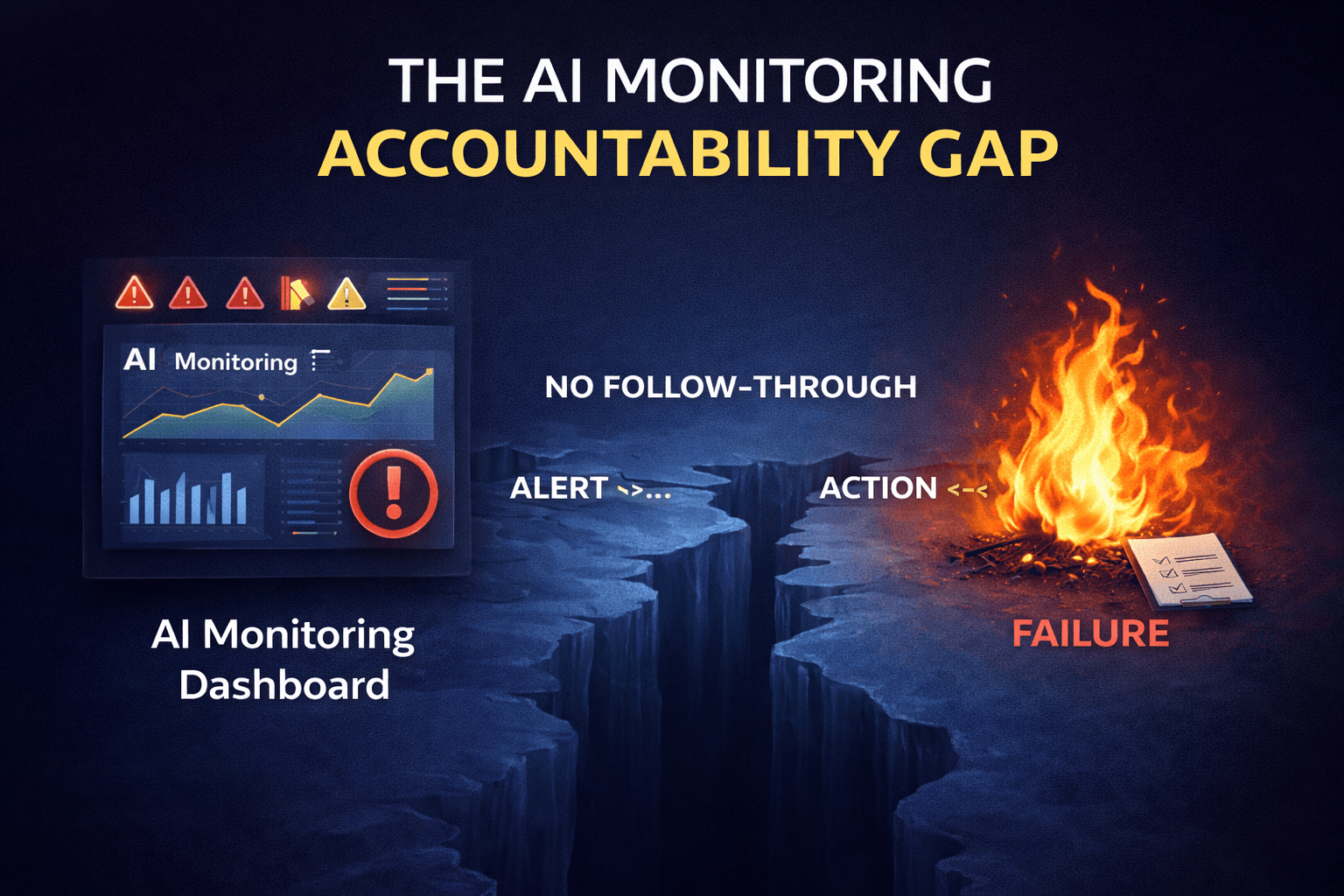

Every enterprise that bought an AI monitoring platform believes it has AI monitoring. The dashboard is live. The data is flowing. Somebody checks it when they remember to. Leadership got the implementation update, nodded, and moved on. The box is checked, the budget is spent, and the program is declared complete.

Here's what didn't get built: the human layer. Nobody defined who owns each signal category. Nobody documented what response is required when a threshold is crossed. Nobody established an escalation path, a response SLA, or an accountability structure for what happens when the platform surfaces something concerning. The monitoring vendor didn't build that layer — it isn't their product. The implementation team didn't build it because getting the data flowing was the deliverable. Leadership didn't ask about it because the dashboard looked impressive.

So it never got built. The platform runs. The data accumulates. The signals fire. And they fire into a vacuum.

78% of business executives lack strong confidence they could pass an independent AI governance audit within 90 days.

This is not a policy problem. Most of these organizations have policies. It's an accountability problem — the monitoring data exists and nobody can show what was done with it.

A monitoring dashboard that nobody acts on is not a monitoring program. It is a liability dressed up as infrastructure. And right now, that describes the majority of enterprise AI monitoring deployments.

How Monitoring Deployments Actually Get Built

Monitoring platforms get purchased at one of two moments: during a governance initiative, when leadership wants proof of oversight and somebody needs to show the board something concrete, or after a near-miss incident, when someone gets scared and a budget gets unlocked fast. Either way, the implementation follows the same path: tool selected, data sources connected, signals configured, dashboard built, demo presented to leadership, implementation declared complete.

What never gets built during any of that: the accountability layer. AI monitoring covers twelve distinct signal categories, including performance, drift, output quality, cost, and user behavior signals, and each one requires a named human owner, a documented response procedure, and a defined escalation path. None of that exists in most implementations because none of it was in the project scope.

75% of organizations have AI usage policies. Only 54% maintain incident response playbooks.

That 21-point gap is the accountability gap made measurable. Policy without a response procedure is documentation. It is not a program.

Source: Pacific AI 2025 AI Governance Survey, n=351

The monitoring vendor doesn't build the accountability layer because it isn't their product. Their product is signal capture and visualization. What your team does when a signal fires — that's yours to figure out. Most teams never do. The platform runs. The signals accumulate. The tab stays open. Nothing changes until something breaks loudly enough that someone has to respond.

"Organizations that are deploying AI can't show how decisions are made and who is accountable for the outcome. This is the AI proof gap."

The gap between a monitoring deployment and a monitoring program is not a technology gap. Every major platform in the Model Observability category is capable of capturing meaningful signals. The gap is structural. And it sits entirely on the human side of the equation.

The Signal-to-Action Gap

Walk through what actually happens when a monitoring signal fires in a typical enterprise environment. Hallucination rate trending upward over the past two weeks. Drift signal crossing a configured threshold. A cost spike appearing in the token usage data. An anomalous user behavior pattern surfacing in the prompt logs. The signal is real. The platform captured it correctly. Now what?

Who owns that signal? If the honest answer is "the ML team probably" or "whoever's on call" or "we'd flag it in the next sprint review" — that is not ownership. That is diffusion of responsibility wearing a process costume. A signal without a named owner is noise with a prettier graph on top of it. A named owner without a response SLA is a suggestion. A response SLA without documented escalation criteria is a good intention that evaporates under deadline pressure.

Only 28% of organizations have formally defined oversight roles for AI governance, according to the 2024 IAPP Governance Survey. The picture at the top is similar: in McKinsey's State of AI survey, only 28% of respondents said their CEO takes direct responsibility for AI governance oversight, and only 17% said their board does. When nobody owns the program at the top, nobody owns the signals at the bottom. The accountability gap is organizational, not technical.

"Every organization I speak with has a monitoring dashboard. Almost none of them can tell me who is responsible for acting on what it shows by end of day. That's not a monitoring program. That's a very expensive way to document that something went wrong."

Nathaniel Niyazov, CEO, GetAIGovernance.net

Real signal ownership requires five things: a named individual per signal category, a defined response timeframe, a documented investigation standard, escalation criteria, and a record of what was done and why. Almost no enterprise monitoring deployment has all five for all active signal categories. Most have none for most signals. That isn't a criticism of the team. It's a description of what the implementation left unbuilt.
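One way to make the five requirements checkable is to keep one record per signal category and flag which requirements are still empty. The sketch below is a minimal Python illustration under that assumption; `SignalOwnership`, its field names, and `audit` are hypothetical, not part of any monitoring platform's API.

```python
from dataclasses import dataclass, field
from datetime import timedelta
from typing import Optional


@dataclass
class SignalOwnership:
    """Hypothetical per-category record covering the five ownership requirements."""
    category: str
    owner: Optional[str] = None                    # 1. named individual, not a team
    response_window: Optional[timedelta] = None    # 2. defined response timeframe
    investigation_standard: Optional[str] = None   # 3. ID or link of the documented standard
    escalation_criteria: list[str] = field(default_factory=list)  # 4. when and to whom
    response_log: Optional[str] = None             # 5. where the record of actions lives

    def missing(self) -> list[str]:
        """Return the requirements this category does not yet satisfy, in order."""
        gaps = []
        if not self.owner:
            gaps.append("named owner")
        if self.response_window is None:
            gaps.append("response timeframe")
        if not self.investigation_standard:
            gaps.append("investigation standard")
        if not self.escalation_criteria:
            gaps.append("escalation criteria")
        if not self.response_log:
            gaps.append("response record")
        return gaps


def audit(records: list[SignalOwnership]) -> dict[str, list[str]]:
    """Map each category that has gaps to its missing requirements."""
    return {r.category: r.missing() for r in records if r.missing()}
```

Run against an inventory of active categories, `audit` returns only the categories with gaps, which doubles as a work list for the accountability layer. A category with nothing but a dashboard and a threshold alert comes back missing all five.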

| Signal Category | What Most Teams Have | What's Missing |
|---|---|---|
| Hallucination Rate | Dashboard display, threshold alert | Named owner, response SLA, investigation protocol, escalation path |
| Data Drift | Statistical chart, optional email alert | Defined acceptable drift threshold, retrain trigger criteria, compliance documentation |
| Cost / Token Spike | Usage graph, monthly billing report | Real-time ceiling enforcement, per-agent attribution, investigation workflow |
| Anomalous Behavior | Flag in the dashboard | Triage criteria, security team routing, audit trail of investigation |
| Output Quality | Aggregate score trend | Session-level trace access, documented acceptable quality floor, rollback authority |

For a complete breakdown of what each signal category actually measures and which platforms cover it well, the GAIG AI Monitoring Signals guide maps all twelve categories to specific platform capabilities and evaluation criteria.

Alert Fatigue Looks Different in AI

Traditional operations teams have years of experience tuning alert thresholds. They've investigated enough false alarms to develop institutional knowledge about what noise looks like versus what a real incident looks like. AI monitoring teams are new at this. The signals are new. The baselines are recent. The thresholds are educated guesses made during implementation, calibrated against a production environment that barely existed at the time.

What happens next is predictable. The platform fires frequently in the first weeks because thresholds aren't calibrated yet. The team investigates and finds nothing actionable. They adjust thresholds. It fires again. They investigate again. Nothing actionable. Within a few months the team has developed quiet institutional skepticism toward the monitoring program — not consciously, not officially, but practically. The alerts feel like noise. The investigations feel like overhead. The dashboard becomes background wallpaper.

63% of daily security alerts go entirely unaddressed across enterprise environments.

PagerDuty's 2025 State of Digital Operations found that the average on-call engineer receives roughly 50 alerts per week, but only 2–5% require human intervention. AI monitoring teams are being trained by the same dynamic.

Source: Vectra AI 2026 / PagerDuty 2025
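Taking the PagerDuty figures at face value, the weekly arithmetic shows how little of the alert stream is actionable:

```latex
\begin{aligned}
50 \times 0.02 &= 1   && \text{actionable alerts per week, low end} \\
50 \times 0.05 &= 2.5 && \text{actionable alerts per week, high end}
\end{aligned}
```

That leaves 47.5 to 49 of every 50 alerts, or 95% to 98% of the queue, needing no human action. That ratio is what the team learns from.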

Then a real signal fires. A genuine hallucination rate spike. A real drift event that precedes a production quality failure. An anomalous prompt pattern that signals active misuse. It gets the same response as the last forty alerts: a quick look, a judgment call that it's probably fine, a return to the backlog.

73% of organizations experienced outages directly linked to ignored or suppressed alerts.

The ignored alert became the outage. Alert fatigue in AI monitoring doesn't announce itself. It looks like reasonable prioritization right up until the moment it doesn't.

Source: Splunk State of Observability 2025, n=1,855

The solution to AI monitoring alert fatigue is not better thresholds. It's building the accountability structure before the fatigue sets in — before the team learns to ignore the alerts, before the institutional skepticism calcifies. Signal ownership, response SLAs, and investigation protocols need to exist at implementation, not six months later when something finally breaks loudly enough that leadership notices.

Unactioned Monitoring Data Is a Compliance Liability

Most teams treating unactioned monitoring data as a backlog problem don't realize it's also a legal problem. In a regulated environment — financial services, healthcare, or any organization operating under the EU AI Act — having a monitoring system that captured evidence of a problem is not a neutral fact.

If that monitoring data shows a signal that was never investigated and never responded to, the organization has documented proof that it knew or should have known something was wrong and chose not to act. That is worse than not having monitored at all. No monitoring means no evidence. Unactioned monitoring means evidence of inaction.

EU AI Act — Article 72: Post-Market Monitoring

The post-market monitoring system shall "actively and systematically collect, document and analyse relevant data" on the performance of high-risk AI systems throughout their lifetime, allowing the provider to "evaluate the continuous compliance" of AI systems with regulatory requirements.

The operative word is analyse. The regulation does not stop at data collection. It requires analysis, and the stated purpose of that analysis is to evaluate continuous compliance, which means being able to show what was done in response to what the data showed. A dashboard that collects and displays signals without documented analysis, investigation, and response is not Article 72 compliant, regardless of how sophisticated the platform is.

Source: EU AI Act, Article 72 — Post-Market Monitoring by Providers

In a regulatory examination or litigation context, a dashboard full of unaddressed signals is not a sign of a mature monitoring program. It is a paper trail. The compliance team that signed off on the monitoring implementation may have believed the platform itself constituted compliance. The regulator reviewing unactioned alert logs will not share that view.

For a full breakdown of what AI compliance frameworks actually require versus what most organizations have in place, the GAIG AI Compliance guide maps certification requirements, regulatory obligations, and the specific evidence organizations need to produce.

The Questions Your Monitoring Vendor Never Asked You

Every monitoring vendor demo shows what the platform surfaces. None of them walk through what your team does when it surfaces something. That omission is not accidental — the vendor's job ends when the data flows. Your accountability layer is out of scope for them. That's not a criticism. It's a structural reality worth naming clearly before you sign the contract.

Fewer than 1% of enterprises have achieved truly autonomous remediation, according to ServiceNow's 2025 report. That means more than 99% of organizations still depend on humans to respond to the alerts that fire, and in most cases those humans have no defined process for doing so.

Before your next monitoring platform conversation — or before your next audit of the deployment you already have — your team needs to be able to answer every one of these questions without looking anything up:

When a signal crosses threshold, who gets notified — by name, not by team — and how fast do they need to respond?

What constitutes an adequate investigation versus a dismissal? What documentation does a dismissal require?

What is the escalation path when the first responder can't resolve it within the defined window?

What gets documented for every signal response — and where does that documentation live for audit purposes?

What triggers a governance review versus a model rollback versus a full production shutdown — and who has the authority to make each of those calls?
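The last question is the one most deployments leave implicit. Below is a minimal Python sketch of an explicit trigger and decision-authority table. It assumes three hypothetical response tiers, placeholder severity labels, and placeholder names. The real trigger criteria and names belong in your documented response procedures, and none of this mirrors a specific platform's permission model.

```python
from enum import Enum


class Action(Enum):
    """Hypothetical response tiers, from least to most disruptive."""
    GOVERNANCE_REVIEW = 1
    MODEL_ROLLBACK = 2
    PRODUCTION_SHUTDOWN = 3


# Hypothetical trigger rules: severity label -> required action.
TRIGGERS = {
    "elevated": Action.GOVERNANCE_REVIEW,
    "severe": Action.MODEL_ROLLBACK,
    "critical": Action.PRODUCTION_SHUTDOWN,
}

# Hypothetical authority table: named individuals, not teams.
AUTHORITY = {
    Action.GOVERNANCE_REVIEW: {"a.khan", "m.lopez", "r.chen"},
    Action.MODEL_ROLLBACK: {"m.lopez", "r.chen"},
    Action.PRODUCTION_SHUTDOWN: {"r.chen"},
}


def required_action(severity: str) -> Action:
    """Look up the action a severity triggers; undocumented labels fail loudly."""
    if severity not in TRIGGERS:
        raise ValueError(f"no documented trigger for severity {severity!r}")
    return TRIGGERS[severity]


def can_authorize(person: str, action: Action) -> bool:
    """True only if the person is explicitly named for this call."""
    return person in AUTHORITY.get(action, set())


def who_decides(severity: str) -> list[str]:
    """Names to route to for a given severity, sorted for stable output."""
    return sorted(AUTHORITY[required_action(severity)])
```

Raising on an unknown severity is deliberate: a signal that matches no documented trigger is itself a gap in the procedure, and a silent default would hide it.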

If your team can't answer all of those questions right now, your monitoring deployment is producing data. It is not producing governance. The 2025 Autonomous IT Report describes this clearly: most organizations have established AI governance frameworks, but "many remain policy-heavy and operationally light." The monitoring platform is the policy. The accountability layer is the operations. And the operations layer is what's missing.

What a Monitoring Program Actually Looks Like

The gap between a monitoring dashboard and a monitoring program is the accountability layer. Building it isn't technically complex. It's organizationally specific — which means no vendor can build it for you. But it has a clear structure that every implementation should produce before go-live.

Named signal owners across every active monitoring category. Not a team. A specific person. Ownership means accountability. Accountability means someone's job is affected if the signal is ignored.

Documented response procedures per signal type. What investigation looks like, what the acceptable timeframe is, and what the acceptable outcomes are for resolution versus escalation.

SLAs with automatic escalation. Missing the response window creates an escalation automatically. Not optionally. The SLA is only real if breaking it has a consequence that doesn't require a human to notice it was broken.

Integration between monitoring signals and governance workflows. Signals don't just appear in a dashboard — they create tracked items in the governance process with owners, deadlines, and audit trails.

Audit trails that capture human response, not just system behavior. The auditor reviewing your monitoring program wants to see that signals fired, were investigated by a named individual within a defined timeframe, and produced a documented response. That's the evidence. Everything else is a dashboard.
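The last three elements fit together: a fired signal becomes a tracked item, the SLA escalates on its own when the window lapses, and every human and system action lands in the audit trail. Below is a minimal Python sketch assuming a hypothetical `TrackedSignal` work item and `open_signal` helper. In a real deployment a scheduler or workflow engine would call `check_sla` on a timer, and the trail would live in durable, append-only storage rather than an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class TrackedSignal:
    """Hypothetical governance work item created when a monitoring signal fires."""
    signal_id: str
    category: str
    owner: str                 # named individual accountable for the response
    escalation_contact: str    # named individual who receives SLA breaches
    opened_at: datetime
    response_window: timedelta
    responded_at: Optional[datetime] = None
    escalated: bool = False
    audit_trail: list[dict] = field(default_factory=list)

    def record(self, actor: str, event: str, at: datetime, note: str = "") -> None:
        """Append an entry: who did what, when, and why."""
        self.audit_trail.append(
            {"at": at.isoformat(), "actor": actor, "event": event, "note": note}
        )

    def respond(self, actor: str, at: datetime, outcome: str) -> None:
        """Record the human response: resolution, documented dismissal, or escalation."""
        self.responded_at = at
        self.record(actor, "responded", at, outcome)

    def check_sla(self, now: datetime) -> bool:
        """Escalate automatically if the window has lapsed with no response yet.

        Returns True only on the call that triggers the escalation.
        """
        deadline = self.opened_at + self.response_window
        if self.responded_at is None and not self.escalated and now > deadline:
            self.escalated = True
            self.record("system", "sla_breached", now,
                        f"escalated to {self.escalation_contact}")
            return True
        return False


def open_signal(signal_id: str, category: str, owner: str, escalation_contact: str,
                window: timedelta, now: datetime) -> TrackedSignal:
    """Turn a fired monitoring signal into a tracked, owned item."""
    item = TrackedSignal(signal_id, category, owner, escalation_contact, now, window)
    item.record("system", "opened", now, f"assigned to {owner}")
    return item
```

The design choice that matters is that `check_sla` needs no human input. The breach itself writes the escalation into the trail, so the evidence an auditor asks for exists whether or not anyone noticed the miss.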

For a deeper look at how AI governance platforms connect monitoring signals to policy enforcement and what the accountability layer looks like in practice across different deployment contexts, the GAIG governance capabilities guide covers the full stack from visibility through runtime control.

Evaluating AI monitoring platforms or auditing an existing deployment? The question to bring to every vendor conversation is what the platform does to ensure signals get acted on — not just what signals it captures. Browse Model Observability and AI Runtime Controls in the GAIG marketplace to compare platforms, or submit an inquiry and we'll help you structure the accountability layer your current deployment is missing.

Submit an Inquiry: https://getaigovernance.net/contact

Our Take


The AI monitoring market moved faster than the accountability frameworks organizations needed to use it. Vendors competed on signal breadth, dashboard sophistication, and integration depth. Nobody competed on "does your team have a defined process for responding to what we surface?" That question doesn't sell platforms. It sells consulting engagements. So it stayed off the sales deck and out of the implementation scope.

The result is a generation of monitoring deployments that are technically functional and operationally inert. The data is there. The signals are real. The platform is doing what it was sold to do. And somewhere in a tab nobody checks daily, a hallucination rate is trending in a direction that no human being has been assigned to act on.

Grant Thornton's 2026 AI Impact Survey puts the consequence plainly: "The organizations pulling ahead have built governance that gives their leaders the confidence to scale AI decisively. The rest are inheriting risks they cannot see and outcomes they cannot prove." A monitoring dashboard with no accountability layer is precisely that — risk you can see displayed and cannot prove you addressed.

The organizations closing this gap are treating the accountability layer as the actual deliverable — not the platform implementation. They're asking the signal ownership questions before go-live. They're building response procedures into the governance program from day one. They're connecting monitoring signals to governance workflows so that alerts don't just fire but create accountable action. And critically, they're treating unactioned monitoring data as the liability it legally is in regulated environments — not as a backlog to get to eventually.

The monitoring platform you bought is capable of being the foundation of a serious governance program. Whether it becomes that foundation or stays a very expensive dashboard depends entirely on the accountability layer your team builds around it.