Key Numbers

72% of organizations running or testing AI agents in production, April 2026

Pillar Security

78% of executives lack confidence they could pass an AI governance audit in 90 days

Grant Thornton 2026

73% of organizations had outages linked to ignored monitoring alerts

Splunk 2025, n=1,855

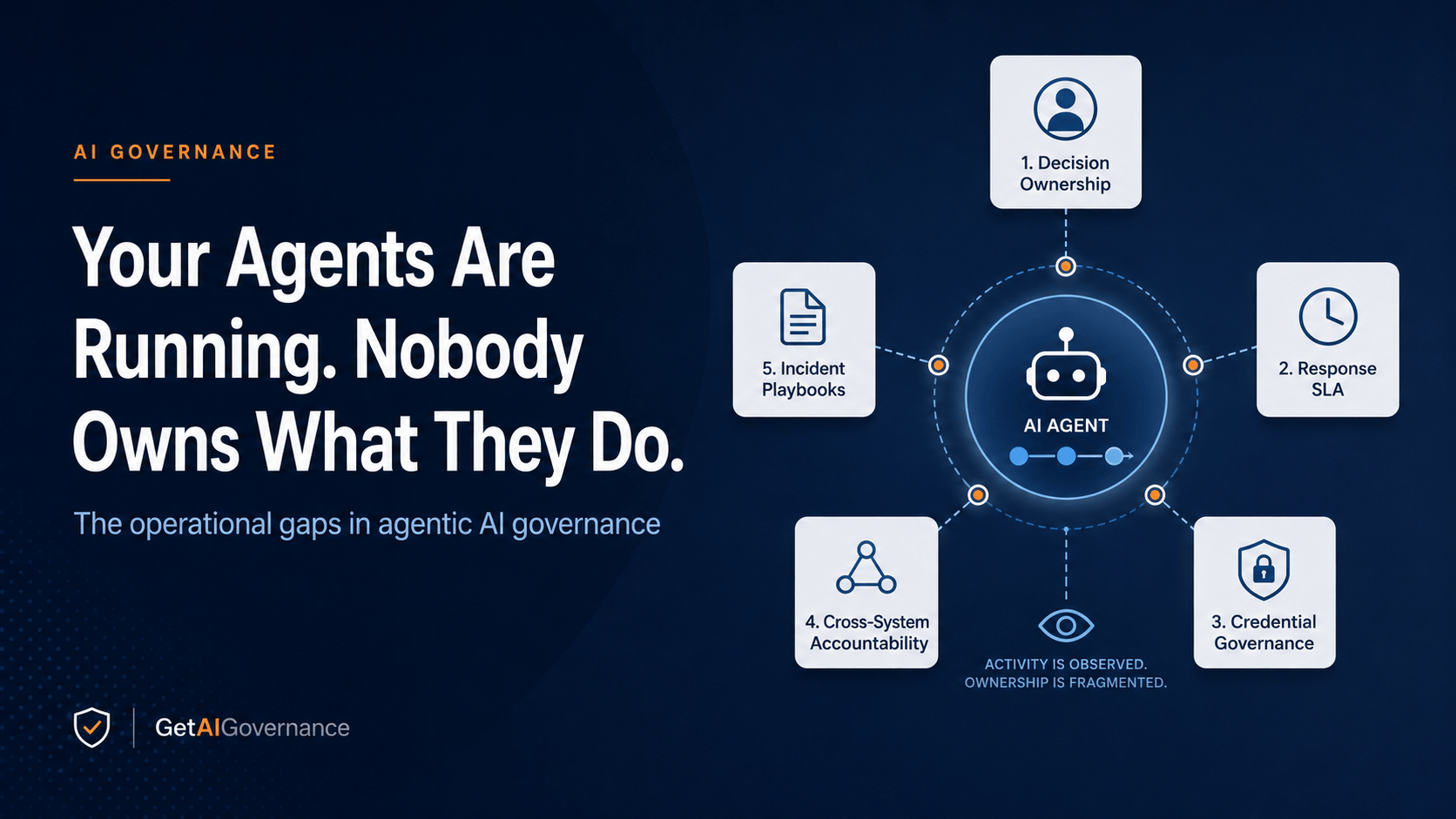

5 specific operational gaps that leave governance programs exposed even after the platform is right

GAIG Analysis


A few years ago, the dominant failure mode in enterprise AI governance was what Anthony Habayeb of Monitaur called compliance theater — documentation, approvals, and dashboards producing the appearance of control while deployed models drifted in production with nobody watching. That framing landed because it was accurate. A meaningful portion of what was being sold as governance had never once connected to a live model. Platforms organized records around systems they'd never observed. Controls were attested by people rather than enforced by code. Green dashboards sat above drifting production models, and the gap between them was invisible by design.

A generation of integrated governance platforms got built to close that gap. The architecture was clear: connect policy to production, test controls against real outputs, generate audit evidence from what the system actually does rather than what someone said it would do. For many enterprises, that gap is now largely closed. Level 3 integration — governance plus monitoring, unified — is commercially available, and organizations with the procurement maturity and internal pressure to buy it have largely bought it.

The failure didn't stop. It shifted one layer up.

Nitin Mehta is a Partner and Digital Risk Leader at EY with direct visibility into how large enterprises are actually deploying and failing with agentic AI. In May 2026 he told CIO&Leader something that should make every governance program manager uncomfortable: the organizations now hitting walls with agentic AI aren't failing because they bought the wrong platform. They're failing because nobody redesigned the organizational layer around autonomous execution. The platform is watching. There is no one accountable for what it sees. An agent orchestrates across ITSM, ERP, and CRM simultaneously, makes a consequential decision, something goes wrong — and when the question surfaces, the room goes quiet. The audit trail exists. The ownership chain doesn't.

"Scaling agentic AI is primarily an operating model challenge, not a technology challenge."

Nitin Mehta, Partner – Digital Risk Leader, EY · CIO&Leader, May 2026

That's the problem this piece is about: the five specific operational gaps that leave governance programs exposed even after the tooling is right. For each one, it covers what the gap looks like in practice, which platforms address it, and the exact question that tells you during procurement whether a vendor actually closes it or just describes it in a demo.

Key Terms

These definitions shape how the five gaps below are framed. They also give you vocabulary to use in vendor conversations, because the difference between a vendor who knows these terms operationally and one who uses them decoratively tells you almost everything you need to know before the demo ends.

Agentic Drift

A behavioral divergence in an autonomous agent — the agent takes a path different from what it was designed to take, not because it malfunctioned, but because its authorization scope was underspecified or its boundaries weren't enforced at runtime as conditions shifted. Unlike model drift, which is a performance change in a static system, agentic drift is an execution change in a live one.

Decision Register

A living record of what classes of decisions an agent is authorized to make autonomously versus which require human review or pre-approval before execution. Distinct from a model registry, which tracks what models exist — a decision register tracks what those models are permitted to decide. Most organizations have the first. Almost none have the second.

Signal Ownership

The assignment of a specific, named human as accountable for reviewing and acting on what a monitoring platform surfaces, with a documented SLA for response time. Without named ownership, a signal is noise. It fires, it gets suppressed or ignored, and the platform produces no governance outcome. Signal-to-Incident Collapse, the Pre-Failure Signal in which alerts stop producing investigations, starts here.

On-Behalf-Of Token Chain

The sequence of delegated credentials through which an agent authenticates to downstream systems, often by acting as a service account that acts on behalf of a user. The accountability question that chain raises — who is responsible for what the agent does with those permissions — almost never has a clean answer at the time of deployment, and auditors always find the gap eventually.

Operating Model

The organizational design that surrounds a technology — defining who owns what, what the escalation paths are, what the response SLAs are, and what happens when something breaks. A governance platform without an operating model is instrumentation without accountability. The platform sees everything. Nobody is structured to act on it.

Permission Creep Drift

The gradual expansion of an agent's effective permissions over time — through OAuth grant accumulation, service account scope expansion, or MCP server access additions — without a corresponding review of whether the expanded permissions are still appropriate for what the agent actually does. It's the agentic-era version of the access control problem, and it compounds faster than its human-identity equivalent.
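Of the terms above, the decision register is the one that maps most directly onto a data structure. The following is a minimal sketch, not a description of any vendor's implementation: the class names, the three authorization modes, and the example agent and owner are all hypothetical. It shows the core idea that every class of decision carries an authorization mode and a named owner, and that anything unregistered falls back to the most restrictive mode.

```python
from dataclasses import dataclass
from enum import Enum


class Authorization(Enum):
    # Hypothetical authorization modes, chosen for illustration only
    AUTONOMOUS = "autonomous"        # agent may act without review
    HUMAN_REVIEW = "human_review"    # agent acts, a human reviews afterwards
    PRE_APPROVAL = "pre_approval"    # a human must approve before execution


@dataclass(frozen=True)
class DecisionClass:
    agent: str
    decision: str
    authorization: Authorization
    owner: str  # named human accountable for this class of decision


class DecisionRegister:
    def __init__(self):
        self._entries = {}

    def register(self, entry: DecisionClass) -> None:
        self._entries[(entry.agent, entry.decision)] = entry

    def lookup(self, agent: str, decision: str) -> Authorization:
        # Assumption: unregistered decision classes default to pre-approval
        entry = self._entries.get((agent, decision))
        return entry.authorization if entry else Authorization.PRE_APPROVAL


register = DecisionRegister()
register.register(
    DecisionClass("support-agent", "escalate_case", Authorization.AUTONOMOUS, "J. Rivera")
)
```

Defaulting to pre-approval is a design choice in this sketch: it means a new decision class has to be explicitly registered, with an owner, before an agent can make it autonomously.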

Where the Compliance Theater Fix Left Things

It's worth being precise about what the integrated governance platforms — the Monitaurs, Credo AIs, ValidMinds operating at Level 3 — actually solved, because it determines exactly where the agentic gap begins. They solved the visibility problem. Policy requirements connect directly to technical controls. Controls are tested against real model outputs. Audit trails are generated from what the system does, not what someone attested it would do. That is meaningful and hard-won, and most organizations publishing about AI governance maturity still haven't reached it.

What Level 3 integration didn't solve — couldn't solve, because it wasn't designed for this — is what happens when the system stops being a model that generates outputs and becomes an agent that takes actions. The governance architecture for observing a model that produces a recommendation is fundamentally different from the architecture required to govern an agent that executes a workflow across six enterprise systems in four seconds without waiting for a human to review the first step. The observability plumbing is still required. The organizational structure around it has to be rebuilt from scratch.

Mehta's framing is specific about why this happens. The Copilot era established one operational pattern: AI augments a human, the human initiates every action, the human remains accountable for the outcome. The governance model for that pattern is workable. The human is still in the loop. The Agentic era inverts it — the agent plans, the agent initiates, the agent executes, and the human's role shifts from actor to reviewer. That shift only holds if the organizational design was rebuilt to support it. Most weren't.

The Pattern Worth Naming

Every major observability acquisition — Cisco buying Robust Intelligence, Snowflake acquiring TruEra, CoreWeave moving on Weights & Biases — was purchasing the visibility layer. The market confirmed that observability is valuable. What those acquisitions didn't and couldn't solve is the organizational structure that determines whether anyone acts on what observability surfaces. That's not a product problem. It never was.

The Five Operational Gaps

Each gap below maps to a specific Pre-Failure Signal from the CISO's governance stack framework — the signals that fire weeks before an incident surfaces. The gaps don't cause incidents by themselves. They're the conditions that turn a recoverable anomaly into a board-level event.

No Named Owner for Autonomous Decisions

Pre-Failure Signal: Ownership Ambiguity · Governance Layer

When an AI agent makes a decision autonomously — escalates a customer case, initiates a financial posting, modifies a production configuration — the platform captures what happened. What it almost never captures is who was accountable for the agent being authorized to make that decision in the first place, and who is responsible for reviewing whether it made it correctly. Those are two different accountability questions, and most governance programs have answered neither of them formally.

The Copilot-era governance model assigned accountability implicitly, because a human initiated every action. The agent model removes that implicit assignment. An agent operating under a service account doesn't have a named human whose judgment it reflects. The audit trail shows the action. The accountability register — if it exists — shows the model, the service account, the timestamp. What it doesn't show is the human whose job it was to review whether that class of decision should have been made autonomously at all.

Mehta is direct about where the liability sits when this goes wrong: accountability "remains firmly with the enterprise deploying the agent — specifically with those who designed the operating model, defined what the agent could do, determined what guardrails to implement, and established the governance framework." The legal answer is clear. The organizational answer — who specifically is that person, what is their title, what is their documented scope — is almost never established before deployment. It gets established after the first incident, which is the worst possible time to do it.

Pre-Failure Signal: Ownership Ambiguity

This signal doesn't fire an alert. It exists as an absence — no named owner in the model registry, no accountability assignment in the governance documentation, no response SLA for the class of decisions the agent is making. It's invisible until the incident makes it visible, at which point the damage is already done. The governance check that catches it is simple: for every agent workflow in production, can you name the specific human accountable for reviewing its decisions? If the answer requires a conversation to determine, the signal is present.
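The ownership check described above can be run mechanically against whatever inventory of agent workflows you already keep. The sketch below assumes a hypothetical inventory format (a list of dictionaries with `name`, `owner`, and `response_sla_hours` fields); none of these field names come from a specific platform.

```python
def workflows_missing_owner(workflows):
    """Return names of workflows that lack a named owner or a response SLA."""
    missing = []
    for wf in workflows:
        owner = (wf.get("owner") or "").strip()
        sla = wf.get("response_sla_hours")
        if not owner or sla is None:
            missing.append(wf["name"])
    return missing


# Hypothetical inventory, for illustration only
production_workflows = [
    {"name": "invoice-posting", "owner": "A. Chen", "response_sla_hours": 4},
    {"name": "case-escalation", "owner": "", "response_sla_hours": 24},
    {"name": "config-rollout", "owner": "R. Patel"},
]

flagged = workflows_missing_owner(production_workflows)
```

Any workflow that appears in `flagged` is one where the accountable human cannot be read straight from the record, which is the condition the signal describes.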

Platforms with decision ownership and authorization scope management

Ask This in the Procurement Conversation

"Show me how your platform assigns a named human owner to a specific class of agent decisions — and how it tracks whether that owner reviewed what the platform surfaced, within what timeframe, and what the documented consequence is if they don't."

Monitoring Signals With No Response SLA

Pre-Failure Signal: Signal-to-Incident Collapse · Monitoring Layer

The Splunk number in this piece's stats strip — 73% of organizations experienced outages linked to ignored or suppressed alerts — isn't a monitoring platform failure. The platforms were doing their job. They surfaced the signals. The outages happened because nobody was organizationally required to act on them within a defined timeframe. That distinction matters more for agentic AI than for any previous generation of enterprise systems, because an agent doesn't pause its execution while a human gets around to reviewing a dashboard.

A monitoring program without response SLAs is, as we've said elsewhere on this site, a dashboard. It produces information with no accountability mechanism for what happens to that information. The signal categories that production monitoring tracks — drift, hallucination rate, output quality degradation, pipeline health, behavioral anomalies — are only governance-relevant if a named human has a documented obligation to act on them within a defined window. Without that obligation, the signal fires, gets triaged by whoever happens to be watching, and either gets investigated or doesn't based on circumstances that have nothing to do with the governance program.
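To make the idea of a documented response window concrete, here is a small sketch of an SLA breach check. It assumes each signal record carries a firing time, an SLA in hours, and an acknowledgement time that is `None` while the signal is unreviewed; the record shape and field names are hypothetical.

```python
from datetime import datetime, timedelta


def sla_breaches(signals, now):
    """Return ids of signals acknowledged late, or still unacknowledged past deadline."""
    breached = []
    for s in signals:
        deadline = s["fired_at"] + timedelta(hours=s["sla_hours"])
        acknowledged_at = s.get("acknowledged_at")
        if acknowledged_at is None:
            late = now > deadline          # nobody has looked at it yet
        else:
            late = acknowledged_at > deadline
        if late:
            breached.append(s["id"])
    return breached


# Hypothetical signal records, for illustration only
fired = datetime(2026, 5, 1, 9, 0)
signals = [
    {"id": "sig-1", "fired_at": fired, "sla_hours": 4,
     "acknowledged_at": datetime(2026, 5, 1, 10, 0)},
    {"id": "sig-2", "fired_at": fired, "sla_hours": 4,
     "acknowledged_at": datetime(2026, 5, 1, 15, 0)},
    {"id": "sig-3", "fired_at": fired, "sla_hours": 4, "acknowledged_at": None},
]

breached = sla_breaches(signals, now=datetime(2026, 5, 1, 14, 0))
```

The check itself is trivial. What it needs from the organization is the part no code supplies: an agreed `sla_hours` per signal category and a named person to escalate to when the list is not empty.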

Mehta names this specifically among the hidden costs organizations consistently underestimate before deployment: "analysts and SMEs spending time on escalations, approvals, and post-incident reviews." The cost he's pointing at isn't just headcount. It's the organizational design that defines who those analysts are, what they're specifically accountable for reviewing, how quickly, and what triggers escalation when the SLA is missed. That design doesn't come from buying a monitoring platform. It has to be built deliberately, before agents go live, by someone with the authority to assign accountability across teams that don't naturally want to own it.

Pre-Failure Signal: Signal-to-Incident Collapse

This is one of the most predictable pre-failure patterns in the PSI framework: the monitoring platform is generating alerts, the alerts are being acknowledged or suppressed, and the acknowledgement rate has decoupled from the investigation rate. Alerts are being closed without producing governance actions. The metric to track is the ratio of investigations opened to alerts fired. When that ratio drops below a threshold specific to your environment, the monitoring program has effectively stopped producing governance outcomes, even though the dashboard looks operational.
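As a rough illustration of the metric, the sketch below computes investigations opened per alert fired, so a lower value means fewer alerts are turning into investigations. The function names and the default threshold of 0.2 are assumptions for the example; the right threshold depends on your environment.

```python
def investigation_ratio(alerts_fired: int, investigations_opened: int) -> float:
    """Investigations opened per alert fired over the same reporting window."""
    if alerts_fired == 0:
        return 1.0  # no alerts in the window, so nothing was left uninvestigated
    return investigations_opened / alerts_fired


def signal_collapse(alerts_fired: int, investigations_opened: int,
                    threshold: float = 0.2) -> bool:
    """True when the ratio has fallen below the (hypothetical) threshold."""
    return investigation_ratio(alerts_fired, investigations_opened) < threshold
```

For example, 200 alerts with 10 investigations gives a ratio of 0.05 and trips the check, while 200 alerts with 80 investigations gives 0.4 and does not.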

Platforms with signal routing, ownership assignment, and response SLA tracking

Ask This in the Procurement Conversation

"When your platform surfaces a behavioral anomaly from an agent workflow, walk me through the specific path that signal takes — who it routes to, how that owner is notified, what the documented SLA is for acknowledgement and investigation, and what the platform does when that SLA is missed."

Agent Identity and Credential Chains With No Governance

Pre-Failure Signal: Permission Creep Drift · Security Layer

Mehta calls out identity, access, and credential hygiene as one of the most consistently overlooked operational costs in agentic deployments: "issuing least-privilege roles, rotation, secrets management, and continuous entitlement reviews." What makes this acutely dangerous for agentic AI specifically is the on-behalf-of token chain — the sequence of delegated credentials through which an agent authenticates to downstream systems, often operating under a service account with permission scopes that were defined for a different purpose by a different person months ago.

The Permission Creep Drift pattern is how this compounds over time. A new agent workflow needs access to a production database. The service account already has filesystem access from a previous project. Nobody revokes the old scope because nobody owns the entitlement review for that service account. Six months later the agent workflow operating under that account has an effective permission set that spans three systems with no documented business justification for the aggregate scope. No single grant looks wrong. The full picture is a governance failure that standard identity tooling never surfaces because it was designed to review individual grants, not cumulative agent credential chains.

The AI security controls framework addresses this at the technical layer — access graph visibility, per-tool authorization enforcement, continuous entitlement review. The governance failure underneath it is organizational: nobody was assigned to own entitlement reviews for agent identities as a distinct category from human identity governance. That assignment has to be explicit, documented, and attached to a specific review cadence. It doesn't happen by default, and no platform creates it for you.

Pre-Failure Signal: Permission Creep Drift

The signal that tracks this gap is the delta between an agent's documented authorization scope and its effective permission set across all systems it touches. When that delta is growing — when new OAuth grants, service account expansions, or MCP server access additions are being added faster than entitlement reviews are clearing them — the drift is accelerating. The governance check: run a full credential chain audit for your five most active agent workflows and compare the effective permissions against the documented business justification for each scope. The gap you find is your Permission Creep Drift exposure.
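The delta described above is, at its simplest, a set difference between what an agent can do and what it was documented as needing to do. The sketch below assumes permissions can be flattened into `system:scope` strings; that encoding, the function names, and the example grants are hypothetical.

```python
def effective_permissions(grants_by_system):
    """Flatten per-system grants into a single set of 'system:scope' strings."""
    return {
        f"{system}:{scope}"
        for system, scopes in grants_by_system.items()
        for scope in scopes
    }


def permission_drift(documented, effective):
    """Permissions the agent holds that have no documented justification."""
    return effective - documented


# Hypothetical example: a leftover filesystem grant from an earlier project
documented_scope = {"crm:read_accounts", "erp:post_invoice"}
grants = {
    "crm": {"read_accounts"},
    "erp": {"post_invoice"},
    "filesystem": {"read", "write"},
}

drift = sorted(permission_drift(documented_scope, effective_permissions(grants)))
```

Tracking the size of `drift` per agent over successive reviews gives a simple trend line: if it grows between reviews, grants are being added faster than they are being justified or revoked.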

Platforms addressing agent identity governance and credential chain visibility

Ask This in the Procurement Conversation

"Map the full credential chain for one of our agent workflows — from the initiating service account through every downstream system it touches. Show me how your platform identifies when that chain's aggregate permissions exceed what the workflow's documented business justification requires, and how it flags additions to that chain without a corresponding review."

Multi-System Orchestration With Split Ownership

Pre-Failure Signal: Cross-Layer Convergence · All Four Layers

Agentic AI forces an accountability question that most enterprise organizational structures haven't been designed to answer: when an agent workflow spans multiple enterprise systems — ITSM, ERP, CRM, a production database — and produces a harmful outcome, who owns the full chain? In practice, three different teams each own one piece of it. The ITSM team owns the ticketing integration. Finance owns the ERP connection. Sales operations owns the CRM workflow. Nobody owns the agent workflow that executes across all three in a single transaction.

This is where Cross-Layer Convergence gets dangerous. The Pre-Failure Signal framework defines Cross-Layer Convergence as the simultaneous presence of governance, security, monitoring, and compliance signals that individually appear manageable but together indicate imminent failure. A multi-system agent workflow without a single named owner generates exactly this pattern: governance signals fire about policy coverage gaps, security signals fire about credential scope, monitoring signals fire about behavioral drift, and compliance signals fire about evidence chain incompleteness — all at the same time, routing to different teams, with nobody seeing the full picture.

The governance platform capability breakdown identifies lifecycle accountability — governance that follows the system across deployment rather than ending at approval — as one of the defining characteristics that separates Level 3 platforms from their predecessors. But lifecycle accountability across a multi-system agent workflow requires an organizational assignment that no platform can make: one named human accountable for the full chain, with the cross-functional authority to act when any component of it misbehaves.

Pre-Failure Signal: Cross-Layer Convergence

The Red Code Signal — the most severe pattern in the PSI framework — is defined as simultaneous signals across three or more Control Layers in the same system or workflow. Multi-system agent workflows without unified ownership are structurally positioned to generate this pattern, because the ownership gaps at each layer produce independent signal streams that never get correlated. The governance check: for your five most complex agent workflows, identify whether there is a single human accountable for cross-layer signal correlation. If that role doesn't exist by name, the Red Code condition is dormant, waiting for conditions to converge.
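The correlation step is what usually goes missing, so here is a minimal sketch of it. It assumes signals have already been collected for the same time window and reduced to `(workflow, layer)` pairs, which is a simplification; the layer names follow the four layers named in this piece, and everything else is hypothetical.

```python
from collections import defaultdict

CONTROL_LAYERS = {"governance", "security", "monitoring", "compliance"}


def red_code_workflows(signals, min_layers=3):
    """Return workflows with active signals in at least `min_layers` distinct layers."""
    layers_by_workflow = defaultdict(set)
    for workflow, layer in signals:
        if layer in CONTROL_LAYERS:
            layers_by_workflow[workflow].add(layer)
    return sorted(
        wf for wf, layers in layers_by_workflow.items() if len(layers) >= min_layers
    )


# Hypothetical signals from one time window
window_signals = [
    ("order-to-cash", "governance"),
    ("order-to-cash", "security"),
    ("order-to-cash", "monitoring"),
    ("ticket-triage", "monitoring"),
    ("ticket-triage", "monitoring"),
]

red_code = red_code_workflows(window_signals)
```

Note that repeated signals from a single layer do not count toward the condition. The pattern is about breadth across layers, which is why it stays invisible when each layer's signals route to a different team.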

Platforms with cross-system workflow governance and lifecycle accountability

Ask This in the Procurement Conversation

"When an agent workflow spans three enterprise systems with three different team owners and produces a harmful outcome — who does your platform route the incident to? Show me how it assigns accountability for the cross-system outcome, not just the individual system events."

No Incident Playbook for Agent-Originated Events

Pre-Failure Signal: Escalation Path Absence · Compliance Layer

Standard enterprise incident response playbooks cover human error, system failure, and security breach. Almost none of them cover the scenario where an agent did exactly what it was configured to do — within its documented authorization scope, with no technical malfunction detected — and the outcome was still harmful. That scenario is categorically different from every previous incident type because the standard diagnostic question ("what went wrong?") has a technically accurate answer that doesn't resolve the governance question: the agent worked correctly. The problem was in the authorization design, the scope definition, or the boundary conditions that nobody specified clearly enough.

Mehta calls out escalation and exception handling as one of the consistent hidden costs that organizations systematically underestimate before agentic deployment. The reason they underestimate it is precise: they haven't written the playbook, so they don't know how many people and how many decisions the playbook would require. The discovery happens post-incident, when the team attempts to reconstruct a response process in real time using documentation that was designed for a different class of event.

The compliance dimension compounds this significantly. The regulatory frameworks now requiring AI incident documentation (EU AI Act Article 73, NIST AI RMF's GOVERN and MANAGE functions, ISO/IEC 42001's continual improvement requirements) assume an incident response process exists that can produce evidence of what the organization knew, when it knew it, what it did in response, and within what timeframe. An agent-originated incident with no playbook produces an evidence chain gap that auditors are specifically trained to find, because it's the gap between "we had governance documentation" and "we had governance operations."

Pre-Failure Signal: Escalation Path Absence

The governance check for this gap is direct: pull your current AI incident response playbook and search it for the word "agent." If the playbook doesn't distinguish between human-initiated incidents and agent-originated incidents, it wasn't written for the systems you're currently running. The absence of that distinction is the signal. It means your incident response capability was designed for the generation of AI deployment that preceded your current one, and the organizational design hasn't kept pace with the technology deployment.
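If the playbook lives in a text or markdown file, the search can be scripted. The sketch below is a first-pass check only: it assumes the playbook text has already been loaded into a string, and it matches "agent", "agents", and "agentic" as whole words. A match does not prove the playbook handles agent-originated incidents; a miss is the signal.

```python
import re

# Whole-word match so that words like "reagent" are not counted
AGENT_PATTERN = re.compile(r"\bagent(s|ic)?\b", re.IGNORECASE)


def mentions_agents(playbook_text: str) -> bool:
    """True if the playbook text mentions agents at all."""
    return AGENT_PATTERN.search(playbook_text) is not None


legacy_playbook = "Procedures for human error, system failure, and security breach."
updated_playbook = "Section 7: Agent-originated events and escalation paths."
```

Here `mentions_agents(legacy_playbook)` is false and `mentions_agents(updated_playbook)` is true.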

Platforms with AI incident response and exception escalation workflows

Ask This in the Procurement Conversation

"Walk me through exactly what your platform does when an agent takes an action within its authorized scope that produces a harmful outcome — who gets notified, in what form, within what SLA, what evidence does your platform generate for the incident record, and how does that evidence map to EU AI Act Article 73 reporting requirements?"

Where Most Enterprises Are Right Now

The compliance theater piece mapped three levels of platform maturity — documentation only, monitoring only, integrated governance plus monitoring. The table below extends that framework one stage further. It assumes you've crossed Level 3. It describes what still needs to be built on the organizational side before the platform investment produces reliable governance at agentic scale.

Most enterprises deploying agents seriously in 2026 sit somewhere between Stage 2 and Stage 3. The tooling is right. The operating model isn't built yet. That gap between platform capability and organizational readiness is where every one of the five gaps above lives.

| Stage | What You Have | What's Still Missing | How It Fails |
|---|---|---|---|
| Stage 1 | Documentation, approval workflows, policy records | Production visibility, real behavior observation, any connection to running models | Models drift after deployment. Nobody sees it. Compliance theater. |
| Stage 2 | Integrated governance platform connected to production. Real monitoring. Real audit evidence. | Named signal owners. Decision registers. Response SLAs. Agent IR playbooks. Cross-system accountability assignments. | Agents run. Signals fire. The room goes quiet when something breaks. Platform saw it. Nobody owned it. |
| Stage 3 | Platform plus operating model: named owners, decision registers, response SLAs, cross-system accountability, agent-specific IR playbooks, entitlement review cadence for agent credentials | None | Governance produces accountable outcomes, not just observable ones. |

What This Framework Doesn't Cover

The five gaps above are calibrated to organizations that have crossed into meaningful agentic deployment: agents running autonomously across production systems at a volume and decision speed that human review can't cover individually. If your organization is still in pilot, running agents in narrow, easily reversible, low-stakes workflows, the operating model urgency is lower. Build the structure before you scale, not after. But the immediate pressure described in each gap reflects production agentic deployment at enterprise scale. Pilots don't generate the same exposure.

This framework also doesn't substitute for platform evaluation. The vendor chips in each gap section identify which platforms address that specific operational failure — but which of those platforms fits your environment, your regulatory obligations, and your existing stack requires structured interrogation. The vendor interview guide is built for exactly that process. The procurement questions in each section above are starting points. The full evaluation takes longer and goes deeper than any single question can reach.

One more honest limitation: some of the organizational changes described here — assigning cross-functional accountability for multi-system agent workflows, building agent-specific incident playbooks, running entitlement reviews for non-human identities — require internal political work that no platform accelerates. A vendor can show you where the gap is. Closing it requires someone with organizational authority deciding to close it. That decision has to come from inside.

Our Take

AI Governance Take

The compliance theater piece ended with a challenge: ask what happens after deployment, not just what the platform shows during the demo. The organizations that took that seriously bought the right platforms. Integrated governance. Production monitoring. Real audit evidence from real system behavior. That was the right answer to that challenge, and it was hard-won in a lot of organizations where the documentation-only tools had decades of internal momentum.

This piece is asking the next question. The platform is running. It's watching every agent workflow, capturing every decision, generating an audit trail from every action. Now: who is accountable for what it sees? Who owns the decision the agent just made? Who receives the behavioral anomaly alert, within what SLA, with what documented obligation to act? Who is named on the incident record when an agent-originated event requires a regulatory response under EU AI Act Article 73? Who audited the credential chain that agent is operating under, and when does that review happen again?

Those questions don't have platform answers. They have organizational design answers. The enterprises building those accountability structures before an agent-originated incident forces the conversation are the ones that won't spend six months retroactively assigning ownership, rebuilding incident playbooks, and explaining to a regulator why the audit trail shows the monitoring platform saw the problem before the humans did. That gap between what the platform observed and what the organization did about it is exactly where regulatory scrutiny lands. The window to close it on your terms is still open.

Browse the 2026 AI governance platform guide for a full structured comparison across all maturity levels — or submit an inquiry and GAIG will match you with platforms evaluated specifically for agentic deployment environments and the organizational gaps they're designed to close.