
Key Numbers

46 companies building conformant or aligned AARM systems at specification launch (AARM Spec v1.0)

14 Technical Working Group members from Vanta, Elastic, Truist, Darktrace, IEEE, and others (AARM Spec v1.0)

11 specific attack vectors in the AARM threat model for AI-driven actions (AARM Spec v1.0)

5 authorization outcomes required: ALLOW, DENY, MODIFY, STEP_UP, DEFER (AARM Conformance R4)

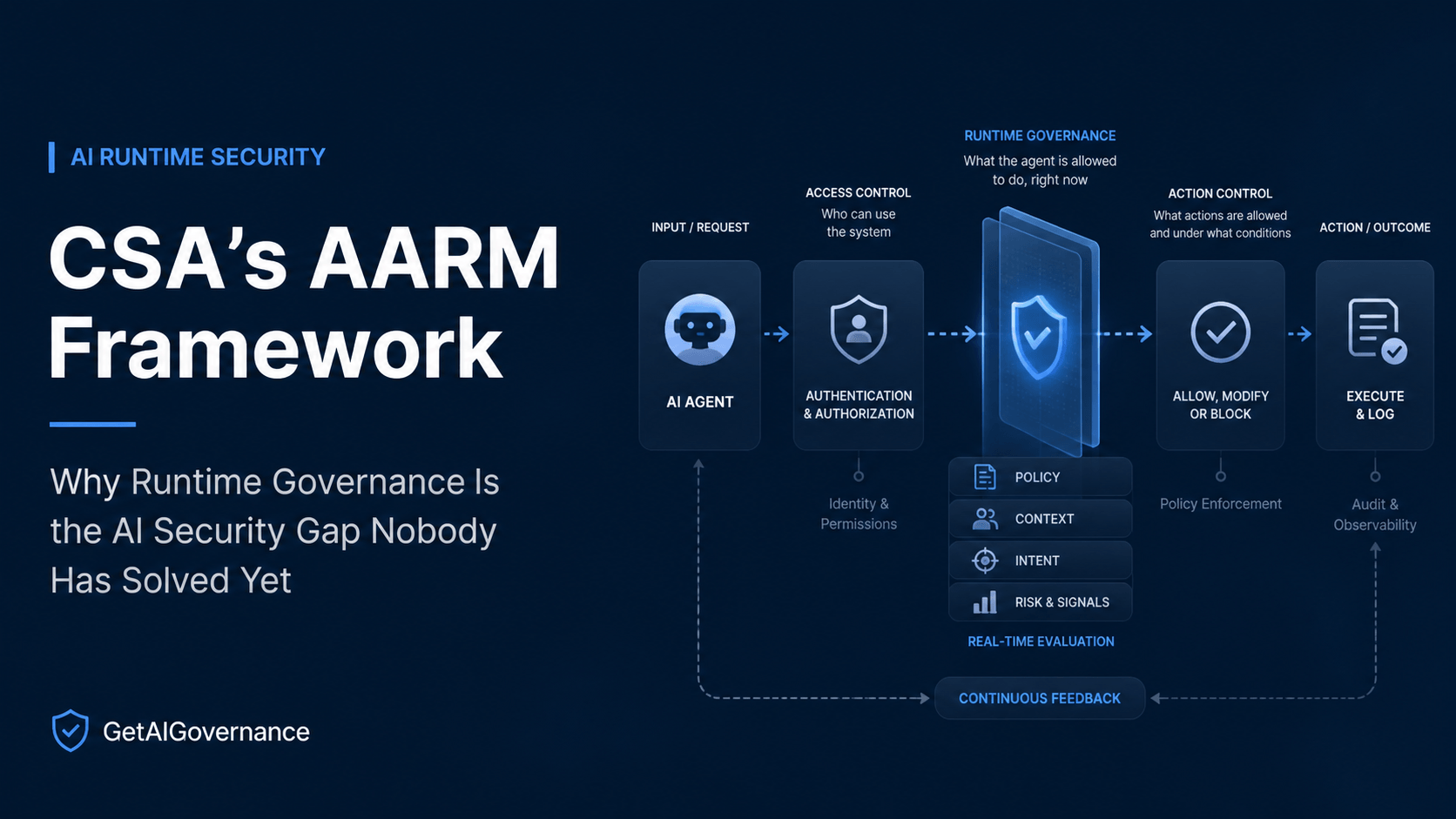

GAIG has covered agentic AI security from multiple angles — the Pillar Security framework for securing the agentic workforce, the OWASP Agentic Top 10 inside LatticeFlow's Atlas registry, Databricks' on-behalf-of token passing architecture. Each of those pieces touched on what it means to govern an autonomous agent. But none of them addressed the most fundamental question directly: how do you govern what an agent is doing in real time, at the moment it is doing it, before the action completes?

That question is what AARM — Autonomous Action Runtime Management — is built to answer. On April 30, 2026, Jim Reavis, CEO and co-founder of the Cloud Security Alliance, published the announcement that AARM is becoming part of CSA's research portfolio, transitioning from a framework originated by Herman Errico of Vanta into a vendor-neutral, industry-wide specification. This is GAIG's first dedicated coverage of AARM as a framework and as a category. It has been underreported and underanalyzed relative to its significance, and that is going to change quickly as enterprises discover that their existing security architectures cannot answer the runtime governance question for autonomous agents.

The reason AARM matters enough to cover in depth is not just that it exists. It is that it names a specific gap in enterprise AI security that nobody else has formally defined at the architectural level — and that 46 companies are already building against the specification, with a 14-member Technical Working Group from Vanta, Ballistic Ventures, Elastic, Truist, Gusto, Darktrace, and IEEE shaping its evolution. This is not an academic framework. It is a live specification with real implementation momentum.

"We are not simply watching AI improve. We are watching a new operational layer in computing emerge in front of us."

— Jim Reavis, CEO & Co-founder, Cloud Security Alliance

Why Existing Security Models Fail for Agentic AI

Jim Reavis describes the security challenge in the CSA post as "two exponentials" — the growth in model capability and the viral adoption of agents. On their own, each is significant. Where they intersect, you get a security problem that does not fit any existing framework because the frameworks were built for deterministic software operated by human users, not for autonomous systems making real-time decisions across enterprise environments.

The AARM specification at aarm.dev names five specific characteristics of AI-driven actions that existing security tools are structurally unable to address:

| Characteristic | Why Existing Tools Fail |
|---|---|
| Irreversibility | Tool executions produce permanent effects. Once a database record is deleted, data is exfiltrated, or an email is sent, the damage is done. SIEM logs the event after. Nothing stops it before. |
| Speed | Agents execute hundreds of actions per minute, far beyond any human review capacity. Security models built on human-time-scale review are structurally insufficient at agent execution speed. |
| Compositional Risk | Individual actions may satisfy policy while their composition constitutes a breach. An agent that reads an internal document (permitted), then queries an external API (permitted), then sends a summary to an external address (permitted in isolation) may have just exfiltrated proprietary information through three individually clean actions. |
| Untrusted Orchestration | Prompt injection and indirect attacks mean the AI layer cannot be trusted as a security boundary. The agent's reasoning process is not observable to traditional security controls, so manipulation of that reasoning is invisible until the resulting action fires. |
| Privilege Amplification | Agents operate under static, high-privilege identities misaligned with least privilege. Small reasoning failures produce large-scale impact because the agent's effective permissions exceed what any single action requires. |
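To make the compositional-risk row concrete, here is a minimal Python sketch of the read, query, send sequence described above. The `Action` fields, the `PERMITTED_TOOLS` allow-list, and the session rule are all illustrative assumptions, not anything defined by the AARM specification. The point is only that a per-action check passes all three steps while a session-aware check catches the last one.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Action:
    tool: str                 # hypothetical tool name, e.g. "send_email"
    sensitive: bool = False   # did this action touch sensitive data?
    external: bool = False    # does this action send data outside the organization?

# Hypothetical allow-list: every tool here is permitted when judged in isolation.
PERMITTED_TOOLS = {"read_document", "query_api", "send_email"}

def allowed_in_isolation(action: Action) -> bool:
    """Per-action check, the kind IAM/RBAC-style controls perform."""
    return action.tool in PERMITTED_TOOLS

def allowed_in_session(history: list[Action], proposed: Action) -> bool:
    """Session-aware check: deny an external send once sensitive data was touched."""
    if not allowed_in_isolation(proposed):
        return False
    touched_sensitive = any(a.sensitive for a in history)
    return not (proposed.external and touched_sensitive)

history = [Action("read_document", sensitive=True), Action("query_api")]
send = Action("send_email", external=True)

print(all(allowed_in_isolation(a) for a in history + [send]))  # each step passes alone
print(allowed_in_session(history, send))                       # the composition does not
```

A real policy would be far richer than one boolean rule, but the shape is the same: the decision takes the session history as an input, not just the proposed action.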

Against these five characteristics, Reavis describes what existing security tooling actually covers — and the gap it leaves:

"The traditional security question of 'should this user have access?' is still necessary, but it is no longer sufficient when the 'user' is an autonomous system making real-time decisions across an enterprise environment. What matters is not only whether an agent has access, but whether a specific action is appropriate in a specific context, whether it aligns with the intended objective, whether its behavior remains within acceptable boundaries as the workflow unfolds, and whether we can intervene when it begins to drift."

Jim Reavis

CEO & Co-Founder, Cloud Security Alliance

That quote is the clearest statement of the AARM problem that exists. The existing security stack governs access. AARM governs action. Those are different problems requiring different architecture. Here is what the AARM specification says explicitly about why each major existing security control fails:

| Existing Control | Why It Fails for Agents |
|---|---|
| SIEM | Observes events after execution, too late to prevent harm. |
| API Gateways | Verify who is calling, not what the action means in context. |
| Firewalls | Protect perimeters, but agents operate inside with legitimate credentials. |
| Prompt Guardrails | Filter text, not actions, and are easily bypassed by indirect injection. |
| Human-in-the-Loop | Does not scale at agent execution speed, and can itself be exploited through trust exploitation. |
| IAM / RBAC | Evaluates permissions in isolation and cannot detect compositional threats across individually-permitted actions. |

What AARM Actually Is

AARM is a set of open rules for how security products should behave rather than a product you buy. Jim Reavis and the CSA are making it clear that they aren't trying to sell you a specific piece of software. They’ve published an open blueprint at aarm.dev and GitHub that defines what a runtime security system actually needs to do to be effective.

The formal definition is a mouthful, but in practice an AARM-compliant system sits in front of every AI-driven action and evaluates it before it executes. It looks at the history of the session (what the AI did five minutes ago and what data it just touched) to check that this new move aligns with your company’s rules. Then it makes a call: let it through, block it, modify it, escalate to a human for sign-off, or defer it until more context is available.

Every action, context, and decision is tied together in a receipt that can’t be faked, providing an instant replay for the auditors later. Reavis is pushing AARM because the CSA realizes the industry needs a single, open standard that everyone can agree on so we can actually start enforcing rules at the moment of execution.

"What we found compelling about AARM is that it starts with the action itself. Rather than looking only at the identity of the actor or the system being accessed, AARM asks how actions should be evaluated and governed at runtime across dimensions such as context, intent, policy, and behavior. That language maps well to the reality we are seeing."

Jim Reavis, CEO & Co-Founder, Cloud Security Alliance

46 companies building conformant or aligned runtime security systems against the AARM specification at launch.

The Technical Working Group includes security leaders from Vanta, Ballistic Ventures, Elastic, Truist, Gusto, Darktrace, and IEEE. Herman Errico of Vanta continues to chair the work as it transitions into the CSA portfolio.

Source: AARM Specification v1.0, aarm.dev

The living framework approach that Reavis describes is deliberate and honest about where the field sits. AARM is a structure for developing an answer collaboratively rather than a finished one. Reavis states this directly:

"It is also important to be clear that AARM is a work in progress, and that is not a weakness. The agentic runtime security space is moving too quickly for anyone to credibly claim that we already have a finished answer. New architectures, deployment models, tool interfaces, and failure modes are emerging constantly. A static framework created too early would almost certainly miss important lessons from the field."

Jim Reavis, CEO & Co-Founder, Cloud Security Alliance

The AARM Action Classification System: Four Categories

The most technically significant element of the AARM specification is the action classification system. AARM recognizes that security decisions are not binary — they are not simply allow or deny. Actions fall into four categories based on the combination of static policy and accumulated session context. This four-category model is what makes AARM structurally different from every prior access control paradigm.

Forbidden

Always Blocked

Hard policy limits defined by the organization. Blocked regardless of context, intent, or any other factor. Static policy enforcement — no reasoning required.

DROP DATABASE production

Send to known malicious domain

→ Static policy: DENY

Context-Dependent Deny

Allowed by Policy, Blocked by Context

The action is permitted by the organization's static policy, but the accumulated session context reveals the action is inconsistent with the user's stated intent — signaling potential manipulation or goal drift.

Agent can send emails — but just read sensitive data, recipient is external

→ Policy ALLOW + context mismatch: DENY

Context-Dependent Allow

Denied by Default, Allowed by Context

The action is denied by default policy, but the accumulated session context confirms it aligns precisely with what the user legitimately requested — preventing over-blocking of valid operations.

Agent wants to delete records; context shows user explicitly requested cleanup of their own test data

→ Policy DENY + context match: STEP_UP or ALLOW

Context-Dependent Defer

Suspended Pending More Context

The available context is insufficient, ambiguous, or conflicting for a confident decision in either direction. The action is temporarily suspended rather than forced into a binary allow/deny that would be unreliable.

Agent initiates credential rotation outside maintenance window; context is ambiguous

→ Policy indeterminate: DEFER
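The four categories above can be read as a small decision table over two inputs: the static policy verdict and a context signal. The Python sketch below is one possible reading, under stated assumptions: the enum names are hypothetical, and where the article lists "STEP_UP or ALLOW" for a default-deny action with matching context, the sketch picks the more conservative STEP_UP.

```python
from enum import Enum

class StaticPolicy(Enum):
    FORBIDDEN = "forbidden"   # hard organizational limit
    ALLOW = "allow"           # permitted by default
    DENY = "deny"             # denied by default

class ContextSignal(Enum):
    MATCH = "match"           # session context confirms the user asked for this
    MISMATCH = "mismatch"     # session context contradicts the stated intent
    AMBIGUOUS = "ambiguous"   # context is insufficient or conflicting

def classify(policy: StaticPolicy, context: ContextSignal) -> str:
    """Map (static policy, context signal) to an authorization outcome."""
    if policy is StaticPolicy.FORBIDDEN:
        return "DENY"                       # Forbidden: context is never consulted
    if context is ContextSignal.AMBIGUOUS:
        return "DEFER"                      # Context-dependent defer
    if policy is StaticPolicy.ALLOW:
        # Context-dependent deny when the session contradicts the intent
        return "ALLOW" if context is ContextSignal.MATCH else "DENY"
    # Default-deny policy: context-dependent allow, escalated for human approval
    # (assumption: STEP_UP rather than a direct ALLOW)
    return "STEP_UP" if context is ContextSignal.MATCH else "DENY"

print(classify(StaticPolicy.ALLOW, ContextSignal.MISMATCH))   # email after reading sensitive data
print(classify(StaticPolicy.DENY, ContextSignal.MATCH))       # user-requested test-data cleanup
```

How the context signal itself is computed is the hard part and is out of scope here; the sketch only shows that context sits alongside policy as an input to every decision.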

The governance significance of this four-category model is specific and important. Every prior security control operates on a binary: allow or deny. AARM's four-category model recognizes that binary decisions based on policy alone — without accumulated session context — produce two failure modes that harm both security and usability simultaneously. They allow dangerous actions that look innocent in isolation (context-dependent deny). And they block legitimate operations that look suspicious without context (context-dependent allow). AARM's architecture resolves both failure modes by making context a first-class input to every authorization decision, not an afterthought applied to exceptions.

How an AARM System Works: Five Core Functions and Seven Components

The AARM specification defines precisely what an AARM-compliant system must do at runtime: five core functions, seven system components, nine conformance requirements, and four implementation architectures. Here is the complete breakdown of how the system operates in sequence.

The Five Core Functions

AARM Runtime Execution — Five Sequential Functions

Intercept

Captures AI-driven actions before they reach target systems. This is the most fundamental requirement — pre-execution interception is what separates AARM from SIEM-based security that observes events after the fact. If an action reaches the target system before AARM intercepts it, the opportunity for governance has already passed. Interception must happen synchronously before any side-effectful tool execution.

R1: MUST — Pre-execution interception
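As a rough illustration of what synchronous pre-execution interception means in code, the sketch below wraps a tool function so that an authorization callback runs, and can refuse, before the tool body is ever entered. The decorator name, the `ActionDenied` exception, and the example rule are assumptions made for this sketch; the specification does not prescribe this shape.

```python
import functools
from typing import Any, Callable

class ActionDenied(Exception):
    """Raised when the authorization check refuses a proposed tool call."""

def intercepted(authorize: Callable[[str, dict], bool]):
    """Wrap a tool so `authorize` runs synchronously before any side effect."""
    def decorator(tool: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(tool)
        def wrapper(**kwargs: Any) -> Any:
            if not authorize(tool.__name__, kwargs):
                raise ActionDenied(f"{tool.__name__} blocked before execution")
            return tool(**kwargs)   # reached only after the check has passed
        return wrapper
    return decorator

executed: list[str] = []   # stands in for the target system's side effects

def deny_production_drops(tool_name: str, args: dict) -> bool:
    # Hypothetical static rule mirroring the article's "DROP DATABASE production" example
    return not (tool_name == "drop_database" and args.get("name") == "production")

@intercepted(deny_production_drops)
def drop_database(name: str) -> str:
    executed.append(name)
    return f"dropped {name}"

print(drop_database(name="scratch"))
try:
    drop_database(name="production")
except ActionDenied as err:
    print(err)
print(executed)   # the production drop never reached the tool body
```

The property to look for in any implementation is the same one visible here: the denied call leaves no trace in `executed`, because the check completed before the tool ran.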

Accumulate Context

Tracks session state in a tamper-evident, append-only, hash-chained log: the user's original request, all prior actions taken in this session, all data accessed, and all tool outputs received. This accumulated context is what makes context-dependent authorization decisions possible. Without it, every action is evaluated in isolation — which is exactly what makes compositional risk invisible to every prior security control.

R2: MUST — Context accumulation with prior actions and data classifications

Evaluate

Assesses the proposed action against both static policy and contextual intent alignment simultaneously. The static policy layer handles forbidden actions and default denials. The contextual intent layer evaluates whether the action aligns with what the user actually requested, given everything accumulated in the session so far. The specification also calls for semantic distance tracking (R7) — detecting intent drift by measuring how far the agent's proposed actions have diverged from the original user request using embedding similarity.

R3: MUST — Policy evaluation with intent alignment
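Semantic distance tracking can be sketched as one minus the cosine similarity between a representation of the original request and a representation of the proposed action. In the Python below, `embed` is a deliberately crude bag-of-words stand-in for a real embedding model, and `DRIFT_THRESHOLD` is an arbitrary illustrative value; both are assumptions for the sketch and neither comes from the specification.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase bag-of-words counts."""
    return Counter(text.lower().split())

def cosine_similarity(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def semantic_distance(request: str, action_description: str) -> float:
    """0.0 means identical wording; 1.0 means no overlap at all."""
    return 1.0 - cosine_similarity(embed(request), embed(action_description))

DRIFT_THRESHOLD = 0.8   # hypothetical cut-off; a real system would tune this

def has_drifted(request: str, action_description: str) -> bool:
    return semantic_distance(request, action_description) > DRIFT_THRESHOLD

request = "delete my test records"
print(has_drifted(request, "delete test records for user"))
print(has_drifted(request, "email customer list to external address"))
```

Word overlap is a poor proxy for meaning, so treat this purely as a picture of the mechanism: swap `embed` for a real embedding model and the rest of the arithmetic stays the same.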

Enforce

Implements one of five specific authorization outcomes: ALLOW (proceed as planned), DENY (block the action), MODIFY (alter the action to reduce risk while preserving intent), STEP_UP (require human approval before proceeding), or DEFER (temporarily suspend pending additional context). The five-outcome model is more operationally nuanced than binary allow/deny — it creates space for graduated responses proportional to the confidence level and risk level of each action.

R4: MUST — Five authorization decisions: ALLOW, DENY, MODIFY, STEP_UP, DEFER
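One way to picture the five-outcome model is as a dispatcher that takes the decision and routes the action accordingly. The sketch below is illustrative only: the `enforce` signature, the status strings, and the email helpers are hypothetical names invented for this example, and the MODIFY case shows just one possible modification (dropping external recipients).

```python
from enum import Enum
from typing import Any, Callable

class Decision(Enum):
    ALLOW = "ALLOW"
    DENY = "DENY"
    MODIFY = "MODIFY"
    STEP_UP = "STEP_UP"
    DEFER = "DEFER"

def enforce(decision: Decision, action: dict,
            execute: Callable[[dict], Any],
            modify: Callable[[dict], dict],
            approve: Callable[[dict], bool]) -> dict:
    """Apply one of the five outcomes to a proposed action."""
    if decision is Decision.ALLOW:
        return {"status": "executed", "result": execute(action)}
    if decision is Decision.MODIFY:
        # Run a reduced-risk variant of the action instead of the original
        return {"status": "executed_modified", "result": execute(modify(action))}
    if decision is Decision.STEP_UP:
        if approve(action):   # blocks until a human reviewer answers
            return {"status": "executed_after_approval", "result": execute(action)}
        return {"status": "blocked", "result": None}
    if decision is Decision.DEFER:
        return {"status": "deferred", "result": None}   # held, not executed
    return {"status": "blocked", "result": None}        # DENY

# Hypothetical tool and helpers for the example
def send_email(action: dict) -> list:
    return sorted(action["recipients"])

def strip_external(action: dict) -> dict:
    internal = [r for r in action["recipients"] if r.endswith("@corp.example")]
    return {**action, "recipients": internal}

def reviewer_rejects(action: dict) -> bool:
    return False

email = {"recipients": ["ana@corp.example", "bob@other.example"]}
print(enforce(Decision.MODIFY, email, send_email, strip_external, reviewer_rejects))
```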

Record

Generates tamper-evident, cryptographically signed receipts that bind the action, its full session context, the authorization decision made, and the outcome achieved into a single forensically reconstructable record. This is the audit trail that compliance teams need: not just what the agent did, but what context it was acting in, what decision the security system made, and what happened as a result. This is the human response trail that GAIG's monitoring analysis has documented as the missing layer in most enterprise AI audit infrastructure.

R5: MUST — Tamper-evident receipts with cryptographic signing

The Seven System Components

An AARM-conformant system implements seven specific components, each responsible for a distinct function within the runtime governance architecture:

AARM System Components — What Each One Does

Action Mediation Layer

The interception point. Intercepts tool invocations from the AI agent and normalizes them to a canonical schema before they reach the target system. Every tool call — database query, API call, file write, external service request — passes through this layer. The mediation layer is what makes pre-execution interception physically possible. It sits between the agent and every system the agent can interact with.
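Normalizing to a canonical schema means that a SQL statement and an HTTP request end up as the same kind of object before policy ever sees them. The sketch below assumes two made-up raw tool-call shapes and a made-up `CanonicalAction` with four fields; the specification's actual schema is not reproduced here.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

@dataclass(frozen=True)
class CanonicalAction:
    tool: str          # which kind of tool was invoked
    operation: str     # verb, e.g. "DELETE" or "POST"
    target: str        # what the action operates on
    parameters: dict   # remaining raw details, kept for the audit record

def normalize(raw: dict) -> CanonicalAction:
    """Map two hypothetical raw tool-call shapes onto one schema."""
    tool = raw.get("tool")
    if tool == "sql":
        query = raw["query"].strip()
        verb = query.split()[0].upper()          # first keyword of the statement
        return CanonicalAction("sql", verb, raw.get("database", "default"), {"query": query})
    if tool == "http":
        host = urlparse(raw["url"]).hostname or ""
        return CanonicalAction("http", raw["method"].upper(), host, {"url": raw["url"]})
    raise ValueError(f"unrecognized tool call shape: {tool!r}")

print(normalize({"tool": "sql", "query": "delete from users where id = 1", "database": "crm"}))
print(normalize({"tool": "http", "method": "post", "url": "https://api.example.com/v1/items"}))
```

Rejecting unrecognized shapes outright, as the `ValueError` does here, is a design choice worth noting: a mediation layer that silently passes through calls it cannot parse is no longer mediating them.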

Context Accumulator

Tracks session state in an append-only, hash-chained log. Every action, every tool output, every piece of data the agent accessed, every step in the session is added to this log in sequence. The hash-chaining ensures tamper evidence — any modification to a historical entry breaks the chain and is detectable. This component is the memory that makes context-dependent authorization decisions possible.
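The hash-chaining idea is easy to show in a few lines. In this Python sketch, each entry's hash covers the previous entry's hash plus the serialized event, so editing any historical entry invalidates everything after it. The `ContextLog` class, the entry layout, and the all-zero genesis value are assumptions for illustration, using only the standard library's `hashlib` and `json`.

```python
import hashlib
import json

GENESIS = "0" * 64   # assumed placeholder hash for the first entry's predecessor

def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)   # stable serialization
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

class ContextLog:
    """Append-only session log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, event: dict) -> str:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {"event": dict(event), "prev": prev, "hash": _digest(prev, event)}
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; any edited entry breaks it from that point on."""
        prev = GENESIS
        for entry in self._entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True

log = ContextLog()
log.append({"type": "user_request", "text": "clean up my test data"})
log.append({"type": "tool_call", "tool": "delete_records"})
print(log.verify())
```

Note that a hash chain alone makes tampering detectable, not impossible: someone who can rewrite the whole log can recompute every hash, which is one reason the receipts described later are also signed.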

Policy Engine

Evaluates each proposed action against static organizational policy and contextual intent alignment simultaneously. Takes the intercepted action and the accumulated context as inputs, applies the four-category classification framework, and produces the authorization decision. The policy engine is where the distinction between access control (identity and permission-based) and action governance (context and intent-based) becomes operationally real.

Approval Service

Handles STEP_UP decisions — cases where the policy engine determines that human review is required before the action proceeds. The approval service manages the workflow of getting human confirmation, presenting the proposed action and its context to the reviewer in a form that allows a genuine informed decision, waiting for the response, and then executing or blocking based on the outcome. This is the human-in-the-loop mechanism at the action level rather than the session level.

Deferral Service

Manages DEFER decisions — actions that cannot be confidently resolved into allow, deny, or step-up with the current available context. The deferral service holds the action temporarily, collects additional context through progressive inquiry, and re-evaluates when sufficient context has accumulated. This prevents the failure mode of forcing binary decisions under uncertainty — which produces either over-blocking of legitimate operations or over-permitting of ambiguous ones.
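A deferral service can be pictured as a holding area keyed by action, re-running the evaluation each time new context arrives. The following is a minimal sketch under assumptions: the `DeferralService` API, the string decisions, and the change-ticket rule for credential rotation are all invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class DeferredAction:
    action: dict
    context: dict = field(default_factory=dict)

class DeferralService:
    """Holds deferred actions and re-evaluates them as context accumulates."""

    def __init__(self, evaluate: Callable[[dict, dict], str]) -> None:
        self._evaluate = evaluate
        self._held: dict[str, DeferredAction] = {}

    def hold(self, action_id: str, action: dict, context: dict) -> None:
        self._held[action_id] = DeferredAction(action, dict(context))

    def add_context(self, action_id: str, **facts: str) -> str:
        item = self._held[action_id]
        item.context.update(facts)
        decision = self._evaluate(item.action, item.context)
        if decision != "DEFER":          # resolved: release the hold
            del self._held[action_id]
        return decision

    def pending(self) -> list[str]:
        return sorted(self._held)

# Hypothetical rule: rotation stays deferred until a change ticket status is known
def evaluate_rotation(action: dict, context: dict) -> str:
    ticket = context.get("change_ticket")
    if ticket is None:
        return "DEFER"
    return "ALLOW" if ticket == "approved" else "DENY"

service = DeferralService(evaluate_rotation)
service.hold("a1", {"tool": "rotate_credentials"}, {"window": "outside_maintenance"})
print(service.add_context("a1", requested_by="ana"))        # still not enough context
print(service.add_context("a1", change_ticket="approved"))  # now resolvable
```

A production version would also need a timeout so that an action cannot sit deferred forever; that is omitted here to keep the sketch short.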

Receipt Generator

Produces the cryptographically signed, tamper-evident records binding action, context, decision, and outcome. Each receipt carries identity binding that captures human, service, agent, session, and role information simultaneously. These receipts are the compliance evidence that regulators and auditors can verify: they prove that specific runtime governance decisions were made at specific points in specific agent sessions, with the full context available at decision time preserved and verifiable.
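To show what binding action, context, decision, outcome, and identity into one verifiable record might look like, here is a small Python sketch. It uses HMAC-SHA256 from the standard library as a stand-in for a real digital signature, which is an assumption made to keep the example dependency-free; a production system would more likely use asymmetric signatures so verifiers never hold the signing key. The field names and the `issue_receipt` / `verify_receipt` functions are hypothetical.

```python
import hashlib
import hmac
import json

# The five identity dimensions the article lists for identity binding
REQUIRED_IDENTITY_FIELDS = ("human", "service", "agent", "session", "role")

def _canonical(body: dict) -> bytes:
    """Stable byte encoding so signer and verifier hash the same thing."""
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")

def issue_receipt(key: bytes, *, action: dict, context_hash: str,
                  decision: str, outcome: str, identity: dict) -> dict:
    missing = [f for f in REQUIRED_IDENTITY_FIELDS if f not in identity]
    if missing:
        raise ValueError(f"partial identity binding, missing: {missing}")
    body = {"action": action, "context_hash": context_hash,
            "decision": decision, "outcome": outcome, "identity": identity}
    signature = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {**body, "signature": signature}

def verify_receipt(key: bytes, receipt: dict) -> bool:
    body = {k: v for k, v in receipt.items() if k != "signature"}
    expected = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, receipt.get("signature", ""))

key = b"demo-key-not-for-production"
identity = {"human": "ana", "service": "crm", "agent": "agent-7",
            "session": "s-123", "role": "support"}
receipt = issue_receipt(key, action={"tool": "send_email"}, context_hash="abc123",
                        decision="DENY", outcome="blocked", identity=identity)
print(verify_receipt(key, receipt))
print(verify_receipt(key, {**receipt, "decision": "ALLOW"}))   # edited after signing
```

The `context_hash` field is where a receipt would point back at the hash-chained session log, so that the receipt commits to the exact context the decision was made in.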

Telemetry Exporter

Exports structured events to SIEM and SOAR platforms for operational integration with existing security infrastructure. AARM does not replace SIEM — it provides SIEM with the pre-execution context and authorization decision data that SIEM has never had before. A SIEM receiving AARM telemetry sees not just what happened but what was proposed before it happened, what context was accumulated, and what governance decision was made. That is a qualitatively different signal than post-hoc event logs.
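As a rough picture of the difference between this and a post-hoc log line, the sketch below serializes a decision event that carries the proposed action, the decision, and a context summary together. The event layout, the `event_type` string, and the function name are assumptions for illustration; the article does not name a specific export format, so plain JSON lines are used here.

```python
import json
from datetime import datetime, timezone
from typing import Optional

def to_telemetry_event(action: dict, decision: str, context_summary: dict,
                       timestamp: Optional[str] = None) -> str:
    """Serialize one authorization decision as a single JSON line."""
    event = {
        "event_type": "aarm.authorization_decision",   # hypothetical event name
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "proposed_action": action,       # what the agent wanted to do
        "decision": decision,            # what the runtime decided
        "context": context_summary,      # why: the accumulated session signals
    }
    return json.dumps(event, sort_keys=True)

line = to_telemetry_event(
    {"tool": "send_email", "recipient_domain": "other.example"},
    "DENY",
    {"prior_actions": 2, "sensitive_data_touched": True},
    timestamp="2026-01-01T00:00:00+00:00",
)
print(line)
```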

The AARM Threat Model: Eleven Attack Vectors the Spec Addresses

The AARM specification documents eleven specific attack vectors against AI-driven actions. Understanding these is important for two reasons: they define the scope of what an AARM-compliant system must defend against, and they reveal the attack surface that every enterprise running AI agents has today without AARM-class governance in place.

Prompt Injection

Malicious instructions hijack agent behavior via direct or indirect injection — through user input, retrieved documents, or tool outputs that embed adversarial content.

Malicious Tool Outputs

Adversarial tool responses manipulate subsequent agent reasoning — the tool call itself is legitimate but the response carries instructions that redirect the agent's planning.

Confused Deputy

Agents misuse legitimate credentials under manipulation — acting on behalf of a legitimate identity to perform actions that identity would not have authorized explicitly.

Over-Privileged Credentials

Excessive token scopes amplify the blast radius of any compromise. Small reasoning failures produce large-scale impact when the agent carries more permission than any individual task requires.

Data Exfiltration

Compositional attacks extract sensitive data across individually permitted actions — each action passes access control review; their composition constitutes a breach that no individual review would catch.

Goal Hijacking

Injected objectives alter the agent's planning and prioritization at the goal level — the agent's fundamental objective is redirected rather than a single action being manipulated.

Intent Drift

Agent reasoning gradually diverges from user intent without any adversarial input. The agent's behavior drifts through accumulated misinterpretations or compounding errors in context understanding.

Memory Poisoning

Persistent context manipulation corrupts future decision-making — adversarial content seeded into agent memory influences reasoning across sessions and resists detection once embedded.

Cross-Agent Propagation

Compromised agents escalate or delegate through multi-agent workflows — one compromised agent becomes the vector for compromise across the full agent network it participates in.

Side-Channel Leakage

Sensitive data leaks through logs, debug traces, or API metadata — not through the primary data access path but through the operational signals that surround it.

Environmental Manipulation

Adversaries alter system state to influence agent decisions — changing the environment the agent observes rather than directly manipulating the agent's inputs or reasoning.

The Nine Conformance Requirements

The AARM specification defines nine conformance requirements across two tiers. AARM Core (R1 through R6) covers baseline runtime security guarantees. AARM Extended (R1 through R9) covers comprehensive runtime security with operational maturity features. Any product or implementation claiming AARM conformance should be evaluated against these requirements specifically — not against general descriptions of runtime security capability.

| ID | Tier | Requirement | What It Means in Practice |
|---|---|---|---|
| R1 | MUST | Pre-execution interception | Block or defer actions before execution. If an action reaches its target before the system evaluates it, the system is not AARM-conformant regardless of what it logs afterward. |
| R2 | MUST | Context accumulation | Track prior actions, data classifications, and the original user request in a tamper-evident session log. Every authorization decision must have access to accumulated session context, not just the current action. |
| R3 | MUST | Policy evaluation with intent alignment | Evaluate actions against both static policy (forbidden, default deny/allow) and contextual intent alignment simultaneously. Policy alone is not sufficient. |
| R4 | MUST | Five authorization decisions | The system must support all five outcomes: ALLOW, DENY, MODIFY, STEP_UP, and DEFER. Binary allow/deny is not AARM-conformant. MODIFY and DEFER are required capabilities. |
| R5 | MUST | Tamper-evident receipts | Cryptographically signed records with full context (action, session context, decision, and outcome) bound together and verifiable. Not a log. A signed receipt. |
| R6 | MUST | Identity binding | Every receipt must bind human identity, service identity, agent identity, session identity, and role/privilege scope simultaneously. Partial identity binding does not satisfy this requirement. |
| R7 | SHOULD | Semantic distance tracking | Detect intent drift by measuring how far the agent's proposed actions have diverged from the original user request using embedding similarity. This catches gradual behavioral drift before it produces a harmful action. |
| R8 | SHOULD | Telemetry export | Structured events to SIEM and SOAR platforms in standard formats. AARM does not operate in isolation from existing security infrastructure; it feeds pre-execution intelligence into operational security workflows. |
| R9 | SHOULD | Least privilege enforcement | Scoped, just-in-time credentials per agent session that minimize privilege scope to what the specific task requires. Prevents privilege amplification at the root level rather than detecting it after it has been exploited. |
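For teams tracking vendor evaluations, the two tiers described above reduce to simple set arithmetic over the requirement IDs. This is a small bookkeeping sketch, not a conformance test: the function names are hypothetical, and deciding whether a vendor actually satisfies a given requirement is the hard part that no snippet can do for you.

```python
# Requirement IDs per tier, as described in the article
CORE = {"R1", "R2", "R3", "R4", "R5", "R6"}
EXTENDED = CORE | {"R7", "R8", "R9"}

def conformance_tier(satisfied: set[str]) -> str:
    """Return the highest tier whose requirements are all satisfied."""
    if EXTENDED <= satisfied:
        return "AARM Extended"
    if CORE <= satisfied:
        return "AARM Core"
    return "Not conformant"

def missing_for_core(satisfied: set[str]) -> list[str]:
    """List the Core requirements a vendor has not yet demonstrated."""
    return sorted(CORE - satisfied)

vendor = {"R1", "R2", "R3", "R5", "R6", "R8"}   # hypothetical evaluation result
print(conformance_tier(vendor))
print(missing_for_core(vendor))
```

In this hypothetical result the vendor exports telemetry (R8) but supports fewer than five outcomes (R4), so it does not reach Core: SHOULD-level features do not compensate for a missing MUST.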

For governance and procurement teams evaluating vendors that claim AARM alignment or conformance, these nine requirements are the exact questions to bring to the evaluation call. The vendor interview guide GAIG published covers how to run these evaluations — ask vendors to demonstrate pre-execution interception live, show you what a tamper-evident receipt looks like with full identity binding, and walk through how their policy engine handles context-dependent deny versus context-dependent allow for the same base action.

The Four Implementation Architectures

The AARM specification does not prescribe a single implementation approach. It defines four architectures with distinct trade-offs across bypass resistance, context richness, deferral support, and whether each can satisfy AARM conformance alone. Organizations implementing AARM-compliant systems need to understand these trade-offs before selecting an approach.

| Architecture | What You Control | Bypass Resistance | Context Richness | Defer Support | AARM-Conformant Alone |
|---|---|---|---|---|---|
| Protocol Gateway | Network layer | High | Limited | Partial | Yes |
| SDK / Instrumentation | Code layer | Medium | Full | Full | Yes |
| Kernel / eBPF | Host layer | Very High | None | Limited | No, must combine |
| Vendor Integration | Policy only | Vendor-dependent | Vendor-dependent | Limited to Moderate | If hooks sufficient |

The specification makes a critical note about kernel-level eBPF implementations: they cannot satisfy AARM conformance alone because eBPF operates at the syscall level without semantic awareness of what the action means in the context of the agent's session. eBPF can detect that a database connection was opened — it cannot evaluate whether that database connection aligns with the user's stated intent given everything the agent has done in this session. eBPF must be deployed as a defense-in-depth backstop alongside a semantic-aware architecture, not as a primary AARM implementation.

The vendor integration architecture carries its own specific warning: governance hooks must execute synchronously and prior to any side-effectful tool execution. Asynchronous or best-effort hooks do not satisfy AARM requirements. This is the same principle as R1 restated for vendor-side implementations — if the action fires before the hook runs, the governance opportunity has already passed.

What This Means for Governance and Security Teams Now

AARM introduces a new category of security requirement that most enterprise AI security programs do not currently have infrastructure to satisfy. Understanding where existing programs stand against the AARM framework is the first step toward addressing the gap.

For Security Teams Currently Relying on Identity and Access Controls

The AARM analysis of IAM and RBAC limitations is direct: these systems evaluate permissions in isolation and cannot detect compositional threats. For organizations whose AI agent security program consists primarily of OAuth scoping, role-based access, and access reviews, AARM reveals that the compositional risk surface is unaddressed. An agent that is appropriately scoped for every individual permission it holds can still construct a harmful sequence of individually-permitted actions. The security program that cannot see the sequence cannot catch the threat.

For Governance Programs Currently Built on Documentation

AARM is a runtime governance framework — it operates at execution time, not at approval time. A governance program that reviews agent deployments before they go live and produces documentation of approved scope is doing necessary work. It is not doing sufficient work for production agentic environments where the agent's actual behavior in a specific session may diverge from what was approved. AARM fills the gap between pre-deployment approval and production session governance.

For Compliance Teams Dealing With Article 72 and Audit Evidence

The tamper-evident receipt requirement in AARM (R5) and the identity binding requirement (R6) together produce exactly the kind of audit evidence that EU AI Act Article 72 post-market monitoring requires — and that most current enterprise audit trails do not capture. A receipt that binds action, session context, authorization decision, identity across all dimensions, and outcome in a cryptographically verifiable record is audit-ready evidence of runtime governance. It proves that decisions were made, not just that actions occurred.

Honest Limitation: AARM Is a Specification

AARM is v1.0 of an open specification with 46 companies building against it. The implementation ecosystem is early. Buyers looking for mature, enterprise-grade AARM-conformant products will find a market that is actively being built rather than one that has fully matured. The conformance testing protocol is published, but third-party conformance certification infrastructure does not yet exist at the depth that SOC 2 or ISO 27001 certification does. Organizations evaluating vendors against AARM should use the conformance requirements as an evaluation framework for vendor claims — understanding that "AARM-aligned" and "AARM-conformant" represent different levels of specification compliance.

"AARM is the first framework I have seen that starts from the right question for agentic AI security: not whether the agent has access, but whether what it is doing right now is appropriate given what it has done so far in this session and what the user actually asked for. That reframe — from access to action, from identity to intent, from point-in-time to session-aware — is the architectural shift that agentic AI security requires. The CSA's adoption of this framework under Jim Reavis's leadership gives it the vendor-neutral credibility it needs to become a genuine industry standard rather than one company's internal specification. This is a category GAIG will be covering closely."

Nathaniel Niyazov

CEO, GetAIGovernance.net

Sources & References

AARM Specification v1.0 — aarm.dev (Autonomous Action Runtime Management)

CSA — Securing the Agentic Control Plane: Key Progress at the CSAI Foundation (April 29, 2026)

GAIG — Your AI Monitoring Dashboard Is Full of Data Nobody Acts On

GAIG — AI Compliance: Certifications, Frameworks, and Laws Explained

Our Take

AI Security Take

The CSA's adoption of AARM is the most significant governance framework development for agentic AI security that GAIG has covered. Not because AARM is fully mature — it is not, and Jim Reavis is explicit about that. But because it formally names and architecturally defines a security gap that has been growing silently for the past eighteen months as enterprises deployed autonomous agents without any runtime governance layer whatsoever. GAIG is starting coverage of this category now because the gap it addresses is already causing harm in production environments, even before AARM is widely adopted.

The two exponentials Reavis describes — model capability growth and viral agent adoption — are both accelerating. The security infrastructure for governing what agents do with those capabilities at runtime has been growing much more slowly. AARM is the first serious attempt to close that gap at the specification level rather than at the product level. Specifying what a runtime governance system must do before the market fragments into incompatible proprietary approaches is exactly the right intervention at this moment in the market's development.

The action classification system — four categories replacing binary allow/deny — is the most practically significant innovation in the specification for security architects. It resolves the two failure modes that binary access control produces for agentic systems: over-blocking legitimate operations and over-permitting compositional threats. Organizations that are currently trying to govern AI agents with IAM policies and prompt guardrails need to understand that these tools address access, not action, and that the compositional risk surface their agents are creating is invisible to both.

The conformance requirements give procurement teams a specific evaluation framework for vendors claiming AARM alignment. Ask for live demonstration of pre-execution interception. Ask what a tamper-evident receipt looks like with full identity binding. Ask how the policy engine handles context-dependent deny versus allow for the same base action. Ask whether governance hooks execute synchronously before tool execution or asynchronously after. Those questions separate vendors with genuine AARM-class capability from vendors with AARM-adjacent marketing. GAIG will continue tracking this space closely as the implementation ecosystem matures.