Evaluating runtime detection for AI infrastructure? Submit an inquiry and GAIG will match you with vendors covering this threat surface.


Key Numbers

7.8 CVSS score — High severity. Qualys internal score: 95

NVD · Qualys ThreatPROTECT

732 Bytes in the public Python PoC exploit. No compiled code. No race condition.

Xint Code Research Team

91 Separate PoC exploits identified in the wild as of early May 2026

Exploit Intelligence via U of Toronto Security

9 yrs The optimization introducing the flaw was committed in 2017. Disclosed April 2026.

Sysdig / Linux kernel commit log

4 Bytes written into the page cache per write. Repeated at successive offsets, that is enough to stage shellcode into /usr/bin/su and get root.

Theori / Xint Code

CVE ID: CVE-2026-31431

CVSS Score: 7.8 HIGH

Disclosed: April 29, 2026

Kernels Affected: 4.14 → 6.19.11

PoC Status: Public / Active

Copy Fail is not a sophisticated nation-state tool. It is a 732-byte Python script that gets root on Ubuntu, Amazon Linux, RHEL, and SUSE without a race condition, without compiled code, and without touching any file on disk. Every file integrity tool you have will tell you nothing happened. The kernel knows otherwise.

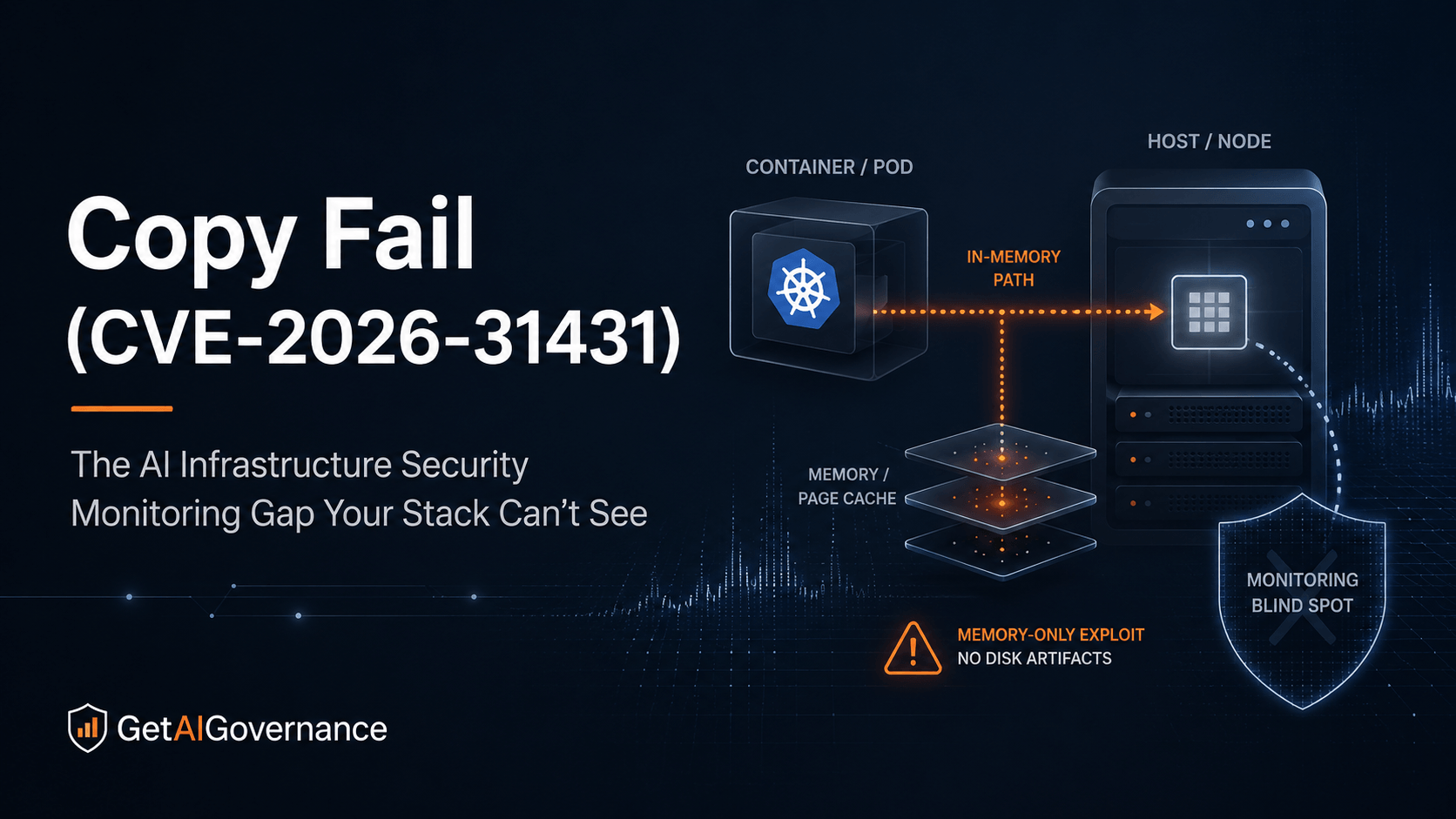

On April 29, 2026, security firm Theori publicly disclosed CVE-2026-31431, a local privilege escalation vulnerability in the Linux kernel that had been sitting in production infrastructure since a 2017 performance optimization. The security community named it Copy Fail. What sets it apart from most kernel CVEs is not the severity score. It is the combination of three properties that rarely appear together: reliability, stealth, and container escape potential.

For enterprises running AI workloads on Kubernetes, this matters in a specific and urgent way. The detection gap at the center of Copy Fail is not a configuration failure or a missed patch. It is a structural assumption baked into every major monitoring and integrity tool: that malicious activity leaves marks on disk. Copy Fail invalidates that assumption entirely. It was not designed to do so; this is a consequence of where the corrupting write lands, in the page cache in memory rather than in the file on disk.

This is a deep dive into how Copy Fail works, why standard tooling misses it, what the Kubernetes blast radius looks like for AI infrastructure, and what a governance program needs to do about the accountability gap it exposes.

What Copy Fail Actually Does

The vulnerability lives in the Linux kernel's algif_aead module — the AEAD socket interface of the kernel's userspace crypto API (AF_ALG). In 2017, a performance optimization introduced by commit 72548b093ee3 switched AEAD operations to run "in-place" by setting the source and destination buffers to the same memory. That optimization remained in production kernels for nine years.

The researchers at Xint Code, whose AI-assisted analysis produced the initial finding, describe the technical mechanism with unusual precision:

"Copy Fail (CVE-2026-31431) is a logic bug in the Linux kernel's authencesn cryptographic template. It lets an unprivileged local user trigger a deterministic, controlled 4-byte write into the page cache of any readable file on the system. A single 732-byte Python script can edit a setuid binary and obtain root on essentially all Linux distributions shipped since 2017."

Xint Code Research Team — Copy Fail: 732 Bytes to Root on Every Major Linux Distribution

Four bytes sounds small. The payload is not the point. The location is. When a readable file is spliced into an AF_ALG socket, the kernel passes references to that file's page cache pages rather than making copies. The authencesn algorithm writes four bytes as scratch space for Extended Sequence Number rearrangement — and because of the flaw, those four bytes land directly inside the spliced file's cached data in memory.

The Sysdig Threat Research Team documented the complete exploitation chain:

"The HMAC verification fails as expected, but the corruption persists in the page cache. Repeating the primitive at successive offsets stages a small shellcode into the cached pages of /usr/bin/su. Running su afterward executes the patched binary and yields a root shell."

What the attacker controls is the target file, the write offset, and the four-byte payload. No race condition. No compiled code. No per-distribution offsets or kernel version checks. The same script runs on Ubuntu 24.04, Amazon Linux 2023, RHEL 10.1, and SUSE 16. Microsoft's security team confirmed the scope in their analysis:

"Successful exploitation leads to full root privilege escalation (high impact to confidentiality, integrity, and availability) and could facilitate container breakout, multi-tenant compromise, and lateral movement within shared environments. Its reliability, stealth (in-memory-only modification), and cross-platform applicability make it particularly dangerous in cloud, CI/CD, and Kubernetes environments where untrusted code execution is common."

The Detection Gap That Makes This a Governance Problem

Most kernel CVEs are serious. This one is governance-relevant in a way most are not, because the gap it exposes is in the monitoring layer — not just the security layer. File integrity monitoring, hash-based validation, and disk-based scanning all share the same foundational assumption: if something changed, the change is on disk. Copy Fail operates entirely in memory.
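A toy model makes the disk-versus-memory distinction concrete. The Python sketch below is not kernel code: `ToyPageCache` and its methods are hypothetical, reads are served from an in-memory copy, and write-back flushes only pages marked dirty. It assumes, consistent with the in-memory-only description above, that the Copy Fail write modifies a cached page without ever marking it dirty, so a scanner that inspects only the on-disk bytes sees the original file.

```python
import hashlib


class ToyPageCache:
    """Toy model of one cached file; not how the kernel is actually implemented."""

    PAGE_SIZE = 4096

    def __init__(self, disk_bytes: bytes) -> None:
        self.disk = bytearray(disk_bytes)   # what a disk-level scanner inspects
        self.cache = bytearray(disk_bytes)  # what processes read and execute
        self.dirty_pages: set[int] = set()

    def write(self, offset: int, data: bytes) -> None:
        """Ordinary write path: update the cache and mark every touched page dirty."""
        self.cache[offset:offset + len(data)] = data
        first = offset // self.PAGE_SIZE
        last = (offset + len(data) - 1) // self.PAGE_SIZE
        self.dirty_pages.update(range(first, last + 1))

    def scratch_write(self, offset: int, data: bytes) -> None:
        """Assumed model of the Copy Fail primitive: update the cache, never mark it dirty."""
        self.cache[offset:offset + len(data)] = data

    def writeback(self) -> None:
        """Flush dirty pages only; clean pages are never written to disk."""
        for page in sorted(self.dirty_pages):
            start, end = page * self.PAGE_SIZE, (page + 1) * self.PAGE_SIZE
            self.disk[start:end] = self.cache[start:end]
        self.dirty_pages.clear()


def sha256(data) -> str:
    return hashlib.sha256(bytes(data)).hexdigest()


original = bytes(8192)
f = ToyPageCache(original)
f.scratch_write(100, b"\xde\xad\xbe\xef")
f.writeback()
print(sha256(f.disk) == sha256(original))   # True: the disk-level hash still matches
print(sha256(f.cache) == sha256(original))  # False: the bytes that get executed changed
```

Swapping `scratch_write` for the ordinary `write` marks the page dirty, and after `writeback` the disk copy changes too. That asymmetry is the detection gap in miniature.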

Oligo's security team, who published the first detailed post-disclosure analysis, named this directly:

"Most detection and integrity tooling is built around a core assumption: if something malicious happened, it left a mark on disk. Copy Fail invalidates that assumption entirely. File integrity monitoring checks the disk. The disk is untouched. Hash-based validation compares against the on-disk binary. It matches. Disk-based scanning finds nothing, because there's nothing to find there."

Mike O'Malley, Oligo Security — Copy Fail: When "Local" Becomes a Full System Problem, May 4, 2026

This is the point that matters most for security program owners. An organization can have fully deployed file integrity monitoring, continuous hash validation, and a functional SIEM receiving disk-level alerts — and every one of those controls will produce a clean report after a successful Copy Fail exploitation. The attacker gained root. The audit trail shows nothing.

CERT-EU's advisory, published April 30, 2026, confirmed that even MAC mechanisms fail in default configurations:

"MAC mechanisms such as SELinux and AppArmor can mitigate the exploit, but only when they are configured so that only legitimately required services are granted access to the AF_ALG socket family. In default configurations, any unconfined or broadly permitted process can still open AF_ALG sockets, so the protection is effectively absent and the exploit remains reachable."

Qualys assigned CVE-2026-31431 a Qualys Vulnerability Score of 95 out of 100, well above the severity that the CVSS 7.8 base score alone conveys, specifically because of active exploitation in the wild, public PoC maturity, and the breadth of affected distributions. By the time of their analysis, 91 separate proof-of-concept exploits had been identified in the wild.

The Governance Accountability Gap

A monitoring program that cannot detect active exploitation is not a monitoring program. It is a dashboard. Copy Fail exposes which organizations have runtime behavioral detection versus which ones have disk-based tools they believe are equivalent. Those are not the same thing, and this CVE makes the difference operational rather than theoretical.

The Kubernetes Blast Radius for AI Infrastructure

Copy Fail is classified as a local privilege escalation — meaning an attacker needs some form of code execution on the target system before the exploit fires. That description is technically accurate and operationally misleading for anyone running AI workloads on shared Kubernetes infrastructure.

The Bugcrowd analysis is direct on why the "local" framing understates the real exposure:

"The cloud-native posture of the last decade has been to treat the Linux container as a defense-in-depth layer. 'Run untrusted code in a container, so even if it's malicious, it can't reach the host.' Copy Fail puts real pressure on that posture because the page cache is host-wide and the primitive is reliable. Don't be fooled by the 'high' (not critical) CVSS score."

The page cache is shared across all processes on a host, including across container boundaries. The University of Toronto Information Security advisory spells out the consequence: "Kubernetes container escape: because the page cache is shared across all processes on a host, including across container boundaries, a compromised container can corrupt setuid binaries visible to other containers and the host kernel."

For AI infrastructure specifically, the foothold problem is different from a typical enterprise environment. Three surfaces are particularly exposed:

AI training and inference nodes on shared Kubernetes clusters. Most enterprise AI deployments run multiple workloads on shared node pools to optimize GPU utilization. Namespace isolation does not address this class of issue. A compromised inference pod can reach host-level binaries.

CI/CD pipelines running model evaluation or fine-tuning jobs. These runners frequently execute code from external repositories, third-party model weights, or user-submitted evaluation scripts. Any of those inputs can carry the 732-byte exploit.

Jupyter notebook environments and AI development infrastructure. Shared notebook servers are among the most common AI infrastructure deployments and among the most permissive in terms of code execution by unprivileged users. A researcher running their own notebook code on a shared host is exactly the foothold Copy Fail needs.

The governance accountability question here is one that most AI security programs have not yet formally assigned: who owns Kubernetes node security for AI workloads? In most organizations, the answer is split between infrastructure teams, platform engineering, and AI operations — with no single named owner and no defined response SLA for a kernel-level compromise. That ownership gap is what Copy Fail makes urgent.

How Detection Has to Work

Because Copy Fail leaves no disk artifacts, detection has to operate at the runtime layer, observing what the system is actually doing rather than comparing file states before and after. Oligo's post identified the behavioral signals that, taken together, constitute a detection signature:

AF_ALG socket usage associated with authencesn templates

splice() activity targeting setuid binaries

Unprivileged processes interacting with privileged execution paths

Unexpected root shell spawning

Anomalous execution of trusted binaries following cryptographic activity

No single signal is conclusive. The detection is the correlation of multiple behavioral signals in sequence — which is precisely the kind of detection that disk-based tools cannot produce.
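The "correlation in sequence" idea can be sketched as a small per-actor state machine that alerts only when signals arrive in order within a time window. This is a minimal Python illustration, not Oligo's or Sysdig's actual logic. The `Event` schema, the 30-second window, and the three signal names are all assumptions; the names stand in for the AF_ALG/authencesn socket, the splice into a setuid binary, and the subsequent execution of that binary. In practice the events would come from a syscall-level sensor.

```python
from dataclasses import dataclass

# Assumed signal names, in the order the exploit chain would produce them.
SEQUENCE = ("af_alg_socket", "splice_into_setuid", "setuid_exec")
WINDOW_SECONDS = 30.0  # assumed correlation window


@dataclass
class Event:
    actor: str  # hypothetical grouping key, e.g. a container or session ID
    kind: str   # one of the signal names in SEQUENCE (other kinds never match)
    ts: float   # event timestamp in seconds


@dataclass
class _Progress:
    stage: int = 0        # how many signals of SEQUENCE have been matched so far
    started: float = 0.0  # timestamp of the first matched signal


class SequenceCorrelator:
    """Alerts when one actor emits every signal in SEQUENCE, in order, within the window."""

    def __init__(self, sequence=SEQUENCE, window=WINDOW_SECONDS):
        self.sequence = sequence
        self.window = window
        self.state: dict[str, _Progress] = {}

    def observe(self, event: Event) -> bool:
        progress = self.state.setdefault(event.actor, _Progress())
        # Drop a partial match that has outlived the correlation window.
        if progress.stage and event.ts - progress.started > self.window:
            progress.stage = 0
        if event.kind != self.sequence[progress.stage]:
            return False  # a single out-of-order signal is not conclusive
        if progress.stage == 0:
            progress.started = event.ts
        progress.stage += 1
        if progress.stage == len(self.sequence):
            del self.state[event.actor]  # reset so the next chain is tracked afresh
            return True
        return False


correlator = SequenceCorrelator()
events = [
    Event("pod-a", "af_alg_socket", 0.0),
    Event("pod-b", "setuid_exec", 1.0),  # isolated signal from another pod: no alert
    Event("pod-a", "splice_into_setuid", 2.0),
    Event("pod-a", "setuid_exec", 3.0),  # completes the chain for pod-a
]
alerts = [e.actor for e in events if correlator.observe(e)]
print(alerts)  # ['pod-a']
```

Requiring order and a time window is what keeps individual signals, each of which can occur legitimately on its own (`su` runs all the time), from flooding an analyst.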

Sysdig's runtime detection approach is one of the more specific in the published literature. Their team built a Falco rule targeting the AF_ALG page cache poisoning chain specifically:

"Sysdig Secure customers automatically have a detection in place, using the rule AF_ALG Page Cache Poisoning Leading to Privilege Escalation. It is part of the Sysdig Runtime Behavioral Analytics managed policy. This rule is very precise due to the advanced Observations detection engine."

The detection logic is worth understanding precisely because it illustrates the broader principle. The Falco rule monitors for AF_ALG socket creation — the first step in the exploit chain. It catches the attack before the page cache write completes, not after a root shell has already been spawned. That is what runtime detection provides that disk-based monitoring cannot: interception during the action, not inspection after the artifact.

Interim mitigation: disable the vulnerable kernel module (CERT-EU recommended)

```shell
# Verify no active AF_ALG usage before disabling (run as root)
lsof | grep AF_ALG

# Prevent algif_aead from being loaded, then unload it if it is currently loaded.
# This has no effect if algif_aead is built into the kernel rather than a module.
echo "install algif_aead /bin/false" > /etc/modprobe.d/disable-algif.conf
rmmod algif_aead 2>/dev/null || true

# Block AF_ALG socket creation via seccomp on all containerized workloads
# regardless of patch status; this prevents exploitation on unpatched kernels
```
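The seccomp control mentioned in the mitigation snippet can be expressed as a Docker/OCI-style seccomp profile that returns an error for any `socket()` call whose first argument (the address family) equals `AF_ALG`, which is 38 on Linux. The Python sketch below generates such a profile. The permissive `SCMP_ACT_ALLOW` default is a simplifying assumption; in practice you would merge this rule into your runtime's default profile. The sketch also names only `socket()` and does not address the legacy 32-bit `socketcall()` multiplexer.

```python
import json

AF_ALG = 38  # Linux address family number for AF_ALG (include/linux/socket.h)


def af_alg_block_profile() -> dict:
    """Docker/OCI-style seccomp profile that makes socket(AF_ALG, ...) fail with an error."""
    return {
        # Simplifying assumption: allow every other syscall. In production, merge the
        # rule below into your runtime's default profile instead of using this default.
        "defaultAction": "SCMP_ACT_ALLOW",
        "syscalls": [
            {
                "names": ["socket"],
                "action": "SCMP_ACT_ERRNO",
                # Argument 0 of socket() is the address family.
                "args": [{"index": 0, "value": AF_ALG, "op": "SCMP_CMP_EQ"}],
            }
        ],
    }


if __name__ == "__main__":
    # Redirect to a file under the kubelet's seccomp directory
    # (/var/lib/kubelet/seccomp by default) to use it as a Localhost profile.
    print(json.dumps(af_alg_block_profile(), indent=2))
```

A pod would then reference the file through `securityContext.seccompProfile` with `type: Localhost` and a `localhostProfile` path relative to that directory.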

What Having This Right Looks Like

The organizations that are not exposed to the governance dimension of Copy Fail — not just the technical vulnerability, but the accountability gap — share a specific set of characteristics. Each one maps to a control that standard disk-based monitoring programs do not provide.

Named ownership of Kubernetes node security for AI workloads. Not "the infrastructure team is responsible" — a specific named owner with a documented response SLA for kernel-level privilege escalation events. Most AI security programs have not made this assignment. Copy Fail is a forcing function.

Runtime behavioral detection deployed on every AI infrastructure host. The detection requirement is behavioral, not disk-based. Organizations that have deployed runtime observability tools — those that monitor syscall-level activity, process lineage, and socket creation patterns — have Copy Fail coverage. Organizations relying solely on file integrity monitoring, endpoint detection built on disk scanning, or hash-based validation do not.

Seccomp profiles on all containerized AI workloads. CERT-EU explicitly recommended this as a compensating control regardless of patch status. A seccomp policy that prevents AF_ALG socket creation blocks the first step of the exploit chain on unpatched kernels. Most AI workload deployments do not have seccomp profiles configured, because the compatibility risk and operational overhead have historically seemed to outweigh the benefit. Copy Fail changes that calculus.

An incident response playbook that covers container-origin host compromise. The standard AI incident response template covers model misbehavior, data leakage, and prompt injection. Almost none of them cover the scenario where a compromised AI workload escalates to root on the Kubernetes node and begins moving laterally. That playbook gap needs to be closed before the next Copy Fail, not after.

The Bugcrowd analysis named the structural shift that Copy Fail represents across the industry:

"Shared-kernel multi-tenancy is a riskier default than it used to be. If your isolation story is 'containers on a shared host kernel,' the threat model needs a hardware-or-VM boundary, not a namespace boundary."

What Copy Fail Doesn't Tell You

The governance conversation around Copy Fail is real and urgent. It also carries a risk of scope creep that could turn a specific, actionable vulnerability into a vague anxiety about AI infrastructure security in general. A few important limitations on the threat model are worth stating plainly.

Copy Fail requires local code execution first. An attacker cannot trigger it remotely over the network in isolation. It becomes dangerous when chained with an initial access vector — a compromised container, a CI job executing untrusted code, a weak credential, a web application vulnerability. Organizations that have no external exposure on their AI infrastructure nodes, run fully isolated single-tenant workloads, or have already deployed microVM or gVisor-based isolation face a materially different risk profile than a multi-tenant GPU cluster running untrusted model evaluation jobs.

Patching resolves the vulnerability. The upstream fix, which reverts the 2017 in-place optimization, was committed April 1, 2026. Patched kernels are available or in progress for all major distributions. Organizations that patch promptly, reboot to load the new kernel, and verify patch application remove the Copy Fail attack surface entirely. The monitoring and accountability gaps this article describes are real governance concerns regardless of patch status, but patching is the right first action, and it is decisive.
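The reboot step is the one most often skipped: a fixed kernel package can be installed while the vulnerable kernel keeps running until the next boot. A minimal Python sketch of that check follows. It assumes each subdirectory of `/lib/modules` corresponds to an installed kernel and that a naive numeric comparison of release strings is adequate within one distribution's versioning scheme. It only reports whether a newer kernel is installed than the one running; whether that kernel contains the Copy Fail fix still has to be confirmed against your distribution's advisory.

```python
import os
import platform
import re


def release_key(release: str) -> tuple[int, ...]:
    """Naive sort key: every run of digits, e.g. '6.8.0-60-generic' -> (6, 8, 0, 60)."""
    return tuple(int(n) for n in re.findall(r"\d+", release))


def reboot_pending(running: str, installed: list[str]) -> bool:
    """Heuristic: True if some installed kernel sorts newer than the running one."""
    newest = max(installed, key=release_key, default=running)
    return release_key(newest) > release_key(running)


if __name__ == "__main__":
    running = platform.release()  # same string as `uname -r` on Linux
    modules_dir = "/lib/modules"   # assumption: one subdirectory per installed kernel
    installed = os.listdir(modules_dir) if os.path.isdir(modules_dir) else []
    print(f"running={running} reboot_pending={reboot_pending(running, installed)}")
```

On a host where this prints `reboot_pending=True`, the patch is on disk but not yet in effect.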


Our Take


Copy Fail matters beyond its CVE score because of what it reveals about the monitoring architecture most enterprises have deployed for AI infrastructure. The detection gap is not a configuration error. It is a structural assumption — that malicious activity leaves disk artifacts — that has been true enough for long enough that it became invisible as an assumption. Copy Fail makes it visible by violating it cleanly and reliably.

Every organization running AI workloads on shared Kubernetes infrastructure should answer two questions this week. First: do we have runtime behavioral detection — syscall-level, process-lineage-level, behavioral signal detection — on our AI infrastructure hosts, or do our detection tools depend on disk state? Second: who is the named owner of Kubernetes node security for AI workloads, and what is their documented response SLA for a kernel-level privilege escalation event?

If the answer to the first question is "disk-based tools only," Copy Fail is not the last exploit that will bypass your detection entirely. If the answer to the second question is "unclear," then your AI security program has a governance accountability gap that a patch cannot close.

Browse AI Infrastructure Security and AI Threat Detection platforms in the GAIG marketplace for runtime behavioral detection tools evaluated for AI workload environments. Read the CISO's Pre-Failure Signal Guide for the broader signal framework this vulnerability fits into — or submit an inquiry and we'll match you with vendors who have built detection specifically for the AI infrastructure threat surface.