Why This Was Inevitable Before It Started

Samsung's semiconductor division is one of the most IP-sensitive environments on the planet. Semiconductor manufacturing processes are measured in nanometers. Source code governing chip measurement, defect detection, and yield optimization represents years of R&D and billions in competitive advantage. This is not a business unit where casual data handling is a minor risk. Every line of proprietary code is a trade secret.

On March 11, 2023, Samsung's device solutions division — which manages its semiconductor and display businesses — officially permitted employees to use ChatGPT. The decision came without a formal AI system registry, without an access control framework governing what data employees could submit to external models, and without any technical enforcement mechanism at the endpoint, network, or browser level.

The policy was a memo. The memo said employees should not submit company-related information or personal data. There was no technical control that enforced it. There was no shadow AI detection scanning what was leaving the environment. There was no data loss prevention layer scanning outbound content. There was no audit log capturing what employees were submitting to external AI services. The permission was granted into a governance vacuum.

ChatGPT itself warned users not to enter sensitive information — OpenAI stated this explicitly in its FAQ at the time. Samsung's engineers knew they were using an external service. What they did not fully understand, and what Samsung had not technically prevented, was that every prompt entered into the consumer-facing ChatGPT interface was potentially retained by OpenAI and could be used to train its models. That gap between employee awareness and organizational enforcement is not a training problem. It is a controls problem. And Samsung had zero controls in place.

What Actually Happened

Between March 11 and approximately March 30, 2023, three separate Samsung engineers in the semiconductor division submitted highly sensitive proprietary data to ChatGPT. All three incidents were reported by The Economist Korea on March 30 and subsequently confirmed through reporting by CIO Dive, TechCrunch, and Bloomberg. None of the three engineers was acting maliciously. They were trying to work efficiently with a tool their employer had just sanctioned.

Incident 1

An engineer pasted proprietary source code from Samsung's semiconductor facility measurement database download program into ChatGPT to identify and fix a bug. The code contained critical details about Samsung's semiconductor manufacturing measurement infrastructure.

Incident 2

An engineer pasted source code for a program that identifies defective semiconductor equipment into ChatGPT, seeking optimization suggestions. This code contained proprietary logic for detecting manufacturing defects — one of the most competitively sensitive capabilities in chip production.

Incident 3

An engineer recorded an internal Samsung meeting on a smartphone, transcribed the recording using Naver CLOVA — a separate AI transcription service — then fed the full transcript into ChatGPT to generate meeting minutes. Internal meeting content, including strategic discussions, entered an external AI pipeline.

As reported by the AI Incident Database (Incident #768), all three incidents occurred within approximately 20 days of Samsung officially permitting ChatGPT use. Samsung launched an internal investigation and initiated disciplinary proceedings against the engineers involved. On May 1, 2023, Samsung banned generative AI tools across all company-owned devices and internal networks. According to a reporter at TechCrunch, a Samsung spokesperson stated: "The company is reviewing measures to create a secure environment for safely using generative AI to enhance employees' productivity and efficiency."

A reporter at Bloomberg confirmed that Samsung's memo to staff noted the company's concern that data transmitted to external AI platforms is stored on external servers, making it "difficult to retrieve and delete" — and that the data could potentially be disclosed to other users. The damage was already done.

Confirmed Timeline

Mar 11, 2023: Samsung officially permits ChatGPT use in its device solutions division with no technical controls in place.

Mar 11–30: Three separate incidents of proprietary data submission to ChatGPT occur within 20 days.

Mar 30, 2023: The Economist Korea reports that Samsung has identified at least three internal data leak incidents.

Apr 2023: Samsung internal survey finds 65% of participants believe using generative AI tools carries a security risk.

May 1, 2023: Samsung bans generative AI tools across all company-owned devices and internal networks.

Aug 2023: OpenAI launches ChatGPT Enterprise, accelerated in part by the Samsung incident and enterprise demand for private deployments.

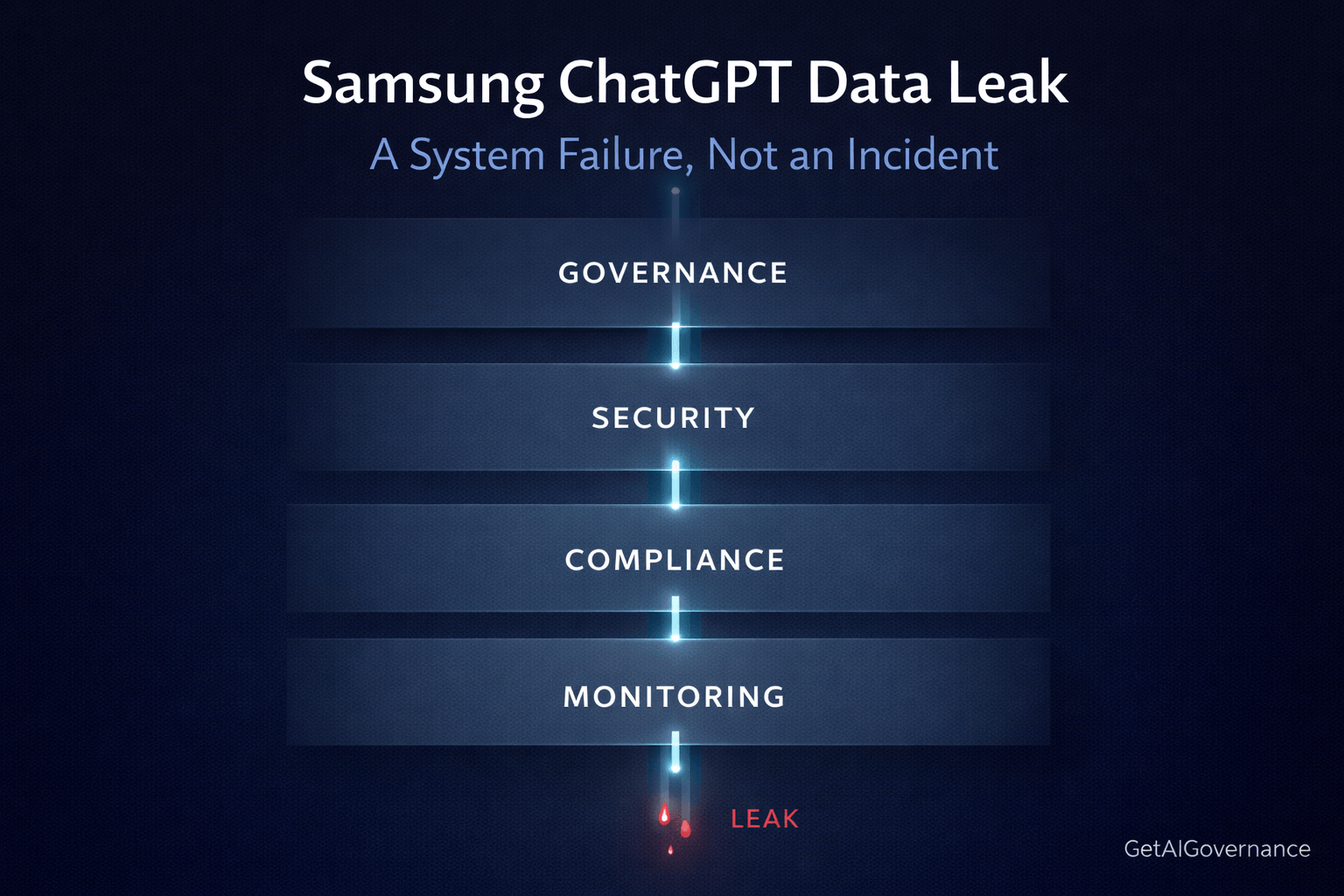

How Six Missing Controls Made Three Incidents Inevitable

This was not bad luck. Each of the following missing controls represents a specific point in the chain where the first incident could have been stopped — before there were three. They did not fail independently. They failed together, and each absent layer made the next failure more likely.

No AI System Registry or Third-Party AI Inventory (Governance)

Samsung permitted ChatGPT use without ever formally classifying it as an external AI system requiring governance review. The AI Governance Capabilities framework identifies visibility and discovery as the foundational governance layer — you cannot apply risk assessments, access controls, or monitoring to a system that was never formally registered. ChatGPT was sanctioned via memo, not governance process. That decision bypassed every downstream control that a proper AI registry would have triggered.

No Risk Classification for External AI Tool Access (Governance)

Permitting employees in a semiconductor division to submit prompts to an external consumer AI model is a high-risk operation. Samsung's device solutions division handles the most IP-sensitive data in the company. A proper risk and control definition process would have classified external LLM usage in this context as high-risk immediately — triggering mandatory access restrictions, data submission rules with technical enforcement, and elevated audit requirements. No classification was made. No risk tier was assigned. Policy preceded enforcement, and enforcement never arrived.
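
The registry-then-classification flow described in the two governance gaps above can be sketched in a few lines. This is a minimal illustration, not a description of any real product: the `AISystem` fields, the tiering rules in `classify`, and the `REQUIRED_CONTROLS` mapping are all assumptions chosen to mirror the control layers named in this article.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping from risk tier to the controls that must be active
# before access is granted. Names mirror the layers discussed in this article.
REQUIRED_CONTROLS = {
    RiskTier.LOW: {"audit_log"},
    RiskTier.MEDIUM: {"audit_log", "shadow_ai_detection"},
    RiskTier.HIGH: {
        "audit_log",
        "shadow_ai_detection",
        "prompt_dlp",
        "usage_monitoring",
    },
}


@dataclass(frozen=True)
class AISystem:
    """One registry entry for an AI tool (illustrative fields only)."""
    name: str
    external: bool                   # hosted outside company infrastructure
    vendor_may_train_on_input: bool  # vendor may retain or train on prompts
    data_sensitivity: str            # "low", "medium" or "high"


def classify(system: AISystem) -> RiskTier:
    """Illustrative rule set: external service plus sensitive data is high risk."""
    if system.external and system.data_sensitivity == "high":
        return RiskTier.HIGH
    if system.external or system.vendor_may_train_on_input:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def review_access(system: AISystem, active_controls):
    """Return the risk tier and the sorted list of controls still missing."""
    tier = classify(system)
    missing = REQUIRED_CONTROLS[tier] - set(active_controls)
    return tier, sorted(missing)
```

Under these assumed rules, registering consumer ChatGPT for a high-sensitivity business unit with no controls active returns the high tier and all four controls as missing, which is the point at which a review process would withhold approval.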

No Shadow AI Detection or Asset Discovery (Security)

The Asset & Discovery control layer, specifically Shadow AI Detection, exists to scan endpoints, browser traffic, and network connections for unauthorized or unreviewed AI tool usage. As detailed in the AI Security Controls framework, shadow AI detection flags connections to external LLM services in real time and identifies which user or device initiated the connection. Samsung had no such capability. When the first engineer pasted semiconductor source code into ChatGPT, nothing in Samsung's environment detected the outbound data transfer. The absence of this single control meant all three incidents were invisible until an internal report surfaced them.
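
At its simplest, the detection described above amounts to matching outbound hostnames against a watchlist and recording who initiated the connection. The sketch below assumes proxy or DNS events are already available as records. The `ProxyEvent` type, the two-entry watchlist, and the function names are hypothetical, and a real deployment would rely on a maintained, much larger domain list.

```python
from dataclasses import dataclass

# Illustrative watchlist only; not a complete list of LLM services.
LLM_SERVICE_DOMAINS = frozenset({"chat.openai.com", "bard.google.com"})


@dataclass(frozen=True)
class ProxyEvent:
    """One outbound connection seen by a proxy (hypothetical record shape)."""
    user: str
    device: str
    host: str


def matches_watchlist(host: str, watchlist) -> bool:
    """True if host equals a watchlist domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in watchlist)


def detect_shadow_ai(events, sanctioned=frozenset()):
    """Return events that reach a known LLM service not on the sanctioned list."""
    return [
        e for e in events
        if matches_watchlist(e.host, LLM_SERVICE_DOMAINS)
        and not matches_watchlist(e.host, sanctioned)
    ]
```

Each returned event carries the user and device, which is the attribution the paragraph above describes. Passing a `sanctioned` set lets a reviewed, approved service pass without being flagged.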

No Data Leakage Prevention on Outbound AI Prompts (Security)

Data Leakage Prevention (DLP) in the context of AI security means inspecting every model prompt for sensitive content patterns — source code signatures, proprietary identifiers, internal system references — before the prompt reaches an external service. The AI Security Controls framework classifies this under the Threat & Vulnerability layer. Samsung had no DLP scanning on outbound ChatGPT prompts. Semiconductor source code and internal meeting transcripts transited to OpenAI's servers without any inspection, pattern-matching, or blocking at the content level. A DLP control operating on the first incident would have flagged the source code pattern and blocked the submission before OpenAI received a single character of it.
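
A content-level check of the kind described above can be approximated with pattern rules applied to each prompt before it is sent. The sketch below is a deliberately small heuristic, not a production DLP engine. The rule names, the regular expressions, and the `corp.example.com` hostname pattern are all assumptions for illustration, and real tools combine many more signals to limit false positives and negatives.

```python
import re

# Hypothetical rule set: each name maps to a pattern that suggests sensitive content.
RULES = {
    "python_source": re.compile(r"^\s*(?:def|class)\s+\w+\s*[(:]", re.MULTILINE),
    "c_family_source": re.compile(
        r"^\s*#include\s*[<\"]|\b(?:int|void|char)\s+\w+\s*\([^)]*\)\s*\{",
        re.MULTILINE,
    ),
    "internal_hostname": re.compile(r"\b[\w-]+\.corp\.example\.com\b", re.IGNORECASE),
    "confidential_marker": re.compile(
        r"\b(?:confidential|internal use only)\b", re.IGNORECASE
    ),
}


def scan_prompt(prompt: str) -> list:
    """Return the names of all rules that match the prompt text."""
    return [name for name, pattern in RULES.items() if pattern.search(prompt)]


def should_block(prompt: str) -> bool:
    """Block the outbound prompt if any rule matches."""
    return bool(scan_prompt(prompt))
```

A prompt containing a function definition trips the source code rule and is blocked before transmission, while an ordinary natural-language question passes. Returning the matched rule names, rather than only a boolean, gives the audit and monitoring layers something to record.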

No Audit Trail for External AI Submissions (Compliance)

The Audit & Evidence Controls layer requires tamper-proof logging of every model interaction — what was submitted, by whom, to which external service, and when. Samsung had no audit trail capturing what employees were submitting to ChatGPT. When the incidents were eventually discovered through internal reporting, Samsung could not reconstruct a complete picture of what had been exposed. According to a reporter at CIO Dive, Samsung's post-incident response included implementing a 1,024-byte upload cap per prompt — a band-aid measure that confirmed no prior logging had existed. As the AI Governance Capabilities guide notes, compliance infrastructure that depends on human-entered documentation produces records of what humans chose to report — not what systems actually did. Samsung had neither.
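
One common way to make a log of AI submissions tamper-evident is to chain entries by hash, so that altering any earlier record invalidates every later one. The sketch below shows that idea using only the standard library. The entry fields and function names are assumptions, and a hash chain on its own detects tampering rather than preventing it, so a real system would also need protected storage for the log and for the prompt content itself.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: list, user: str, service: str, prompt: str, timestamp=None) -> dict:
    """Append a record of one submission, linked to the previous entry's hash."""
    raw = prompt.encode("utf-8")
    body = {
        "user": user,
        "service": service,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "prompt_sha256": hashlib.sha256(raw).hexdigest(),
        "prompt_bytes": len(raw),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _entry_hash(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and link; False if any entry was altered or reordered."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _entry_hash(body):
            return False
        prev = entry["hash"]
    return True
```

The log here stores a digest and size of each prompt rather than the prompt text, which keeps the sensitive content out of the log itself. Reconstructing exactly what was submitted, as the paragraph above requires, would additionally need the content retained in a separately secured store keyed by that digest.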

No Behavioral or Usage Monitoring for AI Tool Activity (Monitoring)

The AI Monitoring Signals framework identifies User Behavior Signals and Input/Prompt Signals as distinct monitoring categories. User behavior monitoring tracks how employees interact with AI tools — what they submit, how frequently, and whether usage patterns deviate from expected norms. Prompt signal monitoring tracks the structural characteristics of what enters AI systems. Samsung had neither. Three engineers submitted proprietary code to an external model across multiple sessions over 20 days with no monitoring system in place to detect the pattern, flag the anomaly, or alert a security or governance team. The incidents were discovered through internal reporting — meaning a human told someone, not a system. That is the definition of absent monitoring.
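
The two signal categories above can be combined in a simple aggregation over prompt events: count code-like submissions per user and track outbound volume. The sketch below is a minimal illustration under stated assumptions. The `PromptEvent` fields, the alert names, and both thresholds are hypothetical, and the `looks_like_code` flag is assumed to come from an upstream content check.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptEvent:
    """One prompt sent to an external AI service (hypothetical record shape)."""
    user: str
    service: str
    prompt_bytes: int
    looks_like_code: bool  # assumed to be set by an upstream content scanner


def usage_alerts(events, max_code_prompts=0, max_total_bytes=20_000):
    """Return {user: [reasons]} for users whose usage exceeds either threshold."""
    code_counts = defaultdict(int)
    total_bytes = defaultdict(int)
    for e in events:
        total_bytes[e.user] += e.prompt_bytes
        if e.looks_like_code:
            code_counts[e.user] += 1

    alerts = {}
    for user, volume in total_bytes.items():
        reasons = []
        if code_counts[user] > max_code_prompts:
            reasons.append("code_submitted_to_external_ai")
        if volume > max_total_bytes:
            reasons.append("high_outbound_volume")
        if reasons:
            alerts[user] = reasons
    return alerts
```

With the default threshold of zero tolerated code prompts, a single code submission to an external service raises an alert for that user, so the pattern would surface on the first incident rather than after the third.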

The critical point, as analyzed by a researcher at redteams.ai, is that "policy preceded enforcement: Samsung lifted its ChatGPT ban with only a memo-based policy and no technical enforcement. The 1,024-byte limit was not enforced at the network level, and there was no content classification system at the browser or endpoint level." Policy without enforcement is aspiration, not security. Samsung had aspiration. It had nothing else.
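
The distinction between a size limit and content classification is easy to see in code. The sketch below assumes the 1,024-byte cap is measured in UTF-8 bytes, which the reporting does not specify. The function names and the sample snippet are hypothetical.

```python
CAP_BYTES = 1024  # the per-prompt figure reported for Samsung's post-incident limit


def prompt_size_bytes(prompt: str) -> int:
    """Size of the prompt when encoded as UTF-8 (an assumed unit of measure)."""
    return len(prompt.encode("utf-8"))


def within_cap(prompt: str, cap: int = CAP_BYTES) -> bool:
    """True if the prompt fits under the byte cap; says nothing about its content."""
    return prompt_size_bytes(prompt) <= cap


# A made-up snippet standing in for proprietary code: short enough to pass the cap.
SAMPLE_SNIPPET = "def calibrate(offset_nm):\n    return offset_nm * 0.97\n"
```

Here `within_cap(SAMPLE_SNIPPET)` is true: the cap bounds how much can leave in one prompt, but a short function or a key constant fits comfortably beneath it. Under the UTF-8 assumption, Hangul syllables take three bytes each, so the same cap admits roughly a third as many Korean characters as ASCII ones.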

How the Layers Failed Each Other

The failure was not six independent problems. It was one system with no layers talking to each other. Here is exactly how each missing control left the next one with nothing to work from.

| Layer | Specific Gap | What It Left Exposed | GAIG Reference |
|---|---|---|---|
| Governance | No AI registry. ChatGPT never entered a formal review process. | No downstream controls could trigger because the system was never classified. Risk assessment, access controls, and monitoring all depend on registry entry. All were skipped. | |
| Governance | No risk classification. High-risk external AI use treated as a productivity tool. | Without a risk tier, no mandatory controls were triggered. The absence of classification meant the absence of every obligation that classification would have created. | |
| Security | No shadow AI detection. No endpoint or network scanning for external LLM connections. | The security layer had nothing to enforce because it had no visibility. It could not block what it could not see. Governance's failure to register the tool meant security never knew to watch for it. | |
| Security | No DLP on outbound AI prompts. Source code and meeting transcripts left the perimeter unchecked. | Even without shadow AI detection, a DLP control scanning outbound content would have flagged the source code pattern in Incident 1 before OpenAI received it. The absence of one reinforced the other. | |
| Compliance | No audit trail for external AI submissions. No tamper-proof log of what was submitted, when, and by whom. | Compliance had no records to produce. When the incidents surfaced, Samsung could not reconstruct what had been exposed. The 1,024-byte post-incident band-aid confirmed that nothing had been logged before. Regulators in South Korea and the EU noted this gap in subsequent policy discussions. | |
| Monitoring | No user behavior or prompt-pattern monitoring. Three incidents over 20 days were invisible until a human reported them internally. | Monitoring had no signals to surface because security never generated them and compliance never logged them. The monitoring layer was the last line that could have caught the pattern, and it was absent entirely. | |

This is the cross-layer failure pattern that makes Samsung more than a cautionary anecdote. Governance did not trigger security. Security did not generate compliance records. Compliance had nothing to audit. Monitoring had nothing to observe. Each layer's absence made the next layer's absence irrelevant. The whole stack was missing, not just one piece of it.

What It Actually Cost, And Which Gap Owns Each Line Item

The Damage Ledger

IP Exposure: Proprietary semiconductor source code, including defect detection algorithms and facility measurement systems, was submitted to OpenAI's consumer ChatGPT service, where prompts could be retained and used for model training. Samsung never disclosed the financial value of the exposed IP. The semiconductor fabrication industry measures process advantages in nanometers and in fractions of a percent of yield. Source code governing those processes represents billions in competitive advantage, and Samsung could not recover or delete it once submitted.

Owned by: Security — no DLP.

Investigation & Response: Samsung launched internal investigations, initiated disciplinary proceedings against three engineers, and conducted an internal survey across its organization. A reporter at CIO Dive noted Samsung implemented a 1,024-byte upload cap after the incidents — a reactive technical measure that confirmed the absence of prior controls. IBM's 2025 Cost of Data Breach Report, cited by a researcher at Tenlines, puts the average cost of AI-associated data breaches at $650,000 per incident in direct remediation, regulatory response, and legal fees. Samsung had three incidents.

Owned by: Compliance — no audit trail made reconstruction expensive.

Productivity Loss: Samsung banned generative AI tools across all company-owned devices and internal networks effective May 1, 2023. According to a reporter at TechCrunch, the ban covered ChatGPT, Microsoft Bing AI, and Google Bard simultaneously. While Samsung employees were locked out of every external AI productivity tool, competitors continued accelerating AI-assisted development. The productivity gap during the ban period — and the cost of developing internal alternatives — is unquantified but material.

Owned by: Governance. With no registry and no controlled deployment path, a blanket ban was the only available response.

Regulatory Attention: The incident contributed directly to regulatory discussions in South Korea, the EU, and the US about enterprise obligations when employees use third-party AI services. A researcher at redteams.ai documented that the Samsung case became a reference point in policy conversations across multiple jurisdictions about what constitutes adequate enterprise AI governance.

Owned by: Compliance — no audit trail, no evidence of controls, no defensible governance record.

Industry Impact: Samsung's response — building internal LLM alternatives to keep data within its own infrastructure — became a template for large enterprises globally. Bloomberg reported that major banks including Bank of America, Goldman Sachs, and JPMorgan moved to restrict or ban ChatGPT in the same period. The Samsung incident accelerated OpenAI's development of ChatGPT Enterprise, launched August 2023. The entire enterprise AI tool market pivoted on this incident. The cost of that pivot was borne by every organization that had to rebuild its AI access strategy from scratch instead of deploying with proper controls from the start.

What Having It Right Would Have Looked Like

A properly governed version of Samsung's March 2023 ChatGPT deployment would have looked like this. None of these controls are exotic. All of them existed in the market at the time. The gap was not technology availability. It was the absence of an AI governance program that connected them.

AI Registry entry before access was granted. Governance capability: Visibility & Discovery. ChatGPT would have been formally registered as an external AI system before the March 11 permission was issued. That registration would have triggered a risk classification review, which would have immediately flagged external LLM access in a semiconductor division as high-risk. Every subsequent control would have been triggered automatically from that classification.

Shadow AI detection scanning endpoint and network traffic. Security capability: Asset & Discovery Controls — Shadow AI Detection. When the first engineer opened ChatGPT on a Samsung device or Samsung network on March 11, shadow AI detection would have flagged the connection, logged the session, and surfaced the usage to the security team within minutes. Incident 1 would have been visible the moment it started.

DLP scanning on all outbound AI prompts for source code patterns. Security capability: Threat & Vulnerability Controls — Data Leakage Prevention. A DLP control inspecting outbound content for source code signatures, internal system identifiers, and proprietary pattern structures would have flagged Incident 1's semiconductor source code before it left Samsung's perimeter. The submission would have been blocked. OpenAI would never have received the data.

Tamper-proof audit logging of all external AI submissions. Compliance capability: Compliance & Evidence layer. Every prompt submitted to ChatGPT would have been logged with user identity, timestamp, session context, and content classification. When Incident 1 surfaced, Samsung's compliance team would have had a complete, timestamped reconstruction of exactly what was submitted, when, and by whom — usable as evidence in any regulatory or legal proceeding.

User behavior monitoring flagging anomalous AI tool usage patterns. Monitoring capability: User Behavior Signals. A monitoring layer tracking prompt patterns and usage anomalies would have surfaced the unusual behavior within Incident 1's session — a semiconductor engineer submitting structured code blocks to an external consumer AI service. That signal would have reached a security or governance team in real time, not three weeks later through internal reporting.

Third-party AI governance review before vendor access was approved. Governance capability: Third-Party AI Governance layer. A vendor review of OpenAI's data handling practices — specifically the consumer training data policy that made submitted prompts available for model training — would have been a mandatory step before any Samsung engineer was permitted to use the service. That review would have identified the data retention risk immediately and either blocked consumer ChatGPT entirely or required use of an API configuration with data retention disabled.

Every one of these controls was available in the market in March 2023. The Samsung engineers who triggered the incidents were not negligent in the way the word is usually meant. They were using a tool their employer sanctioned, doing their jobs efficiently. The failure was organizational, not individual. An organization with a functioning AI governance stack does not discipline engineers for doing what the governance program failed to prevent.

Looking for platforms that cover AI shadow detection, DLP for outbound prompts, audit trail generation, and behavioral monitoring for external AI tool usage? Browse the GAIG marketplace across AI Data Security, AI Access Control, AI Governance Platforms, and AI Audit & Documentation — or submit an inquiry and we'll match you with vendors that address your specific exposure.


Sources

TechCrunch — Samsung bans use of generative AI tools like ChatGPT after data leak (May 2, 2023)

Bloomberg — Samsung Bans Generative AI Use by Staff After ChatGPT Data Leak (May 2, 2023)

CIO Dive — Samsung employees leaked corporate data in ChatGPT: report (April 7, 2023)

AI Incident Database — Incident #768: ChatGPT Implicated in Samsung Data Leak

redteams.ai — Case Study: Samsung ChatGPT Confidential Data Leak (2023)

Tenlines — Samsung's ChatGPT Leak: What CISOs Should Learn (February 2026)

Cybernews — Lessons learned from ChatGPT's Samsung leak (May 9, 2023)

GAIG — AI Governance Capabilities Explained

GAIG — AI Security Controls Explained

GAIG — AI Monitoring Signals Explained

GAIG — AI Compliance: Certifications, Frameworks, and Laws Explained

Our Take

AI Governance Take

Samsung is not an outlier. It is the most documented version of a pattern that plays out every week in organizations that permit AI tool usage through memo-based policies with no technical enforcement. The difference between Samsung and the companies that avoided this incident in 2023 is not that those companies had better employees. It is that they had controls sitting between their employees and the external AI services those employees were using.

The specific failure pattern here — governance without enforcement, security without discovery, compliance without logging, monitoring without signals — is the same pattern behind every major enterprise AI incident. The layers exist in isolation or not at all. The registry does not trigger the risk classification. The risk classification does not activate the security controls. The security controls do not feed the audit log. The audit log does not surface to monitoring. The result is an organization that has a document saying it takes AI governance seriously and zero technical evidence to support that claim when something goes wrong.

Samsung's response, a 1,024-byte prompt cap followed by a blanket ban, is what happens when organizations have no governance infrastructure and respond to incidents with the only tool available: restriction. The irony is that the ban likely cost Samsung more in competitive productivity and AI development delay than a properly governed deployment would have cost to build. A memo is cheap. The incident it fails to prevent is not.

Two years later, the AI governance market has significantly matured. The platforms that address every layer of this failure chain exist and are actively deployed in enterprise environments. The question for organizations reading this is not whether Samsung's incident was preventable — it obviously was. The question is whether your current AI governance stack would have caught it. Most organizations cannot answer that question with confidence. That uncertainty is the gap.