A new analysis published by VentureBeat has spotlighted a serious and under-appreciated vulnerability in modern enterprise AI systems: tool registry poisoning. As organizations rapidly shift from simple copilots to fully autonomous agentic systems, the registries these agents use to discover and select tools have quietly become one of the most attractive attack surfaces in the entire AI stack.

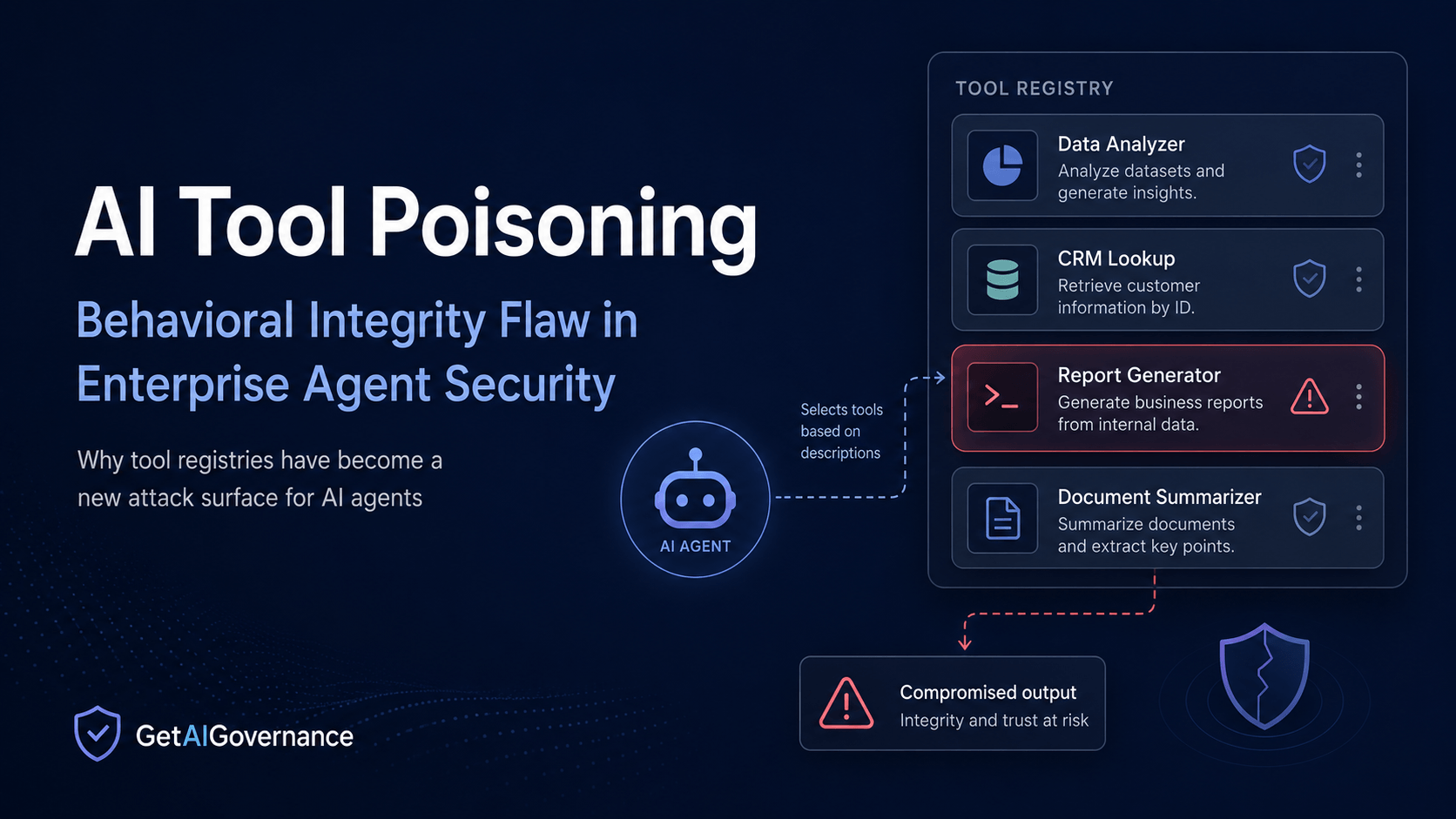

The fundamental problem is architectural. Modern AI agents do not blindly execute hardcoded functions. Instead, they reason over available tools by reading natural-language descriptions, parameter schemas, and metadata. They match these descriptions against the task at hand and then decide which tool to invoke. This design enables flexibility and composability, but it also creates a critical trust gap that traditional security controls were never built to address.

Principal engineer Nik Kale, who researched and reported the issue, captured the essence of the problem in one clear sentence:

“AI agents choose tools from shared registries by matching natural-language descriptions. But no human is verifying whether those descriptions are true.”

Principal engineer Nik Kale

This is not a minor technical edge case or a theoretical vulnerability. It represents a systemic failure in how enterprises are currently approaching agent security and governance. Traditional software supply chain protections — such as code signing, SBOMs, SLSA, and Sigstore — focus almost entirely on artifact integrity: confirming that the code has not been tampered with and comes from a trusted source.

What agents actually need, however, is behavioral integrity: assurance that the tool does exactly what its description claims, nothing more, and continues to behave that way throughout its lifecycle. The gap between these two concepts is where the real danger lies in the agentic era. When agents autonomously chain multiple tools together with minimal human oversight, even subtle manipulation of a single tool description can lead to significant security breaches, data leaks, or unauthorized actions.

Key Terms

Tool Registry Poisoning: The deliberate manipulation of tool metadata, natural-language descriptions, parameter schemas, or underlying behavior in a shared registry to influence or compromise how AI agents discover, select, and use tools.

Behavioral Integrity: The assurance that a tool performs exactly as described in its registry entry — and crucially, does nothing else — consistently throughout its entire lifecycle, especially during runtime execution.

Artifact Integrity: Traditional supply chain security controls (code signing, SBOMs, SLSA, Sigstore, etc.) that verify whether the code artifact is authentic, unchanged, and originates from an approved source.

Discovery Binding: A critical security control that cryptographically or technically binds the tool an agent actually calls at runtime to the exact version and description the agent evaluated during the discovery phase.

Model Context Protocol (MCP): An emerging standard for how AI agents communicate with and invoke external tools. Because it relies heavily on natural language descriptions, it is particularly susceptible to description-based poisoning attacks.

Behavioral Drift: A situation where a tool’s actual behavior changes after it has been registered and approved — either through legitimate updates or malicious modification — without the registry or agents being properly notified.

These terms mark a fundamental shift that many security and governance teams have not yet fully internalized: we are moving from static code security to dynamic, runtime behavioral governance. Most current tools and processes remain stuck in the former paradigm.

Conditions Driving This Change

Several powerful and mutually reinforcing trends have made tool registry poisoning not only possible, but highly attractive to both sophisticated nation-state actors and skilled cybercriminals:

Enterprise adoption of agentic AI has accelerated sharply in 2026. Organizations are no longer content with chat-based copilots — they are deploying autonomous agents capable of planning, reasoning, and executing multi-step workflows across internal systems and external tools.

Tool ecosystems are becoming significantly more open and decentralized. Standards such as the Model Context Protocol (MCP) allow agents to dynamically discover tools from internal registries, partner platforms, or even public sources.

Development and deployment velocity frequently outpaces security review processes. Teams prioritize speed and functionality, often registering new tools with only superficial checks on their descriptions and schemas.

Modern agents are powered by large language models that interpret tool descriptions using the same reasoning processes they use for instructions. This makes prompt-injection-style attacks embedded in metadata unusually effective.

Traditional security tooling and monitoring solutions remain heavily focused on code-level threats and human identities, leaving a growing blind spot around autonomous, non-human tool usage and behavioral monitoring.

Supply chain attacks targeting AI components have proven extremely successful over the past year, encouraging adversaries to explore newer, more subtle vectors such as registry poisoning.

Regulatory frameworks like the EU AI Act are increasing pressure on organizations to demonstrate real runtime controls and auditability for high-risk AI systems, yet many programs still rely on documentation and static checks.

There is currently a widespread lack of standardized behavioral verification mechanisms, meaning organizations are unknowingly trusting unverified natural language descriptions at massive scale.

This combination of factors has created ideal conditions for a new class of attacks that exploit the fundamental gap between what agents “see” during tool discovery and what actually executes at runtime.

What the Research Reveals

In his VentureBeat analysis, Nik Kale details how attackers can compromise agent behavior through multiple stages of the tool lifecycle. The vulnerability exists because agents fundamentally rely on descriptive metadata rather than strict, enforceable behavioral contracts.

Kale explains the core shortfall of today’s defenses with striking clarity:

“Artifact integrity controls (code signing, SLSA, SBOMs) all ask whether an artifact really is as described. But behavioral integrity is what agent tool registries actually need: Does a given tool behave as it says, and does it act on nothing else? None of the existing controls address behavioral integrity.”

Nik Kale

He goes further with a sobering historical parallel:

“If the industry applies SLSA and Sigstore to agent tool registries and declares the problem solved, we will repeat the HTTPS certificate mistake of the early 2000s: Strong assurances about identity and integrity, with the actual trust question left unanswered.”

Nik Kale

The research outlines several concrete attack techniques, including:

Embedding hidden instructions or prompt injections inside tool descriptions

Causing behavioral drift after initial approval

Bait-and-switch tactics where a tool behaves normally during review but performs malicious actions during live execution

These attacks are especially dangerous because they can bypass many existing security layers while appearing completely legitimate to both the AI agent and human oversight teams.

Our Take

AI Governance Take

This report is a clear and urgent illustration of GAIG’s central thesis: governance is architecture, not documentation. In the agentic era, enterprises can no longer afford to trust natural language descriptions or static pre-deployment checks alone. Runtime behavioral enforcement must become a foundational control layer.

Organizations that continue relying primarily on artifact integrity, manual reviews, and policy documents are quietly accumulating serious Pre-Failure Signals. A single successfully poisoned tool in a shared registry can lead to large-scale data exfiltration, privilege escalation, unauthorized financial transactions, or other harmful actions — all while maintaining the appearance of normal, expected behavior.

Effective mitigation demands new architectural patterns: strong discovery binding mechanisms, runtime output validation and schema enforcement, continuous behavioral monitoring, and clear human ownership and accountability for critical tool registries.

Without these controls, visibility remains high while actual accountability stays dangerously low. The agentic shift has fundamentally changed the risk model. Agents don’t just suggest answers — they take action. Governance, security, and compliance programs that fail to adapt to the need for behavioral integrity will face rising incidents, regulatory findings, and real business damage.

CISOs, governance program managers, and security architects building or evaluating agent platforms should treat this as an immediate priority. The window for getting ahead of this class of attacks is narrowing quickly.