
In February 2023, Derek Mobley — an African American, disabled man over the age of 40 — filed suit against Workday claiming their AI applicant screening system rejected him from more than a hundred jobs automatically. Not slowly. Not after review. Within minutes of submission. The court didn't dismiss it. By May 2025, a federal judge conditionally certified the age discrimination claims as a nationwide collective action. Workday's own court filings acknowledged that 1.1 billion applications were rejected through their software during the relevant period. The collective could include hundreds of millions of people.

Everyone called this an AI bias problem. It wasn't. The algorithm did exactly what it was built and incentivized to do — screen fast, screen at scale, reduce friction for employers drowning in applications. The failure was that nobody owned what happened after that. Nobody validated that the process worked the way the documentation said it did. Nobody had the authority or the structure to catch what the system was actually doing in production. That is a workflow governance failure dressed as an AI problem. And almost every organization using AI in hiring right now has the same exposure.

1.1B Applications rejected via Workday AI during the relevant period

11,500+ Workday clients globally — 60%+ of the Fortune 500

100M+ Potential members of the conditionally certified collective action

The Full Timeline And How We Got Here

This case didn't happen overnight. It built for years across documented court proceedings. Here is the full timeline of Mobley v. Workday, Northern District of California, Case No. 23-cv-00770-RFL.

Feb. 2023

The Complaint Filed

Derek Mobley files suit in the Northern District of California alleging Workday's AI-powered applicant recommendation system discriminated against him based on race, age, and disability. He states he applied to over 100 positions using Workday's platform and was rejected from every single one, many within minutes of submission. The complaint names disparate impact as the legal theory: no intentional discrimination required, just systematic, documented outcome disparities.

Early 2024

First Motion to Dismiss: Granted With Leave to Amend

Workday's first motion to dismiss is granted, but the court allows the plaintiff to amend the complaint, so the case stays alive. Workday's primary defense: they are not an employer and therefore cannot be liable for employment discrimination. The court begins examining whether an AI vendor performing traditional hiring functions could be held directly liable under an "agent" theory.

Apr. 2024

EEOC Files Amicus Brief

The Equal Employment Opportunity Commission files a brief supporting the plaintiff, urging the court to deny Workday's second motion to dismiss. The EEOC warns that if Workday's algorithmic tools in fact make hiring decisions at the scale Mobley suggests, it would be critically important to ensure Workday complies with federal anti-discrimination law. This signals that federal regulators view AI vendor liability as an open and serious legal question.

Jul. 2024

Second Motion to Dismiss: Denied

Judge Rita Lin issues a mixed ruling. The court rejects the theory that Workday acted as an "employment agency" under federal law. But it allows the "agent" theory of liability to proceed, establishing precedent that AI vendors who perform traditional hiring functions can face direct discrimination liability even without being the employer. This ruling is new legal territory that every HR AI vendor should be studying.

Late 2024

Workday Publishes External Bias Audit, and the Numbers War Begins

Workday commissions an external bias audit covering 10 of its largest enterprise clients using New York City Local Law 144 methodology. Conclusion: no evidence of disparate impact on race or gender. Clean bill of health. Mobley's attorneys run their own analysis on the same published data and find statistically significant disparities against African American applicants and women, with odds of less than one in a quadrillion that the system was race-neutral. Same data. Opposite conclusions. This is the moment the audit theater problem becomes impossible to ignore.

May 2025

Nationwide Collective Action Conditionally Certified

Judge Lin conditionally certifies the ADEA age discrimination claims as a nationwide collective action. The court finds that Mobley sufficiently alleged a unified policy in the form of Workday's AI screening system. Workday argued that different employer clients' use of the tools made collective treatment inappropriate. The court ruled those differences immaterial. The case is now potentially one of the largest collective actions ever certified.

Mar. 2026

Age Discrimination Claims Survive Latest Challenge

A federal judge allows age discrimination claims under the Age Discrimination in Employment Act to move forward. The court reinforces that anti-discrimination laws apply to job seekers, not just people already employed. Workday's argument that disparate impact claims apply only to employees, not applicants, is rejected. The case continues.

Ongoing

Discovery Phase: The Reckoning

Discovery will examine how Workday's screening tools operate, how training data was selected, and whether the system creates unintended bias. Two specific Workday tools have come under scrutiny: Candidate Skills Match (part of Workday Recruiting) and related recommendation components. Both sides must now produce documentation of how decisions were made at scale. This is precisely the documentation most organizations cannot produce.

Three Governance Failures

Here is what actually broke down. Not the algorithm. The governance structure around it. Workday's AI did what it was trained to do. What failed was everything that was supposed to catch it.

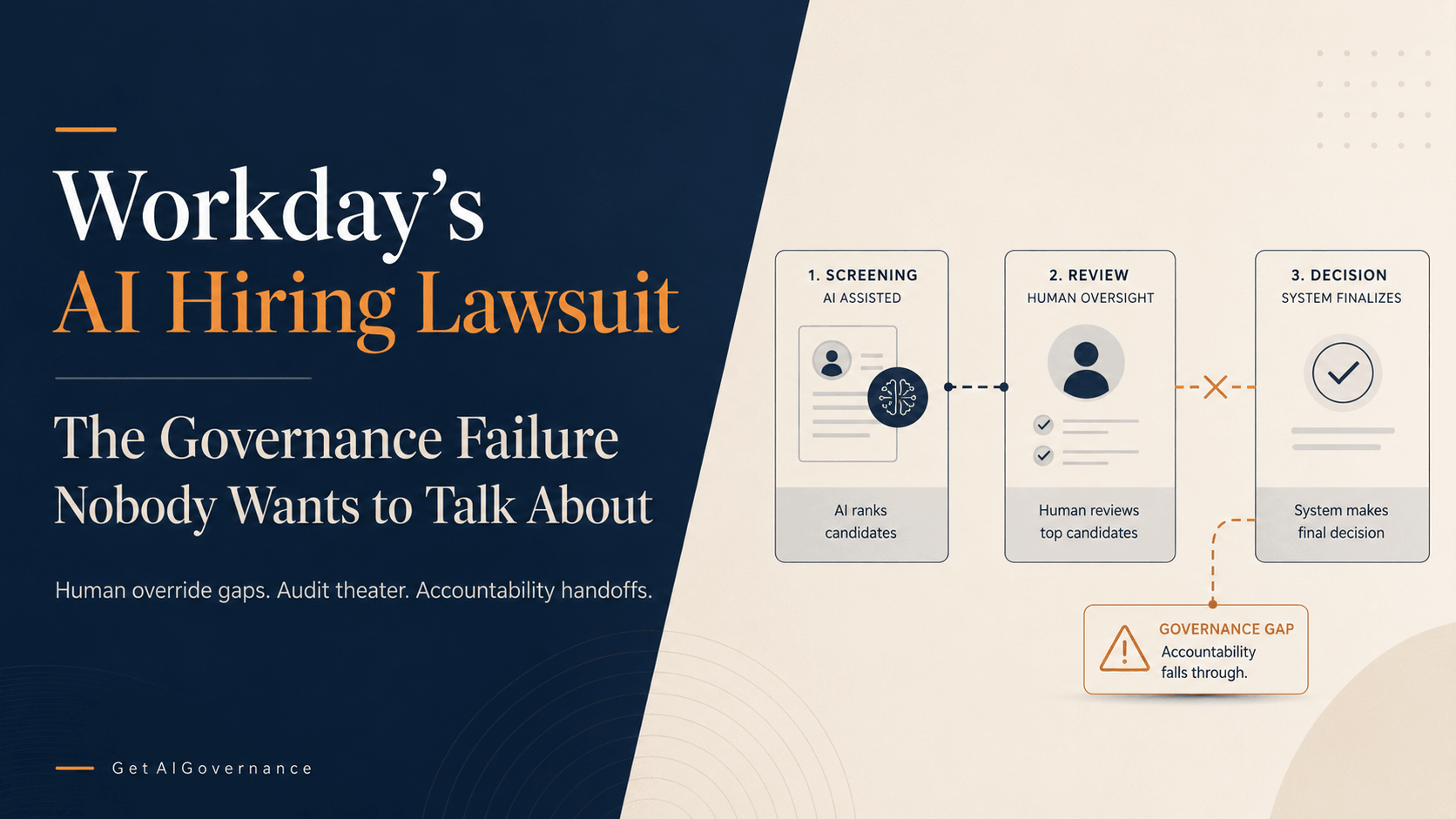

Failure 1 — The Human Override Gap

Nobody had named authority to question what the AI recommended. HR teams saw outputs. They acted on outputs. The entire value proposition of the tool was to eliminate the human review that would have caught the pattern. A candidate rejected within minutes of submitting an application was never going to be reviewed by a person — that was the feature, not the bug. Governance requires named people with actual authority to override automated decisions. There were none. And when courts asked who owned that decision, the answer from both Workday and its employer clients was the same: not us.

Failure 2 — The Audit Theater Problem

Workday commissioned an external bias audit. It cleared them. Plaintiff attorneys ran the same data and found disparities, with odds of less than one in a quadrillion that the system was race-neutral. Same data. Opposite conclusions. This happens because bias testing has no single agreed methodology: one approach minimizes errors across the whole dataset, another focuses on equal error rates across groups, and a third tries to ensure correct decisions at equal rates. When groups differ in their underlying rates, these definitions generally cannot all be satisfied at once. When the party being audited gets to choose the methodology, the audit produces a document, not a verdict. Documentation of compliance is not compliance. GAIG has been making this point about monitoring dashboards for two years. It applies here with identical force.
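To make "same data, opposite conclusions" concrete, here is a minimal Python sketch. It does not reproduce either side's analysis in the Mobley case, and it does not implement the three fairness definitions named above. It uses a simpler pair of tests that can disagree in the same way: an impact-ratio check against the four-fifths rule of thumb, and a two-proportion z-test for statistical significance. The applicant counts and function names are hypothetical, chosen only for illustration.

```python
import math


def selection_rate(selected: int, applicants: int) -> float:
    """Share of applicants in a group who were advanced."""
    return selected / applicants


def impact_ratio(rate_group: float, rate_reference: float) -> float:
    """Group selection rate divided by the reference group's selection rate."""
    return rate_group / rate_reference


def two_proportion_p_value(sel_a: int, n_a: int, sel_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two selection rates."""
    pooled = (sel_a + sel_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (sel_a / n_a - sel_b / n_b) / std_err
    # Normal-approximation tail probability, doubled for a two-sided test.
    return math.erfc(abs(z) / math.sqrt(2))


# HYPOTHETICAL counts, not data from the case.
REFERENCE = {"applicants": 1_000_000, "selected": 100_000}   # 10% advanced
COMPARISON = {"applicants": 1_000_000, "selected": 90_000}   # 9% advanced

ratio = impact_ratio(
    selection_rate(COMPARISON["selected"], COMPARISON["applicants"]),
    selection_rate(REFERENCE["selected"], REFERENCE["applicants"]),
)
passes_four_fifths = ratio >= 0.8

p_value = two_proportion_p_value(
    REFERENCE["selected"], REFERENCE["applicants"],
    COMPARISON["selected"], COMPARISON["applicants"],
)
significant = p_value < 0.05

print(f"impact ratio: {ratio:.2f} (passes four-fifths: {passes_four_fifths})")
print(f"p-value: {p_value:.3g} (statistically significant: {significant})")
```

Under these assumed numbers, the impact ratio clears the four-fifths threshold while the significance test flags the gap as far beyond chance. Whoever picks the test picks the headline, which is why the choice of methodology cannot sit with the party being audited.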

Failure 3 — The Accountability Handoff Gap

Workday said employers controlled the hiring decisions. Employers said Workday's tool controlled the screening. Liability lived in the gap between them — unowned, unmonitored, and unresolved — for years before courts assigned it. Courts have now said employers own it whether they claimed to or not. The lesson is not complicated: if AI performs a function in your organization, you are accountable for what that function does. Outsourcing the tool does not outsource the accountability. This is the accountability doctrine that GAIG applies to every governance layer, and Workday is the most expensive illustration of why it matters.

GAIG Key Finding

The Workday failure is not an ordinary AI failure. It is an accountability failure that used AI as its mechanism. The distinction matters because fixing the AI model without fixing the accountability structure around it leaves the same liability in place with a different tool doing the damage.

What Good Actually Looks Like Before You Turn on the AI

Before any organization deploys AI in hiring, performance management, or any other workforce decision, here is what a governed workflow actually requires. This isn't aspirational. It's the minimum — and it's what Workday's employer clients did not have in place.

Named ownership of the end-to-end process. Someone with a name and a title owns what the AI screening does. Not the vendor. Not the platform. A person inside your organization who can be asked in a deposition what they did when the system flagged an anomaly and can give a specific, documented answer.

Workflow validation before deployment. The process is tested in conditions that reflect actual use — real job postings, real applicant volumes, real edge cases. The question isn't whether the tool works in a demo. It's whether the handoffs between the tool and the humans who act on its outputs function the way the documentation claims.

Independent bias methodology. Audit frameworks chosen by independent parties, not by the vendor being tested. If your vendor runs their own bias audit and clears themselves, that is marketing. Effective oversight requires reviewers with authority to challenge, methodology they didn't design, and conclusions they can defend under cross-examination.

Real override authority. A human being must be able to say "the system scored this candidate low and I'm advancing them anyway" without that being treated as breaking the process. If your governance structure makes human override the exception rather than the protected option, it's not governance — it's a rubber stamp with extra steps.

Audit trails that capture human responses, not just system events. A log of what the AI did is not compliance documentation. An audit trail shows what the AI did, what the human reviewed, what decision the human made, and why. If you can't produce that chain in discovery, you don't have a governed process. You have system logs.

Periodic real-world validation that the documented process matches actual execution. Policies drift. People find workarounds. The process that gets documented in onboarding is rarely the process that runs in production six months later. Someone has to check — systematically, on a schedule, with findings that go somewhere actionable.
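The difference between system logs and an audit trail, as described in the requirements above, can be sketched as a data structure. This is one possible shape, not a standard or any vendor's schema: the class name, field names, and sample values are all hypothetical. The point is that the record has slots for the human response, and that a gap in those slots is detectable.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ScreeningAuditRecord:
    """One screening decision: what the system did and how a human responded."""

    application_id: str
    system_recommendation: str            # "advance" or "reject"
    system_score: float
    reviewer: Optional[str] = None        # a named person, not a team alias
    human_decision: Optional[str] = None  # "advance" or "reject"
    rationale: Optional[str] = None       # why the human decided as they did

    @property
    def is_override(self) -> bool:
        """True when a human decided differently from the system."""
        return (
            self.human_decision is not None
            and self.human_decision != self.system_recommendation
        )

    def missing_human_fields(self) -> list:
        """Names of the human-response fields absent from this record."""
        required = ("reviewer", "human_decision", "rationale")
        return [name for name in required if not getattr(self, name)]


# A system-only entry: the tool acted and no human response was captured.
system_only = ScreeningAuditRecord("app-001", "reject", 0.12)

# A full chain: system output, named reviewer, decision, and reason.
reviewed = ScreeningAuditRecord(
    application_id="app-002",
    system_recommendation="reject",
    system_score=0.31,
    reviewer="Hypothetical Reviewer",
    human_decision="advance",
    rationale="Relevant experience listed under a nonstandard job title.",
)

print(system_only.missing_human_fields())  # ['reviewer', 'human_decision', 'rationale']
print(reviewed.missing_human_fields(), reviewed.is_override)  # [] True
```

A periodic check that counts records with missing human fields, and tracks how often overrides actually occur, is one simple way to test whether override authority is real or only on paper.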

We Went Looking for Who Actually Solves This and Found ForceTechh

After mapping the Workday failure chain, we did what GAIG does: we went looking for who addresses the specific problem underneath the headline. Not the AI model bias problem. The workflow governance problem. The human accountability gap. The documented misalignment between what organizations say their processes do and what those processes actually do when the AI is running in production.

Most of what we found addresses the technology layer. Bias detection platforms. Algorithmic auditing tools. Model monitoring dashboards. All useful for different things. None of them solve the problem Workday revealed — because that problem was never in the model. It was in the organizational structure around it, in the handoffs between teams, in the gap between the documentation and the reality.

Then ForceTechh came across our desk. Small company. Mays Landing, New Jersey. Founded and led by Jeffrey H. Hughes Jr. The company holds a CAGE code (0QD17) and a UEI, identifiers that signal registration for U.S. federal contracting. No enterprise software product. No AI-powered dashboard. What they have is a structured operational intelligence framework, called S.A.I.P.H., that does something almost no AI vendor bothers with: it maps how your actual workflows function before and after you introduce automation, identifies where accountability degrades across handoffs, and validates in real-world conditions that what your documentation says happens is what actually happens.

ForceTechh — Operational Intelligence

Mays Landing, NJ

Small Business

CAGE: 0QD17 · UEI: TABPQ97FNS87

ForceTechh was established to help organizations address operational systems challenges involving systems coordination, workforce systems alignment, operational workflows, and organizational resilience. Through collaborative operational intelligence engagements, structured systems evaluation, and human-led workflow analysis, ForceTechh helps organizations identify system inefficiencies, coordination gaps, operational exposures, and opportunities to improve systems performance, operational stability, and long-term organizational effectiveness.

The company's primary differentiator is methodology. ForceTechh uses a systems-level operational intelligence approach designed to evaluate how workforce systems, operational workflows, organizational coordination, and resilience structures interact across complex environments — treating the organization as an interconnected operating system rather than a collection of independent processes. That framing is directly relevant to where Workday's governance failed.

Primary Contact: Jeffrey H. Hughes Jr., Owner/CEO

Coverage: Nationwide

Classification: Small Business

Contact: saiphassess@forcetechh.com · 302-401-1044

The S.A.I.P.H. Framework: What It Does and Why It Matters Here

We spoke to Jeffrey Hughes, ForceTechh's CEO, who walked us through the framework. S.A.I.P.H. is the operational intelligence lifecycle that ForceTechh runs its engagements through. It's a four-stage process that evaluates three things most organizations have never formally assessed: how their operational systems actually function in practice, how resilient those systems are under real pressure, and how their workforce coordination structures either support or undermine the processes they've documented.

The framework operates through four sequential phases:

Discovery and Alignment

Establish operational context. Identify stakeholders and workflow priorities. The outcome is a shared operational understanding and aligned engagement scope — meaning ForceTechh and the client agree on what is actually being evaluated before any analysis begins. This step alone prevents the scope drift that causes most governance reviews to miss the actual failure points.

System Analysis

Evaluate workflows and system interactions. Assess coordination, dependencies, and continuity readiness. This is where ForceTechh looks at how work actually moves through the organization — not how the process documentation says it moves, but how it actually moves in production conditions. This is the step that would have caught the Workday failure before it scaled to 1.1 billion decisions.

Findings and Insights

Consolidate operational observations and prioritize organizational impact areas. The output is structured operational intelligence — not a list of theoretical risks, but a prioritized map of where the actual friction and dependency failures live. Specific, named, ranked by operational impact.

Recommendations and Roadmap

Deliver operational guidance and strategic direction. Establish phased alignment priorities. The client leaves with a defined operational roadmap — not a deck of suggestions, but a sequenced plan for closing the gaps that were actually found. ForceTechh also offers continued implementation support for organizations that want to move from visibility into execution.

The Systems Integrity Audit — an advanced diagnostic layer within S.A.I.P.H. — is where this gets specifically relevant to the Workday failure pattern. It explicitly surfaces misalignment between documented processes and actual execution, identifies breakdown points between functions where accountability degrades, and finds what ForceTechh calls "human node overload" — single points of human failure where one person's absence or overwhelm causes the whole accountability structure to collapse. It is human-led, not automated. That distinction is the point.
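The audit itself is human-led, and nothing below is ForceTechh's method. Still, the underlying idea of single points of human failure can be illustrated with a few lines of Python over a workflow map that a team might write down during such a review. The decision points, names, and the overload threshold are hypothetical assumptions for the sketch.

```python
from collections import Counter

# HYPOTHETICAL workflow map: decision point -> named people who own it.
WORKFLOW_OWNERS = {
    "resume_screen_override": ["A. Rivera"],
    "bias_audit_signoff": [],
    "vendor_config_change": ["A. Rivera", "J. Chen"],
    "rejection_appeal_review": ["A. Rivera"],
}


def unowned_points(owners: dict) -> list:
    """Decision points where accountability lives nowhere."""
    return sorted(step for step, people in owners.items() if not people)


def single_owner_points(owners: dict) -> list:
    """Decision points that stall if one person is absent."""
    return sorted(step for step, people in owners.items() if len(people) == 1)


def overloaded_people(owners: dict, max_points: int = 2) -> list:
    """People who own more decision points than the assumed threshold."""
    load = Counter(person for people in owners.values() for person in people)
    return sorted(person for person, count in load.items() if count > max_points)


print("unowned:", unowned_points(WORKFLOW_OWNERS))
print("single owner:", single_owner_points(WORKFLOW_OWNERS))
print("overloaded:", overloaded_people(WORKFLOW_OWNERS))
```

The code is trivial on purpose. The hard part is the input: getting an honest map of who really owns each decision, which is the kind of finding a human-led review is meant to produce.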

How ForceTechh's Approach Maps to Each Workday Failure

The three governance failures in the Workday case map directly to what the S.A.I.P.H. framework is designed to find and fix before deployment, not after the lawsuit.

| Workday Failure | What Was Missing | ForceTechh Addresses It By |
|---|---|---|
| Human Override Gap | No named person had authority to question or override the AI's screening decisions. Accountability for outcomes lived nowhere. | Identifying who owns each decision point in the workflow and whether that ownership is real or theoretical. If override authority exists only on paper, the engagement surfaces it before the AI goes live. |
| Audit Theater Problem | The bias audit was conducted using methodology chosen by the party being tested. Same data, opposite conclusions, no independent validation. | Validating in real-world conditions that the process functions as documented: not in a controlled environment, not using the vendor's preferred framework, but under actual operational conditions with actual workflow friction included. |
| Accountability Handoff Gap | Liability lived in the space between Workday and its employer clients. Neither party owned the outcome when the handoff broke down. | Mapping exactly where work, data, and accountability degrade across teams and systems. The specific output of the Systems Integrity Audit is a mapped view of operational weak points at the handoff level, which is precisely where the Workday failure chain broke. |

Worth saying directly: ForceTechh is a small, human-led consulting firm. They're not a software platform. They don't have a SaaS product. For some organizations, that's a limitation. For the specific problem the Workday case revealed — which was never a software problem — it is precisely the right kind of engagement. The failure was human and structural. The fix has to be human and structural.

On Their Approach

ForceTechh's "operational" engagement philosophy states that they prioritize operational visibility before intrusive intervention, allowing organizations to maintain ownership, operational control, and workflow continuity throughout engagement activities. Standard engagements are designed around collaborative operational review, guided walkthroughs, workflow analysis, and strategic visibility rather than unrestricted production-system access. That is exactly the posture that would have given Workday's employer clients the documented validation they cannot currently produce in discovery.

Sources: Mobley v. Workday, Inc., N.D. Cal. Case No. 23-cv-00770-RFL (court records); EEOC Amicus Brief (Apr. 2024); Fisher Phillips LLP analysis (May 2025); OutSolve HR analysis (Apr. 2026); InformationWeek, "AI on Trial: The Workday Case That CIOs Can't Ignore" (May 2026); HR Morning (May 2025); Law and the Workplace (Jun. 2025); ForceTechh S.A.I.P.H. Framework documentation (provided materials, Apr. 2026).

Our Take

Pretty much every company using AI for hiring, performance reviews, or any other people decisions is carrying the same exposure right now — if they haven’t actually checked that what the tool does in real life matches what’s written in their documentation, and that real humans are clearly accountable for the outcomes.

Courts are already assigning liability, and private class actions are picking up speed no matter what happens with federal regulators. When discovery hits in the Workday case, companies are going to have to show exactly who owned each decision, how overrides worked, and how they proved the tool behaved the way the vendor promised. Most organizations simply won’t be able to answer those questions.

The fix isn’t a smarter AI model. It’s the governance and workflow validation work that should have been done before the system ever went live. It’s hard, slow, human work — exactly why most AI vendors skip it. They weren’t required to do it until judges started certifying nationwide class actions.

That’s the work ForceTechh actually does. We came across them while mapping out this exact problem, and the fit is genuinely strong. This isn’t a random plug. The problem is structural, so the solution has to be too.