Why This Was Inevitable

Vercel sits at one of the most privileged positions in modern software infrastructure. It is the deployment layer. Teams building production applications on Vercel hand it something more sensitive than almost any other vendor relationship: their environment variables. Database connection strings, authentication secrets, API keys for payment processors, internal service tokens, OAuth credentials. These are not configuration details — they are the operational keys to downstream applications that may serve millions of users.

Third-party AI tools have been proliferating across enterprise development workflows for the past two years without a governance model that matches their access. Developer tools with AI features get integrated into internal pipelines, granted OAuth scopes, connected to internal Linear boards, GitHub repositories, and deployment dashboards. Every one of those integrations creates a trust relationship. Every trust relationship is an attack surface. And in most engineering organizations, neither governance nor security teams have a complete inventory of which AI tools have been granted what access to which internal systems.

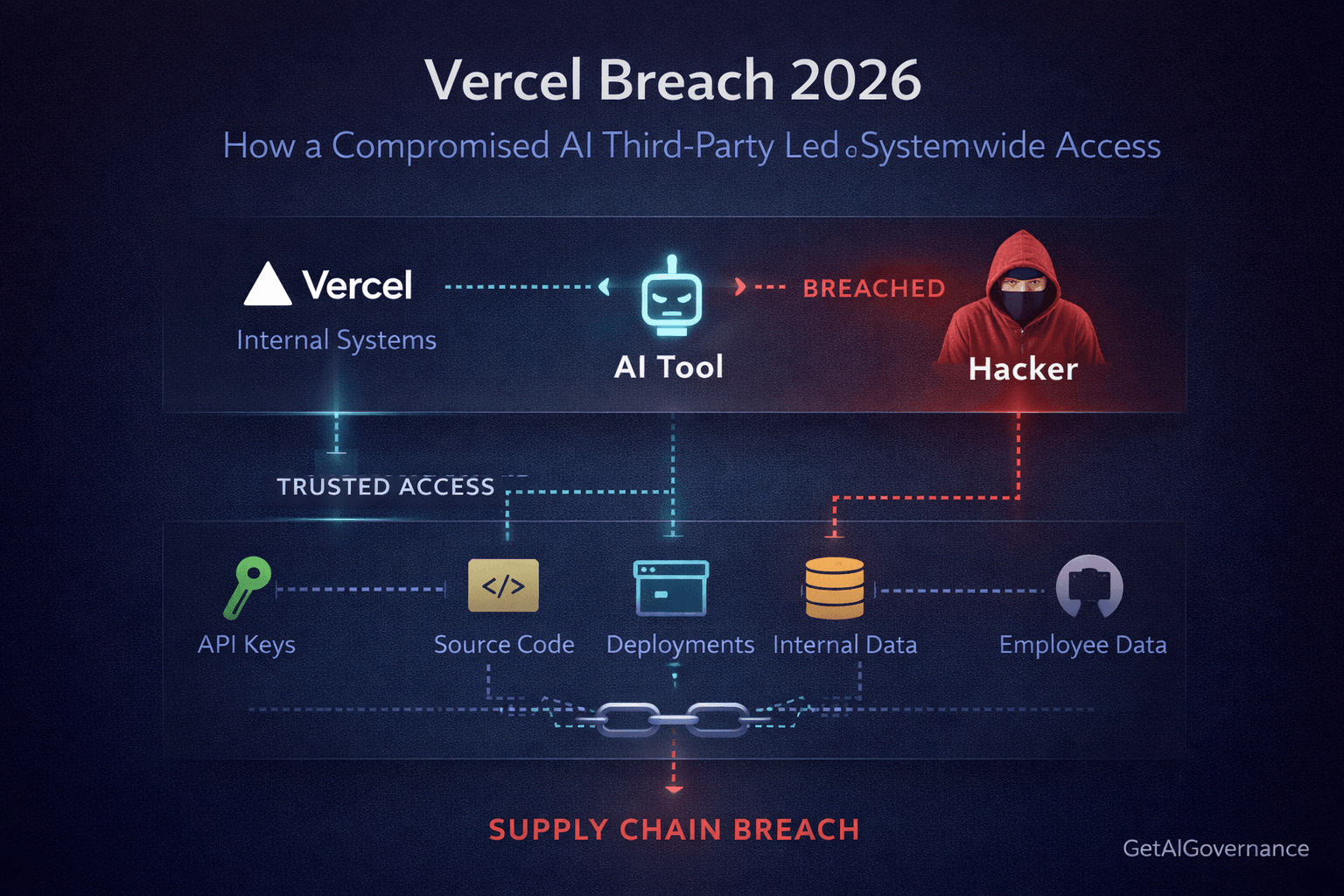

Vercel confirmed the attack vector: a compromised third-party AI tool. The platform was not penetrated through a vulnerability in Vercel's own code. It was penetrated through a trust relationship that already existed. That is not bad luck. That is a predictable failure mode for any organization that has not built a formal AI tool access governance layer — and most have not.

What Actually Happened

On April 19, 2026, a security bulletin from Vercel confirmed a breach: unauthorized access to certain internal systems, a limited subset of customers affected, external incident response experts engaged, law enforcement notified. The company said its platform remained operational and that Next.js, Turbopack, and its open-source projects were unaffected.

The confirmation came after a threat actor posting under the ShinyHunters name listed the breach on a hacking forum. BleepingComputer reported on the post's contents: access keys, source code, database data, multiple employee accounts with access to internal deployments, and API keys including NPM and GitHub tokens. The attacker also shared a text file of 580 employee records containing names, Vercel email addresses, account statuses, and activity timestamps. A screenshot of what appeared to be an internal Vercel Enterprise dashboard was shared on Telegram alongside the listing.

The attacker claimed to be in contact with Vercel about the incident and alleged a $2 million ransom demand. Developer Theo Browne noted publicly that Vercel's Linear and GitHub integrations bore the brunt of the attack. Vercel CEO Guillermo Rauch later confirmed the mechanism:

"We do have a capability to designate environment variables as 'non-sensitive.' Unfortunately, the attacker got further access through their enumeration."

Vercel CEO Guillermo Rauch

Environment variables marked as sensitive remained protected. Variables not explicitly designated as sensitive did not.

April 19, 2026 — Morning

Threat actor posts on hacking forum claiming to sell Vercel internal access, API keys, source code, and employee data for $2 million.

April 19, 2026 — Afternoon

Vercel publishes security bulletin confirming unauthorized access to internal systems, acknowledging a limited subset of customers was affected.

April 19, 2026 — Evening

Vercel CEO Guillermo Rauch discloses the specific mechanism: the attacker gained further access through enumeration of non-sensitive environment variables. Vercel rolls out updated dashboard tooling for managing sensitive variable designation.

April 19, 2026 — Ongoing

Investigation continues. Customers advised to review environment variables, rotate credentials, and enable the sensitive variable feature. Multiple ShinyHunters-linked actors deny involvement, suggesting attribution remains unclear.

Vercel identified the entry point as a compromised third-party AI tool integrated into its internal workflows but has not disclosed the vendor. The attacker used the access gained through that tool to enumerate environment variables, extract unprotected credentials, and move into internal Linear and GitHub integrations. The ransom demand was communicated through Telegram. As of publication, BleepingComputer had not independently verified the authenticity of all the data the attacker claimed to hold.

The Chain of Failure

This did not happen because a clever attacker found a zero-day in Vercel's platform code. It happened because a third-party AI tool with internal access was compromised, and the controls that should have limited, monitored, and bounded that access were either absent or insufficiently enforced. Each missing control removed a check that could have stopped the next step in the chain.

No formal third-party AI tool access inventory

An AI tool was integrated into Vercel's internal workflows and granted access to critical systems including Linear, GitHub, and the deployment environment. There is no indication this tool's permissions were formally scoped, registered in an access control inventory, or reviewed against a least-privilege standard. Third-party AI tools with internal access are trust relationships — and trust relationships without formal governance are open doors.

Broad OAuth scope granted to the AI integration

Through the compromised tool, the attacker reached Linear, GitHub and NPM tokens, and internal deployment dashboards. That reach implies the AI tool held OAuth permissions broad enough to cover all of them, whether granted explicitly or obtained through credentials available in the environment. Scoped, time-limited credentials with the minimum necessary access would have limited the blast radius to a fraction of what was exposed.
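To make the blast-radius point concrete, here is a minimal Python sketch that models each credential as the set of systems it can reach and computes what an attacker gains by compromising a given set of credentials. The `Credential` type, the `blast_radius` helper, and the system labels are hypothetical illustrations, not Vercel's or any provider's actual scope model.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Credential:
    name: str
    scopes: FrozenSet[str]  # hypothetical labels for the systems this credential reaches


def blast_radius(compromised: List[Credential]) -> Set[str]:
    """Union of every system reachable through the compromised credentials."""
    exposed: Set[str] = set()
    for cred in compromised:
        exposed |= cred.scopes
    return exposed


# One broad integration credential covering every system at once.
broad = Credential("ai-tool-integration",
                   frozenset({"linear", "github", "npm", "deployments"}))

# The same access split into independent per-system credentials.
split = [
    Credential("ai-tool-linear", frozenset({"linear"})),
    Credential("ai-tool-github", frozenset({"github"})),
    Credential("ai-tool-npm", frozenset({"npm"})),
    Credential("ai-tool-deploy", frozenset({"deployments"})),
]

print(sorted(blast_radius([broad])))    # ['deployments', 'github', 'linear', 'npm']
print(sorted(blast_radius(split[:1])))  # ['linear']
```

Splitting access only narrows exposure if the tool never holds every per-system credential at the same moment; compromising all four split credentials yields the same union as the broad one. That is why per-system scoping is normally paired with task-time issuance and short lifetimes.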

Sensitive variable designation not enforced as a default

Vercel's platform supports a "sensitive" designation for environment variables that makes their values unreadable once created. The attacker enumerated and extracted variables that had not been designated sensitive. The feature existed; the default was not to use it. A governance posture that treats every environment variable as sensitive by default unless it is explicitly de-classified would have materially reduced what was accessible through enumeration.

No anomaly detection on internal tool access patterns

The attacker enumerated environment variables across internal systems — a behavior that produces a distinctive access pattern. Enumeration generates volume. It touches many resources in sequence. Behavioral monitoring on internal API access that establishes a baseline and flags deviation would have surfaced this pattern before credential extraction was complete. Nothing flagged it.

Supply chain trust assumptions not validated continuously

An AI tool that was trusted yesterday is not automatically trustworthy today. Third-party tools can be compromised between the moment they are granted access and the moment they are used. Continuous validation of third-party AI tool integrity — whether through attestation, behavioral monitoring of outbound activity, or regular scope review — would have provided a mechanism to detect compromise before the tool's existing access was weaponized.

The Governance Gaps — How the Layers Failed Each Other

The failure is cross-layer and interconnected. Security, governance, compliance, and monitoring each had a contribution to make. None of them made it. Here is exactly how each missing control left the next layer with nothing to catch.

| Layer | Specific Gap | What It Left Exposed |
|---|---|---|
| Governance | No AI tool access registry. The third-party AI tool was integrated and granted internal access without formal scoping, documentation, or risk review. | Without a registry entry, no downstream controls could trigger. Risk classification, access scoping, and audit requirements all depend on a formal integration record. All were bypassed. |
| Security | No third-party access controls enforcing least privilege. The AI tool held OAuth scopes covering Linear, GitHub, NPM tokens, and deployment environments simultaneously. | When the tool was compromised, every scope it held was available to the attacker immediately. Scoped, task-bounded credentials would have constrained each access path independently. |
| Security | No runtime enforcement on environment variable access. Sensitive designation was opt-in rather than default. Non-sensitive variables were enumerable without additional access controls. | The attacker enumerated accessible variables systematically. A default-sensitive posture with explicit de-classification required would have reduced the enumerable surface to near zero. |
| Monitoring | No behavioral anomaly detection on internal API access. Enumeration of environment variables across multiple systems produces a distinctive access volume pattern. | The attacker operated inside the trusted access perimeter of the compromised tool without triggering any behavioral signal. Baseline deviation monitoring would have flagged the enumeration pattern before extraction completed. |
| Compliance | No continuous attestation or integrity validation of third-party AI tool access. The tool's trustworthiness was assumed at integration time and never re-evaluated. | Tool compromise between grant and use is undetectable without continuous validation. Standing access granted at integration time remained valid after the tool was compromised. |
| Compliance | No audit trail granular enough to reconstruct the access chain before the breach was publicly disclosed. Vercel's investigation is ongoing, implying limited pre-breach visibility into what the AI tool was accessing. | Without immutable, granular logs of third-party tool activity, the organization cannot distinguish legitimate tool behavior from attacker behavior until after the fact. |

The governance layer's failure was first in the chain. No registry entry means no risk classification. No risk classification means no mandatory access scoping. No access scoping means overpermissioned credentials exist by default. No scoped credentials means full blast radius when any one of those credentials is compromised. The security failures that followed were made structurally possible by the governance failure that preceded them.

What It Actually Cost and Which Gap Owns It

Direct Cost

A $2 million ransom demand. External incident response engagement. Law enforcement involvement. Ongoing forensic investigation. Customer notification and direct support for credential rotation across an affected subset of a platform serving thousands of development teams. A post-breach dashboard redesign and a rollout of updated sensitive-variable tooling under crisis conditions.

Supply Chain Cost

Vercel's infrastructure position makes this incident structurally different from a typical data breach. API keys and GitHub tokens exposed through Vercel are not just Vercel's problem — they are keys to downstream applications. Crypto and Web3 projects built on Vercel were auditing their exposure within hours, operating under the assumption that any data in Vercel's environment during the compromise window should be treated as potentially accessible. RPC endpoints, wallet-adjacent credentials, third-party service tokens. The downstream remediation burden across Vercel's customer base is substantially larger than the breach surface of Vercel itself.

Business Trajectory Cost

Vercel had reported 240% revenue growth and was preparing for an IPO at the time of this incident. Enterprise accounts, its fastest-growing segment, are the most sensitive to breach narratives and the most expensive to win back. A security incident during a pre-IPO quiet period carries additional constraints on investor and public communication. The reputational cost of a supply chain breach at the infrastructure layer, disclosed the same day a threat actor listed the data publicly, is not recoverable through a dashboard update.

Which gap owns the cost: The third-party AI access control gap owns the majority of it. Everything downstream — the enumeration, the credential extraction, the GitHub and NPM token exposure — flowed through the permissions that existed because the AI tool was granted broad internal access without formal scoping. If the access control gap had been closed at integration time, the compromised tool would have had a fraction of the access it held. The blast radius narrows proportionally to how tightly credentials are scoped.

What Having It Right Would Have Looked Like

The controls that would have changed the outcome here are not exotic. They map directly to established agentic identity and access management principles and to the third-party access controls in any mature AI security framework. None of them require building something new — they require applying existing infrastructure rigorously to AI tool integrations specifically.

Third-Party AI Tool Access Registry

Every AI tool integrated into internal workflows gets a formal entry: the systems it connects to, the OAuth scopes it requested, the scopes it was granted, who approved it, and when it was last reviewed. The integration cannot proceed without the entry. The entry triggers a risk classification, and the risk classification determines the maximum permissible scopes.
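A minimal sketch of such a registry, assuming a reviewer assigns each tool a risk tier and a policy table caps the scopes each tier may hold. `AccessRegistry`, `RiskTier`, `MAX_SCOPES`, and the scope strings are all hypothetical names for illustration; a real registry would live in the organization's IAM or governance tooling rather than in memory.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Dict, FrozenSet, List


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical policy: the maximum scopes a tool in each tier may ever be granted.
MAX_SCOPES: Dict[RiskTier, FrozenSet[str]] = {
    RiskTier.LOW: frozenset({"linear:read"}),
    RiskTier.MEDIUM: frozenset({"linear:read", "github:read"}),
    RiskTier.HIGH: frozenset({"linear:read", "linear:write", "github:read", "github:write"}),
}


@dataclass
class RegistryEntry:
    tool: str
    systems: List[str]
    requested_scopes: FrozenSet[str]
    approved_by: str
    last_reviewed: date
    risk_tier: RiskTier  # assigned during review (assumption)
    granted_scopes: FrozenSet[str] = frozenset()


class AccessRegistry:
    """Hypothetical in-memory registry: no entry means no integration."""

    def __init__(self) -> None:
        self._entries: Dict[str, RegistryEntry] = {}

    def register(self, entry: RegistryEntry) -> RegistryEntry:
        # Grant only what was requested AND what the risk tier permits.
        entry.granted_scopes = entry.requested_scopes & MAX_SCOPES[entry.risk_tier]
        self._entries[entry.tool] = entry
        return entry

    def authorize_integration(self, tool: str) -> FrozenSet[str]:
        entry = self._entries.get(tool)
        if entry is None:
            raise PermissionError(f"{tool} has no registry entry; integration blocked")
        return entry.granted_scopes


registry = AccessRegistry()
registry.register(RegistryEntry(
    tool="ai-assistant",
    systems=["linear", "github"],
    requested_scopes=frozenset({"linear:read", "github:write"}),
    approved_by="security-review",
    last_reviewed=date(2026, 1, 15),
    risk_tier=RiskTier.MEDIUM,
))
print(sorted(registry.authorize_integration("ai-assistant")))  # ['linear:read']
```

The requested `github:write` scope is silently dropped because the medium tier does not allow it, and a tool with no entry at all is refused outright rather than falling back to whatever it asked for.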

Scoped, Short-Lived Credentials at Integration

The AI tool does not receive standing access to Linear, GitHub, NPM, and deployment environments simultaneously through a single integration. It receives scoped credentials for each system independently, issued at task time with short TTLs. Compromise of one credential set does not transfer to others. The attacker who compromised the tool gets the scope of one task, not the full integration footprint.
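One way to sketch task-time, per-system credentials is a broker that signs a small payload naming one system, its scopes, a task ID, and an expiry, using Python's standard `hmac` module. The broker key, payload fields, and scope strings here are assumptions for illustration; a production setup would more likely rely on the provider's own short-lived tokens or a vetted token library than on a hand-rolled format.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

# Assumption: only the credential broker holds this key, never the AI tool itself.
BROKER_KEY = b"example-key-do-not-use"


def _sign(body: str) -> str:
    return hmac.new(BROKER_KEY, body.encode(), hashlib.sha256).hexdigest()


def issue_token(system: str, scopes: List[str], task_id: str,
                ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Mint a credential valid for one system, one task, and a short TTL."""
    issued = time.time() if now is None else now
    payload = {"sys": system, "scopes": sorted(scopes), "task": task_id,
               "exp": issued + ttl_seconds}
    body = base64.urlsafe_b64encode(json.dumps(payload, sort_keys=True).encode()).decode()
    return f"{body}.{_sign(body)}"


def verify_token(token: str, system: str, scope: str,
                 now: Optional[float] = None) -> bool:
    """Accept only an untampered, unexpired token for this exact system and scope."""
    body, _, sig = token.rpartition(".")
    # Constant-time comparison on bytes, so odd input cannot raise.
    if not body or not hmac.compare_digest(sig.encode(), _sign(body).encode()):
        return False
    payload = json.loads(base64.urlsafe_b64decode(body.encode()))
    current = time.time() if now is None else now
    return (payload["sys"] == system
            and scope in payload["scopes"]
            and current < payload["exp"])


t0 = 1_000_000.0
github_token = issue_token("github", ["repo:read"], "task-42", now=t0)
print(verify_token(github_token, "github", "repo:read", now=t0 + 10))   # True
print(verify_token(github_token, "linear", "repo:read", now=t0 + 10))   # False: wrong system
print(verify_token(github_token, "github", "repo:read", now=t0 + 600))  # False: expired
```

Because each token names a single system, a stolen GitHub token cannot be replayed against Linear, and it stops working once the TTL lapses.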

Sensitive-by-Default Environment Variable Posture

All environment variables are treated as sensitive unless explicitly de-classified through a review process. The designation is not opt-in. The attacker's enumeration finds nothing accessible without traversing a classification boundary — which requires permissions that a compromised AI tool does not hold.
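A minimal sketch of a sensitive-by-default store, assuming that listing variables and injecting them into a build are separate code paths. `EnvStore`, `declassify`, and the review-ticket argument are hypothetical; this is not Vercel's API, only an illustration of inverting the default so that enumeration returns keys without values.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class EnvVar:
    value: str
    sensitive: bool = True  # sensitive unless a review explicitly says otherwise


class EnvStore:
    """Hypothetical store where enumeration never reveals sensitive values."""

    def __init__(self) -> None:
        self._vars: Dict[str, EnvVar] = {}

    def put(self, key: str, value: str) -> None:
        # Every new variable starts sensitive; there is no opt-out at creation time.
        self._vars[key] = EnvVar(value)

    def declassify(self, key: str, review_ticket: Optional[str]) -> None:
        # De-classification requires a recorded review (ticket format is an assumption).
        if not review_ticket:
            raise PermissionError(f"declassifying {key} requires an approved review")
        self._vars[key].sensitive = False

    def enumerate_vars(self) -> Dict[str, Optional[str]]:
        # What any caller with list access sees: sensitive values are withheld.
        return {k: (None if v.sensitive else v.value) for k, v in self._vars.items()}

    def inject_into_build(self, key: str) -> str:
        # Hypothetical deploy-time path; the only method that returns a sensitive value.
        return self._vars[key].value


store = EnvStore()
store.put("DATABASE_URL", "postgres://user:secret@db.internal/app")
store.put("PUBLIC_SITE_NAME", "demo")
store.declassify("PUBLIC_SITE_NAME", review_ticket="SEC-101")
print(store.enumerate_vars())
# {'DATABASE_URL': None, 'PUBLIC_SITE_NAME': 'demo'}
```

The point of the inversion: forgetting to act leaves a variable protected, whereas under an opt-in model forgetting to act leaves it enumerable.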

Behavioral Monitoring on Third-Party Tool Access

A baseline is established for what normal AI tool API activity looks like. Volume, access patterns, resource types, time-of-day distribution. Enumeration deviates from all of them. The anomaly surfaces in monitoring before extraction is complete. Security operations can revoke credentials while the attacker is still mapping the environment.
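A minimal sketch of baseline-deviation monitoring for one signal: the number of distinct resources a principal touches inside a sliding window. It assumes historical per-window counts are available to set the baseline and uses a mean-plus-k-standard-deviations threshold. `EnumerationDetector`, the window length, and `k` are illustrative choices; a real deployment would combine several signals (volume, resource types, time of day) rather than rely on this one.

```python
from collections import defaultdict, deque
from statistics import mean, pstdev
from typing import Deque, Dict, List, Tuple


class EnumerationDetector:
    """Flags a principal whose distinct-resource count in a window exceeds its baseline."""

    def __init__(self, window_seconds: float, baseline_counts: List[int],
                 k: float = 3.0, min_threshold: int = 5) -> None:
        # baseline_counts: historical distinct-resource counts per window (must be non-empty).
        self.window = window_seconds
        self.threshold = max(min_threshold,
                             mean(baseline_counts) + k * pstdev(baseline_counts))
        self._events: Dict[str, Deque[Tuple[float, str]]] = defaultdict(deque)

    def observe(self, principal: str, resource: str, ts: float) -> bool:
        """Record one access and return True if the window now looks like enumeration."""
        events = self._events[principal]
        events.append((ts, resource))
        # Evict accesses that have aged out of the sliding window.
        while events and events[0][0] <= ts - self.window:
            events.popleft()
        distinct = len({r for _, r in events})
        return distinct > self.threshold


detector = EnumerationDetector(window_seconds=60, baseline_counts=[2, 3, 2, 4, 3])

# Normal tool behavior: the same three projects, over and over.
normal = [detector.observe("ai-tool", f"project-{i % 3}/env", ts=float(i))
          for i in range(30)]

# Enumeration: a new project's variables every second.
sweep = [detector.observe("ai-tool-compromised", f"project-{i}/env", ts=100.0 + i)
         for i in range(20)]

print(any(normal))        # False
print(sweep.index(True))  # 5: flagged on the sixth distinct resource
```

Repeated access to a small, familiar set of resources stays under the threshold no matter how many calls are made; it is the breadth of a sweep, not raw call volume, that trips this particular check.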

Continuous Attestation on Active Integrations

Trust granted at integration time is not permanent. Active AI tool integrations are periodically re-validated — behavioral checks, scope audits, vendor security posture reviews. A tool that has been compromised between grant and use fails attestation before it can weaponize its existing access.
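A minimal sketch of a periodic attestation check, assuming the organization can query each provider for the scopes an integration currently holds and can receive anomaly signals from monitoring. `Integration`, `attest`, and both review intervals are hypothetical; the intervals in particular would normally come from the tool's risk tier rather than from fixed constants.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import FrozenSet, List, Set

# Hypothetical policy intervals; real values would depend on the tool's risk tier.
ATTESTATION_INTERVAL = timedelta(days=30)
VENDOR_REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class Integration:
    tool: str
    approved_scopes: FrozenSet[str]  # what the registry approved
    last_attested: date
    vendor_review: date              # date of the last vendor security posture review
    active: bool = True


def attest(integ: Integration, today: date, live_scopes: Set[str],
           anomaly_flagged: bool) -> List[str]:
    """Re-validate an active integration; revoke it if any check fails."""
    failures: List[str] = []
    if today - integ.last_attested > ATTESTATION_INTERVAL:
        failures.append("attestation expired")
    if today - integ.vendor_review > VENDOR_REVIEW_INTERVAL:
        failures.append("vendor security review stale")
    drift = live_scopes - integ.approved_scopes
    if drift:
        failures.append(f"scope drift: {sorted(drift)}")
    if anomaly_flagged:
        failures.append("behavioral anomaly reported")
    if failures:
        integ.active = False  # standing access does not survive a failed check
    else:
        integ.last_attested = today
    return failures


tool = Integration("ai-assistant", frozenset({"linear:read"}),
                   last_attested=date(2026, 3, 1), vendor_review=date(2026, 2, 1))
print(attest(tool, date(2026, 3, 20), {"linear:read"}, anomaly_flagged=False))  # []
print(attest(tool, date(2026, 3, 25), {"linear:read", "github:write"},
             anomaly_flagged=False))
# ["scope drift: ['github:write']"]
print(tool.active)  # False
```

The design choice is that a failed check removes access rather than logging a warning, so a tool compromised after its original grant loses its standing access at the next attestation.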

Our Take

AI Security Take

There is a pattern running through a significant share of the AI security incidents of the past two years: the entry point is not a vulnerability in the primary target's own systems. It is a trust relationship. A third-party AI tool, an OAuth integration, a development workflow automation, a sanctioned-but-unscoped connection granted during a productivity initiative. The attacker does not break in through the wall. They walk in through the door that was already open.

Vercel is an unusually high-value target for this pattern because of its position in the deployment stack. The credentials stored on Vercel are not just Vercel's credentials — they are the operational keys to every application built on top of it. A supply chain breach at the infrastructure layer produces downstream exposure that is structurally disproportionate to the breach surface of the platform itself. That makes third-party AI tool access governance not a Vercel-specific problem but an industry-wide posture question that every organization running AI tools inside their development pipeline needs to answer.

The CoSAI Agentic IAM framework, published March 2026 and co-authored by contributors from IBM, Google, Intel, Anthropic, Palo Alto Networks, Amazon, and others, describes exactly this failure mode: third-party agents and integrations with broad, standing OAuth access that was granted at integration time and never formally governed or continuously validated. The framework calls for short-lived, task-scoped credentials; formal third-party access registries; and continuous attestation of active integrations. The Vercel breach is a documented production case study for every recommendation in that document.