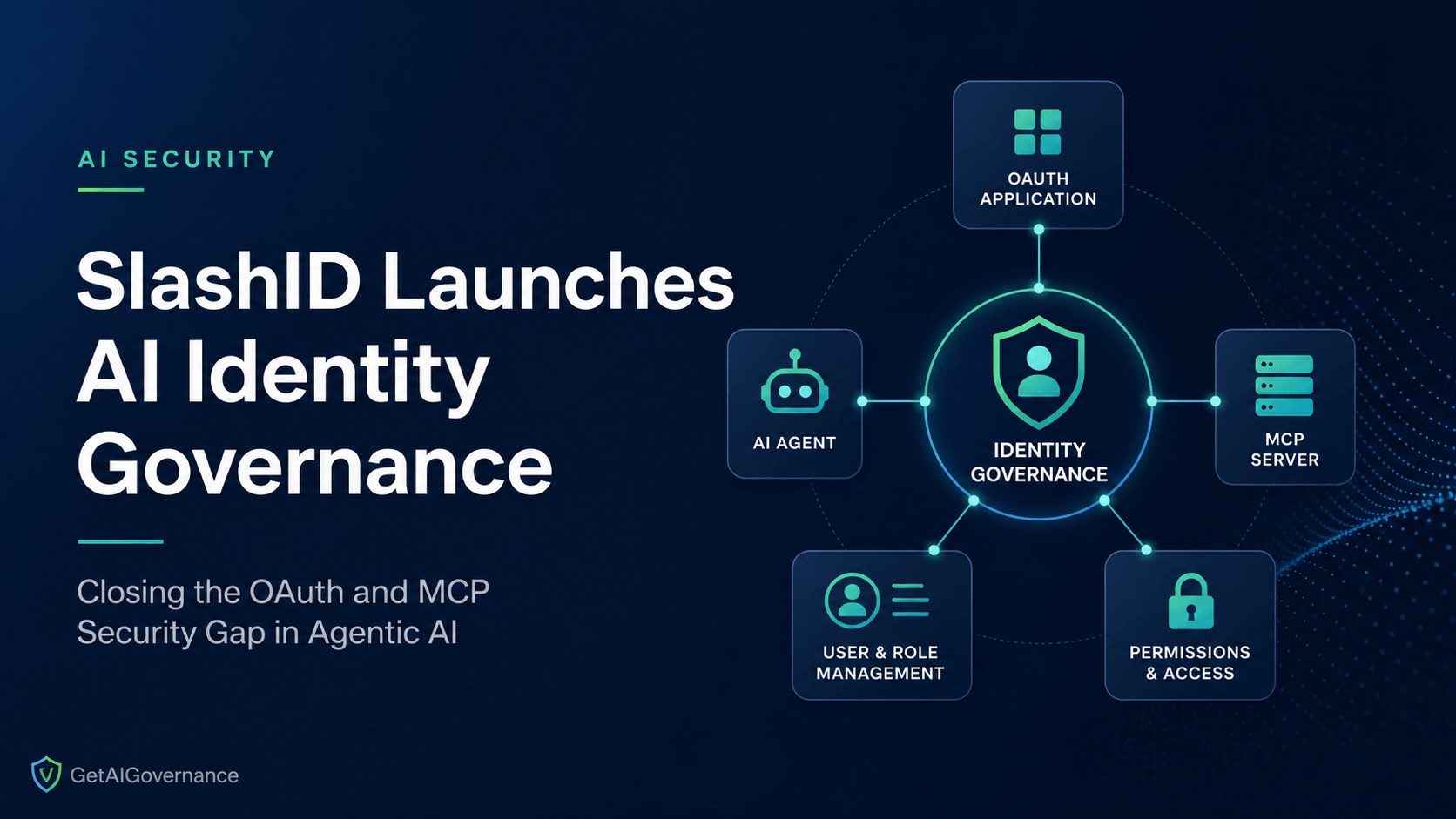

SlashID launched AI Identity Governance on May 5, 2026, making a direct architectural claim: organizations cannot govern AI agents by extending traditional identity tools built for human users. The platform targets a specific and largely unaddressed problem — the sprawl of OAuth tokens, MCP server credentials, and agent permissions that accumulates when enterprises deploy autonomous AI at scale.

The announcement lands against a backdrop of documented failures. In April 2026, Vercel disclosed that attackers compromised an employee's Google Workspace account through a malicious OAuth 2.0 application from Context.ai, a third-party AI tool. The attacker didn't exploit a vulnerability in the traditional sense. They inherited trust that an employee had already granted through a standard OAuth authorization. SlashID's Identity Graph had already been tracking that incident category: OAuth grants to AI applications proliferating without centralized visibility or revocation capability.

The product addresses three surfaces simultaneously: OAuth-connected AI applications, autonomous agents operating under service accounts, and MCP servers that have become the dominant interface layer between AI agents and enterprise systems. Whether the architecture holds under scrutiny is the question worth asking.

Key Terms

OAuth token sprawl: the accumulation of OAuth 2.0 grants across an organization where AI applications and agents have been authorized to access systems, often with overly broad scopes and no active monitoring of whether those grants are still current or necessary.

MCP (Model Context Protocol): the open standard, now governed by the Linux Foundation's Agentic AI Foundation, that allows AI agents to connect to external tools, data sources, and services in a standardized way. As of early 2026, the protocol has over 10,000 active public servers and 164 million monthly Python SDK downloads. Every MCP server connection is an access relationship that requires governance.

Access graph: a representation of every identity, credential, OAuth grant, and permission in an environment as a connected map, showing not just what access exists but how that access connects to other identities and systems. The SlashID claim is that this graph-native structure is what makes agent governance possible where traditional IAM tools fail.

Permission creep (drift): the gradual expansion of an agent's or application's permissions over time, often without a corresponding business justification, as new OAuth scopes are granted and old ones are never revoked.
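To make the last definition concrete, here is a minimal sketch of how drift could be measured by comparing two snapshots of the scopes held by one grant. The function name and the scope strings are hypothetical and chosen for illustration only; they are not taken from SlashID or from any identity provider.

```python
def scope_drift(baseline, current):
    """Return scopes added and removed between two snapshots of one grant."""
    baseline, current = set(baseline), set(current)
    return {"added": current - baseline, "removed": baseline - current}


# Hypothetical snapshots of the same application's grant, months apart.
january = {"calendar.read"}
june = {"calendar.read", "mail.send", "drive.write"}

drift = scope_drift(january, june)
print(sorted(drift["added"]))    # scopes gained since the baseline
print(sorted(drift["removed"]))  # scopes revoked since the baseline
```

A grant whose "added" set keeps growing while "removed" stays empty matches the pattern the definition describes.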

Conditions Driving This Launch

MCP has become the de facto standard for how AI agents connect to the outside world, with the Linux Foundation confirming over 10,000 active public MCP servers and tens of millions of monthly SDK downloads as of early 2026. Every one of those connections is an access relationship with credentials attached to it.

In a typical 10,000-person organization, research from Clutch Security found that 15.28% of employees are running an average of 2 MCP servers each — totaling over 3,000 deployments — with no least-privilege authorization and no way to govern them with existing identity infrastructure.
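The arithmetic behind that figure can be checked in a few lines. The variable names are illustrative; the inputs are the numbers quoted above.

```python
# Back-of-the-envelope check of the deployment estimate quoted above.
employees = 10_000
share_running_mcp = 0.1528  # 15.28% of employees
servers_per_adopter = 2     # average MCP servers per adopting employee

adopters = round(employees * share_running_mcp)
deployments = adopters * servers_per_adopter

print(adopters, deployments)
```

That works out to roughly 1,500 employees and just over 3,000 MCP deployments, consistent with the total cited.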

MCP tokens and credentials effectively become high-value access points, yet most organizations have not extended identity controls to cover them. The analogy to early API sprawl is intentional and apt: the same pattern of rapid deployment and limited visibility played out with APIs, and MCP is repeating it faster.

MCP's authorization model has documented structural failures: once an agent authenticates to an MCP server, it implicitly gains access to every tool that server exposes, with no per-tool credential check. Tools share the server's execution context, meaning a tool invoked with low-sensitivity input can be induced to perform high-sensitivity actions using the server's ambient privileges.

The Vercel breach in April 2026 made the OAuth attack surface concrete. A third-party AI tool with an active OAuth grant became the entry point. The question it left open — how many similar grants exist in your environment, and who's watching them — is exactly what SlashID is building toward.

Machine identities now vastly outnumber human identities in the enterprise, and AI is accelerating that imbalance. AI agents introduce identities that are dynamic, ephemeral, and often created without clear ownership. Traditional identity governance models, designed around predictable human behavior, are not equipped to manage identities that can appear, act, and disappear in seconds.

What AI Access Control Looked Like Before

Enterprise identity and access management was designed for humans. A user logs in, gets a role, and that role determines what they can reach. Even non-human identity tooling — service accounts, API keys, workload identities — was built around predictable, static actors with known lifecycles.

AI agents break every assumption that architecture rests on. An agent doesn't log in once and get a role. It operates under a service account with OAuth tokens granted across multiple applications, calls MCP servers that each carry their own credentials, and may spawn sub-agents that inherit permissions through on-behalf-of token passing. The access footprint is dynamic, the credential chain is often undocumented, and the permission scope is almost always broader than what the specific task requires.

Security teams responding to this were doing it manually: auditing OAuth grants in Google Admin Console or Microsoft Entra one application at a time, reviewing MCP server deployments through developer self-reporting, and checking service account permissions in spreadsheets that were outdated before anyone read them. The gap between what access existed and what access was visible was, in most environments, enormous, and it grew every week as new agents went into production.

The monitoring layer wasn't helping. Alert fatigue from generic IAM tools meant that a new OAuth grant to an AI application looked identical to every other OAuth grant. There was no mechanism to flag that a new MCP server had connected to production Salesforce data under a service account that already had filesystem access and calendar read permissions. The visibility simply didn't exist at that granularity.

What's Changing Now

SlashID's AI Identity Governance platform is built around what the company calls an access graph — a continuous map of every OAuth 2.0 application, agent credential, MCP server connection, and permission scope across the organization. The Identity Graph provides a unified view of every OAuth 2.0 application authorized across an organization, the scopes each app holds, and which users have granted access.

The MCP capability is where the architecture gets concrete. Rather than treating MCP servers as unmanaged endpoints that developers spin up independently, SlashID's platform registers them within the access graph and enforces least-privilege authorization at the per-tool level, addressing the structural failure where a single MCP authentication grants access to all tools on that server. It also provides continuous monitoring for anomalous access patterns across agent-to-tool connections.
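What per-tool enforcement means in practice can be sketched in a few lines. This is not SlashID's implementation or part of the MCP specification; the class names, agent name, server name, and tool names are all hypothetical. The point is only that the check happens on each tool call rather than once at server authentication.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolGrant:
    """One explicit permission: this agent may call this tool on this server."""
    agent: str
    server: str
    tool: str


class PerToolAuthorizer:
    """Checks every tool call against explicit grants (hypothetical design)."""

    def __init__(self, grants):
        self._grants = set(grants)

    def is_allowed(self, agent, server, tool):
        return ToolGrant(agent, server, tool) in self._grants

    def call(self, agent, server, tool, handler, *args):
        # Deny by default: being authenticated to the server is not enough.
        if not self.is_allowed(agent, server, tool):
            raise PermissionError(f"{agent} may not call {server}/{tool}")
        return handler(*args)


authorizer = PerToolAuthorizer(
    [ToolGrant("support-agent", "crm-mcp", "read_contact")]
)

# Allowed: the grant names this exact tool.
result = authorizer.call(
    "support-agent", "crm-mcp", "read_contact", lambda cid: {"id": cid}, 42
)

# Denied: same agent, same server, different tool.
try:
    authorizer.call(
        "support-agent", "crm-mcp", "delete_contact", lambda cid: None, 42
    )
    denied = False
except PermissionError:
    denied = True

print(result, denied)
```

Under server-level authentication both calls would succeed, because the agent has already been admitted to `crm-mcp`; with a per-tool check only the granted tool is reachable.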

The access graph-native claim matters because it changes the detection logic. Traditional IAM tools are built around policies applied to individual identities. An access graph lets you see that an AI agent operating under service account A, with OAuth token B granting calendar read and email write permissions, has also been authorized through an MCP connection to a production database that service account A has no documented business reason to reach. That lateral movement risk is invisible to policy-based tools and visible in a graph representation.
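The scenario in that paragraph can be modeled as a small directed graph. The sketch below is an illustration under stated assumptions, not SlashID's data model: node names, the adjacency-dict representation, and the `documented` mapping of approved access are all hypothetical.

```python
from collections import deque

# Hypothetical access graph: identity -> credentials -> resources.
edges = {
    "service-account-A": ["oauth-token-B", "mcp-server-1"],
    "oauth-token-B": ["calendar:read", "email:write"],
    "mcp-server-1": ["prod-database"],
}

# Access with a documented business justification, per identity.
documented = {"service-account-A": {"calendar:read", "email:write"}}


def reachable_resources(graph, start):
    """Breadth-first walk; leaf nodes are treated as resources or scopes."""
    seen, queue, leaves = {start}, deque([start]), set()
    while queue:
        node = queue.popleft()
        children = graph.get(node, [])
        if not children and node != start:
            leaves.add(node)
        for child in children:
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return leaves


def undocumented_access(graph, identity, documented):
    """Resources the identity can reach that nobody has justified."""
    return reachable_resources(graph, identity) - documented.get(identity, set())


flagged = undocumented_access(edges, "service-account-A", documented)
print(flagged)
```

A per-identity policy check sees only the direct grants on service account A; the traversal surfaces the production database because it follows the MCP connection as one more edge.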

Built-in detections fire automatically across identity providers for risky scope grants and anomalous OAuth 2.0 applications — apps requesting high-privilege scopes like full email access, directory management, or drive write access. For incident response, the platform allows security teams to immediately identify every user affected by a compromised OAuth application and understand exactly what level of access was exposed, rather than working through admin consoles application by application.
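Both ideas, flagging risky scope grants and computing who is exposed by a compromised application, reduce to simple queries once grants are collected in one place. The following is a minimal sketch under assumed data: the scope strings, application names, and user names are hypothetical placeholders, not real identity-provider scope identifiers or SlashID's detection rules.

```python
from dataclasses import dataclass

# Hypothetical scope names standing in for full email access,
# directory management, and drive write access.
HIGH_PRIVILEGE_SCOPES = {"mail.full_access", "directory.manage", "drive.write"}


@dataclass(frozen=True)
class Grant:
    user: str
    app: str
    scopes: frozenset


def risky_grants(grants):
    """Grants that include at least one high-privilege scope."""
    return [g for g in grants if g.scopes & HIGH_PRIVILEGE_SCOPES]


def blast_radius(grants, compromised_app):
    """Map each affected user to the scopes the compromised app held for them."""
    exposure = {}
    for g in grants:
        if g.app == compromised_app:
            exposure.setdefault(g.user, set()).update(g.scopes)
    return exposure


grants = [
    Grant("alice", "notes-ai", frozenset({"calendar.read"})),
    Grant("bob", "notes-ai", frozenset({"mail.full_access", "calendar.read"})),
    Grant("carol", "chart-tool", frozenset({"drive.write"})),
]

flagged_users = sorted(g.user for g in risky_grants(grants))
exposed = blast_radius(grants, "notes-ai")
print(flagged_users)
print(exposed)
```

The second query is the incident-response case: given one compromised application, it returns every affected user and the access exposed, without walking admin consoles one application at a time.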

Our Take

AI Security Take

SlashID's launch puts a specific architectural answer on a problem that most enterprises are still measuring rather than solving. The MCP governance gap is real, documented, and widening every month as agent deployments scale faster than identity infrastructure extends to cover them.

The questions worth pressing: How does the access graph handle ephemeral agents that authenticate, execute a task, and terminate — the identity lifecycle problem that traditional IAM is worst at? What's the enforcement mechanism when a risky scope grant is detected? Detection without revocation capability at speed is a dashboard, not a control. And how does the platform handle the multi-MCP-server environment where a single agent workflow touches six different tool servers in sequence, each with its own credential?

Those questions aren't rhetorical gaps — they're the procurement checklist. If SlashID's answers hold up, this is a meaningful addition to the AI access control category at exactly the right moment.

Organizations evaluating their agentic AI security posture should explore AI Access Control and AI Threat Detection platforms in the GAIG marketplace — and if your current IAM tooling has no MCP server awareness, that gap is worth prioritizing before the next OAuth incident makes it urgent.