Nudge Security today (March 24, 2026) announced the launch of AI agent discovery capabilities in research preview. The new feature gives security teams immediate visibility into the autonomous agents employees are building across low-code platforms, mapping each one to its human owner, data connections, permissions, and associated risks without requiring any new deployment.

This matters because agentic AI has crossed a critical threshold. Employees are no longer just chatting with models — they are now building autonomous systems that can pull data from multiple sources, trigger actions in other tools, make decisions, and run on schedules without constant human input. What used to require a developer and weeks of work can now be assembled in an afternoon by a business user.

Consider a revenue operations analyst who uses Salesforce Agentforce to create an agent that automatically pulls fresh pipeline data, enriches it with external market signals, updates the CRM, and posts a daily summary to the sales leadership Slack channel. The agent runs silently every morning. Until today, security and governance teams had no reliable way to know it existed, who built it, what customer data it could access, or whether its connections were properly secured. Nudge’s discovery changes that equation by catching the agent at the exact moment it is published.

Key Terms

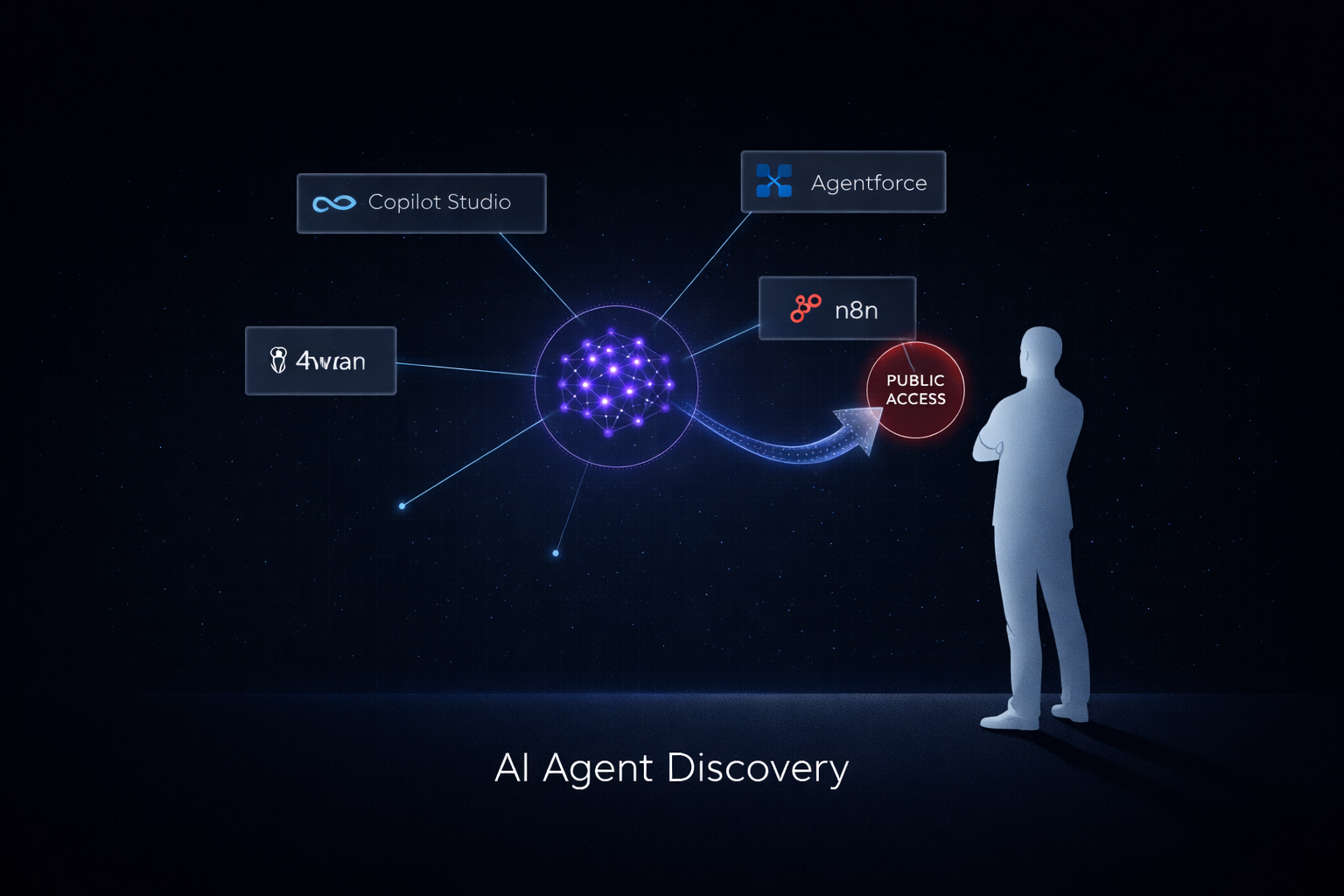

AI Agent Discovery

Continuous scanning that identifies autonomous agents the instant they are created and published in low-code builders, providing an up-to-date inventory of ownership and capabilities.

Shadow AI Agents

Autonomous agents built outside approved governance channels that can independently access data, trigger workflows, and interact with systems without central oversight or visibility.

Agentic AI

AI systems that go beyond answering questions to execute multi-step tasks, make decisions, and interact with other applications and data sources on behalf of users.

MCP Connections

Links built on the Model Context Protocol (MCP) that let agents reach remote tools and data sources. They are often configured without strong authentication, which makes them a frequent high-risk vector in agent deployments.

Nudge Guardrails

Real-time notifications sent directly to the agent’s human creator asking for justification, additional controls, or remediation before the agent becomes active.

Conditions Driving the Launch

Agentic AI has moved from pilot projects to daily business operations so quickly that traditional SaaS discovery tools — built for static apps and chatbots — are now completely blind to the new layer of autonomous risk. Security teams are struggling to keep pace with how fast employees can create powerful agents that act independently.

Employees are building custom agents in low-code platforms like Microsoft Copilot Studio, Salesforce Agentforce, ServiceNow, n8n, Tines, Workato, and Abacus faster than governance teams can review them, often completing an entire agent in a single afternoon.

80% of organizations have already experienced real agentic AI incidents involving improper data exposure or unauthorized system access.

Agentic AI has become the top security concern for nearly half of all security professionals surveyed this year.

Existing discovery tools stop at apps and chatbots; they cannot see the autonomous workflows agents trigger or the data pipelines they quietly establish across multiple systems.

Agents frequently receive broad OAuth scopes and connect to sensitive systems such as CRM, HRIS, and finance tools without any pre-approval or review.

Specific risks compound quietly: publicly accessible agents that anyone on the internet can call, hardcoded credentials baked into the agent logic, unauthenticated MCP connections, high-risk third-party integrations, and orphaned agents whose creators have left the company.

Boards and regulators are starting to demand a living inventory of every AI system that touches corporate data, not just a static list of approved chat tools.

Security teams need to reach the actual human creator at the exact moment the agent is built and deployed, not weeks later during an audit or after an incident has already occurred.

What AI Security Looked Like Before This Shift

A couple of years ago, security teams had reasonable visibility into shadow AI apps and chatbots through connected-app scanning. They could see which users were accessing ChatGPT or Claude and, in some cases, block high-risk prompts. Autonomous agents, however, operated in a different layer that existing tools could not see.

These agents live inside low-code builders and run on their own schedules. There was no reliable way to map who built them, what data sources they could reach, or what actions they were authorized to perform. Risks only surfaced after something went wrong — a compliance audit, a data leak investigation, or when an orphaned agent continued pulling sensitive information long after its creator had left the company.

The governance gap widened dramatically in the last 12–18 months as agentic platforms exploded in popularity. Teams could show auditors a tidy list of approved tools and written policies, but they had almost zero evidence of what agents were actually doing in production. They lacked owner names, permission maps, and risk scores. This left organizations exposed to silent, persistent risks that traditional monitoring could not catch, turning every business unit into a potential source of unmanaged AI exposure.

What Nudge Security Is Actually Changing with AI Agent Discovery

The new capability automatically discovers agents the instant they are published across Microsoft Copilot Studio, Salesforce Agentforce, ServiceNow, n8n, Tines, Workato, Abacus, ChatGPT, and Gemini for Google Workspace. It then links each agent to its human creator and maps every integration, data permission, and external connection in real time.

It surfaces prioritized, actionable risk insights such as publicly accessible agents, hardcoded credentials, unauthenticated MCP connections, high-risk third-party integrations, and orphaned agents. Instead of simply flagging the issue for security, the system immediately sends a “nudge” notification directly to the person who built the agent, asking them to justify the access level or apply additional controls before the agent goes live.
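To make the inventory-plus-nudge idea concrete, here is a minimal sketch of how such a record and its risk checks could be modeled. It is not Nudge Security's implementation or API: the `AgentRecord` and `Connection` shapes, the risk labels, the review order, and the fallback address are all hypothetical, chosen only to mirror the risk types named above. The "high-risk third-party integrations" check is left out because it would need a vendor risk list the article does not describe.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Connection:
    """One integration an agent can reach (hypothetical shape)."""
    target: str
    kind: str = "oauth"           # assumed values: "oauth", "mcp", "api_key"
    authenticated: bool = True


@dataclass
class AgentRecord:
    """Inventory entry tying an agent to its owner and connections (hypothetical)."""
    name: str
    platform: str
    owner_email: Optional[str]    # None models an orphaned agent
    publicly_accessible: bool = False
    has_hardcoded_credentials: bool = False
    connections: list[Connection] = field(default_factory=list)


# Illustrative review order only; the article does not say how risks are ranked.
RISK_ORDER = [
    "publicly_accessible",
    "hardcoded_credentials",
    "unauthenticated_mcp",
    "orphaned",
]


def find_risks(agent: AgentRecord) -> list[str]:
    """Return the risk labels that apply to this agent, in review order."""
    found = set()
    if agent.publicly_accessible:
        found.add("publicly_accessible")
    if agent.has_hardcoded_credentials:
        found.add("hardcoded_credentials")
    if any(c.kind == "mcp" and not c.authenticated for c in agent.connections):
        found.add("unauthenticated_mcp")
    if agent.owner_email is None:
        found.add("orphaned")
    return [risk for risk in RISK_ORDER if risk in found]


def build_nudge(agent: AgentRecord,
                fallback: str = "security@example.com") -> Optional[dict]:
    """Build a notification for the agent's creator, or None if nothing is flagged."""
    risks = find_risks(agent)
    if not risks:
        return None
    return {
        # Orphaned agents have no creator to reach, so route to a fallback address.
        "to": agent.owner_email or fallback,
        "agent": agent.name,
        "risks": risks,
        "ask": "Please justify this access or apply additional controls.",
    }


# Example: an agent like the revenue operations scenario, with one unauthenticated MCP link.
agent = AgentRecord(
    name="daily-pipeline-summary",
    platform="Salesforce Agentforce",
    owner_email="revops.analyst@example.com",
    connections=[
        Connection("salesforce"),
        Connection("market-signals", kind="mcp", authenticated=False),
    ],
)
nudge = build_nudge(agent)
```

The design point the sketch tries to capture is that the notification is addressed to the agent's owner rather than only to a security queue, with the security team as a fallback when no owner can be found.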

Existing Nudge customers do not need a new deployment or any installed software agent, because the feature builds on the connected-apps scanning infrastructure already in place. Although it is currently in research preview, it already delivers a living inventory plus practical guardrails that let security teams make informed decisions without slowing down legitimate business innovation or forcing teams to play constant catch-up.

Our Take

This launch marks a meaningful step from securing static AI tools to actually governing dynamic, autonomous agents with clear accountability tied directly to the human who created them. For the first time, governance teams can move beyond hoping policies are followed and instead maintain a living, auditable record of every agent, its owner, its permissions, and its risk profile.

Instead of discovering problems only after a breach or during a painful audit, organizations now have concrete evidence they can show regulators and boards: who built what, what data it touches, and what controls are in place. The “nudge” mechanism adds a human layer that keeps innovation moving while still enforcing accountability at the point of creation.

Over time, companies that adopt this approach will be able to embrace the speed and creativity of agentic AI without turning security operations into an endless game of whack-a-mole. It transforms shadow-agent chaos into governed, defensible innovation — giving governance teams the visibility and tools they need to stay ahead rather than constantly reacting.