On 7 May 2026, negotiators for the Council of the European Union and the European Parliament reached a provisional political agreement on targeted amendments to the EU AI Act. The changes form part of the Omnibus VII simplification package, which aims to reduce administrative burdens on businesses while preserving the regulation's core risk-based framework.

The agreement delays the application of high-risk AI system obligations, introduces a new prohibition on AI-generated non-consensual intimate content and child sexual abuse material (CSAM), strengthens exemptions for SMEs and small mid-caps, and clarifies the interaction between the AI Act and sectoral legislation such as the Machinery Regulation.

“Today’s agreement on the AI Act significantly supports our companies by reducing recurring administrative costs. It ensures legal certainty and a smoother implementation… while stepping up the protection of children.”

Marilena Raouna, Deputy Minister for European Affairs of Cyprus

This marks the first major deliverable under the EU’s “One Europe, One Market” simplification roadmap and responds to recommendations in the Letta and Draghi reports on boosting European competitiveness.

Conditions Driving This Agreement

Intense pressure to improve EU competitiveness and reduce regulatory burden following the Letta and Draghi competitiveness reports.

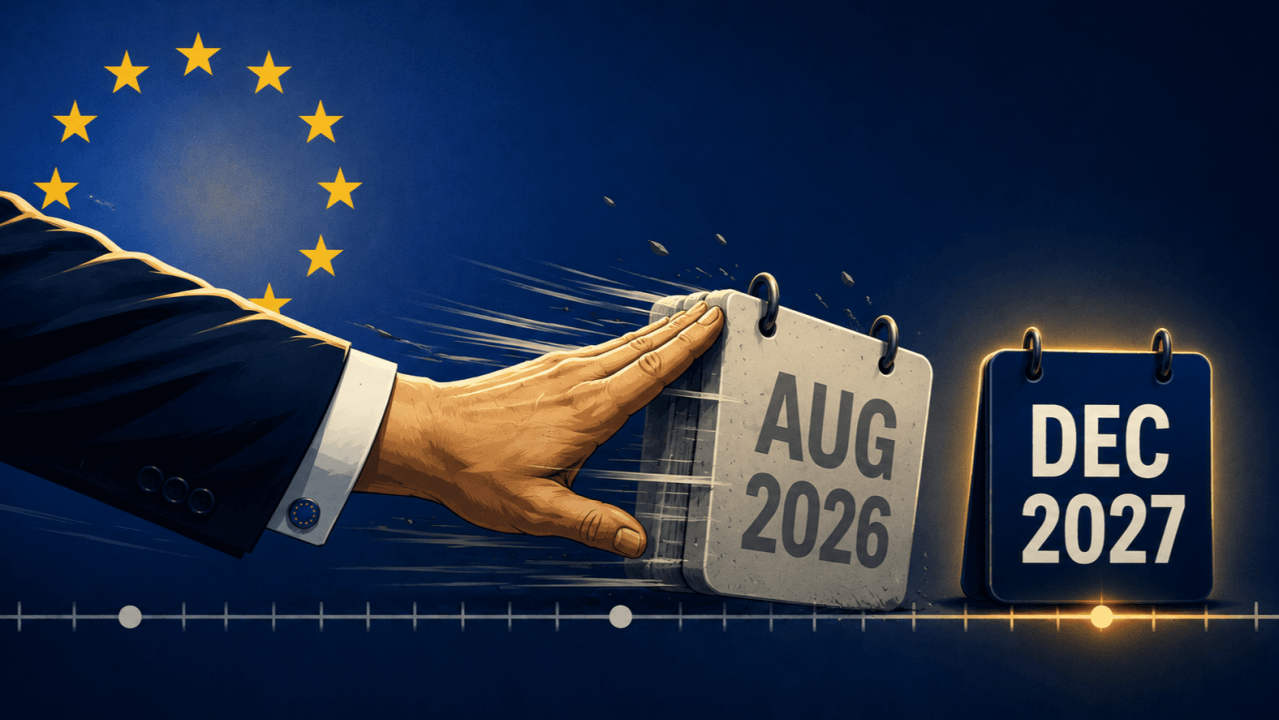

Recognition that the original high-risk compliance deadline of August 2026 was unrealistic due to missing harmonised standards, notified bodies, and technical infrastructure.

Strong industry feedback highlighting implementation challenges and high compliance costs, particularly for SMEs and small mid-caps.

The need to resolve overlaps and conflicts between the AI Act and existing sectoral regulations (machinery, medical devices, etc.).

Political priority to deliver tangible simplification results under the new “One Europe, One Market” agenda.

Growing public and political concern over harmful AI applications, such as “nudification” tools and deepfake intimate content.

Desire to maintain the EU’s leadership in trustworthy AI while making rules more practical and innovation-friendly.

The need for clearer governance roles between the EU AI Office and national authorities.

What EU AI Act Implementation Looked Like Before

Prior to this agreement, organizations faced significant uncertainty and pressure. The original EU AI Act timeline required high-risk systems to comply from 2 August 2026, a deadline many considered unfeasible given the lack of supporting standards, conformity assessment bodies, and technical guidance.

Businesses, especially SMEs, struggled with complex requirements, high compliance costs, and overlapping rules with sectoral legislation. Many companies were forced to pause or slow down AI projects due to regulatory ambiguity. Governance teams had to prepare for full obligations without knowing exactly how the AI Act would interact with existing laws in areas like machinery or medical devices.

The absence of adequate regulatory sandboxes and practical implementation tools further complicated preparation. At the same time, a rise in harmful AI uses, particularly non-consensual intimate imagery, increased calls for stronger protections. Overall, the framework risked becoming a barrier to innovation rather than an enabler of trustworthy AI in Europe.

What Changes Now

The provisional agreement introduces several practical changes. High-risk AI system obligations are delayed to 2 December 2027 for stand-alone systems and to 2 August 2028 for high-risk AI embedded in products. A new prohibition bans AI systems that generate non-consensual sexual or intimate content and CSAM.
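For teams maintaining an AI system inventory, the two high-risk dates can be attached to each entry to track remaining lead time. The following is a minimal sketch under stated assumptions: the category labels ("stand-alone", "embedded-in-product"), the function `days_until_obligations`, and the inventory entries are hypothetical illustrations, not legal classifications. The dates reflect the provisional agreement, which still requires formal adoption.

```python
from datetime import date

# Provisional application dates for high-risk obligations, as reported for the
# May 2026 provisional agreement (assumption: unchanged at formal adoption).
# Category labels are hypothetical inventory tags, not legal terms.
HIGH_RISK_DEADLINES = {
    "stand-alone": date(2027, 12, 2),
    "embedded-in-product": date(2028, 8, 2),
}


def days_until_obligations(category: str, today: date) -> int:
    """Return the number of days before high-risk obligations apply to a category.

    Raises KeyError if the category is not one of the hypothetical labels above.
    """
    deadline = HIGH_RISK_DEADLINES[category]
    return (deadline - today).days


# Hypothetical inventory entries for illustration only.
inventory = [
    ("cv-screening-model", "stand-alone"),
    ("robot-arm-vision", "embedded-in-product"),
]

if __name__ == "__main__":
    as_of = date(2026, 5, 7)  # date of the provisional agreement
    for name, category in inventory:
        remaining = days_until_obligations(category, as_of)
        print(f"{name}: {category}, {remaining} days until obligations apply")
```

Keeping the dates in a single lookup table means that only one place needs updating if the final adopted text changes them.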

The deal extends SME exemptions to small mid-caps, reinstates the obligation for providers to register certain high-risk systems in the EU database, and maintains a strict necessity standard for processing special category data for bias mitigation. Regulatory sandboxes are postponed until August 2027, while the timeline for transparency obligations on AI-generated content is shortened to December 2026.

Importantly, the agreement clarifies the relationship with sectoral legislation (e.g., exempting the Machinery Regulation from direct AI Act application in certain cases) and strengthens the role of the EU AI Office while defining exceptions for national authorities. The Commission will also provide additional guidance to help economic operators minimize compliance burdens.

Our Take


This provisional agreement represents a pragmatic and necessary evolution of the EU AI Act. Rather than weakening the regulation, it makes the rules more realistic and implementable while reinforcing protections against the most harmful uses of AI. The extended timelines give organizations, regulators, and standards bodies the breathing room needed to build proper compliance infrastructure.

For enterprises, this is welcome news. Companies now have additional time to conduct thorough risk assessments, implement governance frameworks, and prepare for conformity assessments. However, organizations should not treat the delay as a reason to slow down preparation. The core obligations remain, and early movers will gain a competitive advantage.

The new ban on nudification and CSAM-generation tools also sends a clear signal that the EU remains committed to protecting fundamental rights. Overall, this agreement strikes a better balance between innovation, competitiveness, and citizen protection, and that balance will likely influence global AI regulation discussions in the coming years.

Organizations operating in or with the EU should use the extended timeline to strengthen their AI governance programs, inventory high-risk systems, and engage with upcoming guidance from the Commission and AI Office.